90-Day Plan: Become an AI PM (starting from Zero)

If I Had to Start Over in 2026

If I lost every skill I have tomorrow and had 90 days to get hired as an AI PM, I would not watch a single YouTube video about prompt engineering for the first three weeks.

I would not open ChatGPT. I would not read a blog post about agents. I would not touch a no-code tool.

I would do something most PMs skip entirely.

I would learn how AI systems actually work before I try to build anything with them.

This sounds obvious. It is not what people do. What people do is jump straight to the shiny layer. They learn prompting. They learn vibe coding. They build a chatbot in an afternoon and update their LinkedIn headline to “AI Product Manager.”

Then they sit in an interview. The interviewer asks how they would design evaluations for a RAG-based support system. They freeze.

They have no answer because they skipped the foundation that the answer sits on.

90 days is enough time. But only if you sequence things correctly.

Here is the exact sequence.

Weeks 1 to 3. The Foundation Nobody Wants to Build.

Most PMs hear about AI and start with Generative AI. This is backwards.

Generative AI is a layer that sits on top of machine learning. Machine learning sits on top of data systems. If you do not understand the layers below, you cannot reason about the layer above.

Week 1 is about machine learning at a PM level. Not math. Not code. Concepts.

What is supervised learning? What is unsupervised learning?

What is the difference between a classification problem and a regression problem?

When your team says “we trained a model,” what does that actually mean?

What did they feed it? What did they optimise for? What could go wrong?

You are not learning this to become a data scientist.

You are learning this so you can sit in a design review and know whether the team picked the right approach.

If you cannot do that, you are not an AI PM. You are a project manager with a fancy title.

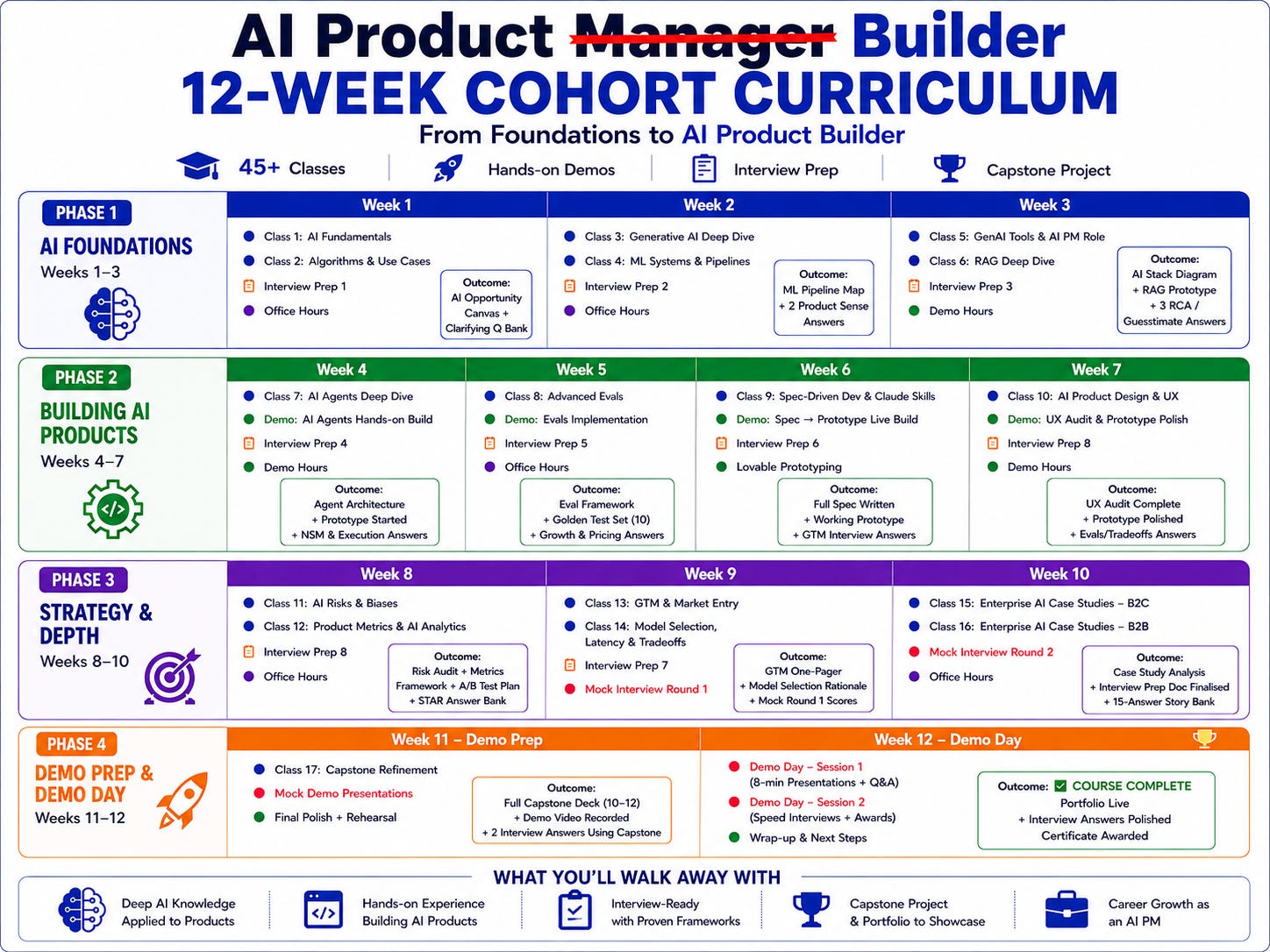


Week 2 is about the AI Flywheel.

This is the concept that separates AI products from regular software.

In regular software, a feature works the same way on day 1 and day 1000.

In AI, the product should get smarter the more people use it. Users generate data. Data improves the model. The better model creates better user experiences. Better experiences bring more users.

If you cannot design this loop for your product, you do not have an AI strategy.

You have a feature with an API call.

Week 3 is about data pipelines.

This is the week that will feel the most boring and will be the most valuable.

Your AI is only as good as the data feeding it.

Dirty data. Biased data. Missing data. Poorly labelled data.

These are not engineering problems. These are product problems.

The PM who understands data pipelines catches issues in the design phase. The PM who does not catches them in production, after users have already had a bad experience.

By the end of Week 3, you should be able to whiteboard a basic ML system. Data in. Feature engineering. Model training. Prediction. Feedback loop. If you cannot draw this, you are not ready for what comes next.
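If the whiteboard version feels abstract, here is a toy sketch of the same loop in Python. It is not how a real team would build it. The tickets, the single word-count feature, and the threshold "model" are all made up for illustration. The point is only to show the five boxes connected end to end.

```python
# Toy ML loop: data in -> feature engineering -> training -> prediction -> feedback.
# All data and names are hypothetical.

def extract_features(ticket_text):
    """Feature engineering: reduce raw text to one number (its word count)."""
    return len(ticket_text.split())

def train(examples):
    """'Training': pick the word-count threshold that gets the most labels right."""
    best_threshold, best_correct = 0, -1
    for threshold in range(50):
        correct = sum(
            (extract_features(text) > threshold) == is_complex
            for text, is_complex in examples
        )
        if correct > best_correct:
            best_threshold, best_correct = threshold, correct
    return best_threshold

def predict(threshold, ticket_text):
    """Prediction: True means 'route this ticket to a senior agent'."""
    return extract_features(ticket_text) > threshold

# Data in: labelled examples (text, is_complex).
training_data = [
    ("reset password", False),
    ("where is my invoice", False),
    ("the export job fails every night after the last upgrade and corrupts the report", True),
    ("billing shows two charges and the refund from last month never arrived at all", True),
]
first_model = train(training_data)

# Feedback loop: the model gets a ticket wrong, a user corrects it, we retrain.
new_ticket = "app crashes on login"
prediction = predict(first_model, new_ticket)   # the model says "not complex"
training_data.append((new_ticket, True))        # the user says it was complex
retrained_model = train(training_data)
```

Notice what the feedback step does. One corrected label moves the threshold, and the retrained model now routes that ticket correctly. That is the flywheel from Week 2 in miniature.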

Weeks 4 and 5. Algorithms and Case Studies.

You do not need to derive the math behind logistic regression.

You need to know when to use it.

Here is a real scenario.

Your team is deciding between a decision tree and a linear model for a pricing feature.

The engineer explains the trade-offs. If you do not understand what either approach does, you are sitting in that meeting as a spectator.

You are waiting for someone else to make a decision that is yours to make.

Week 4: learn the three algorithms that cover 80% of PM-relevant AI decisions.

Linear regression for predicting continuous values.

Logistic regression for classification.

Decision trees for complex, non-linear problems.

For each one, learn what it does, when it works, when it breaks, and what the output looks like.
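To make "what the output looks like" concrete, here is a minimal sketch of all three in plain Python. The data, weights, and rules are invented for illustration, and the logistic model and the tree are hand-written rather than trained. What matters is the shape of each output: a number, a probability, and a rule-based decision.

```python
import math

# Linear regression: fit y = slope * x + intercept by ordinary least squares.
def fit_line(xs, ys):
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x

# Hypothetical data: trip distance in km -> fare. Output is a continuous value.
slope, intercept = fit_line([1, 2, 3, 4], [5, 7, 9, 11])
predicted_fare = slope * 5 + intercept

# Logistic regression: a weighted sum squashed into a probability between 0 and 1.
# The weight and bias here are assumed, not learned.
def churn_probability(days_inactive, weight=0.2, bias=-3.0):
    return 1 / (1 + math.exp(-(weight * days_inactive + bias)))

# Decision tree: nested if/else splits. Output is whichever leaf you land in.
def discount_decision(cart_value, is_returning_customer):
    if cart_value > 100:
        return "10% off" if is_returning_customer else "free shipping"
    return "no discount"
```

The tree is the only one of the three you can read out loud to a stakeholder. That readability is often the real reason a team picks it.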

Do not learn these from textbooks. Learn them from product case studies. How Uber uses prediction models for pricing.

How Netflix uses collaborative filtering for recommendations.

How Amazon designs the data flywheel behind Alexa.

Week 5 is entirely case studies. Read 10 to 15 real company teardowns.

How does Lyft balance model accuracy against latency in real-time pricing? How does Amazon decide which category to show a user next? How does Netflix do creative personalisation on the homepage?

Every case study you internalise becomes a mental model you can pull out in a conversation, a strategy discussion, or an interview.

The PMs who sound the sharpest in rooms are the ones with the deepest library of real-world references.

Weeks 6 and 7. Generative AI From First Principles.

Now you are ready for Gen AI. Not before.

Week 6 is about understanding generative AI and how it differs from the AI we covered in the previous weeks. How does generative AI work? What kinds of problems can it solve?

Then go under the hood of generative AI.

What is a transformer? What is a token? What is a context window?

What happens when you increase the temperature from 0.2 to 0.9?

Why does the same prompt give different outputs each time?

Why does the model hallucinate, and when is hallucination more likely?

These are not academic questions. If your AI feature is hallucinating and you do not know whether the problem is the prompt, the temperature, the model, or the retrieval layer, you cannot diagnose it.

You are dependent on an engineer to figure it out. That is a loss of ownership.
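The temperature question is easier to reason about with numbers. Here is a small sketch of temperature-scaled softmax, the standard way a model turns its raw scores into token probabilities. The three scores are made up. Real models score tens of thousands of candidate tokens, and providers may apply other sampling settings on top.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Convert raw scores into probabilities; lower temperature sharpens them."""
    scaled = [logit / temperature for logit in logits]
    max_scaled = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - max_scaled) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

logits = [2.0, 1.0, 0.5]  # hypothetical scores for three candidate next tokens

low = softmax_with_temperature(logits, 0.2)   # top token gets about 0.99
high = softmax_with_temperature(logits, 0.9)  # top token gets about 0.66
```

At 0.2 the model picks the top token almost every time. At 0.9 it picks something else roughly one time in three. The output is sampled from these probabilities, which is why the same prompt can produce different answers.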

Week 7 is prompting. Not “write me a blog post” prompting. Structural prompting.

Chain of Thought. Tree of Thought. Few-shot examples.

System-level constraints. Prompt chaining for multi-step workflows.

These techniques are the difference between an AI feature that works 60% of the time and one that works 95% of the time.

If you are building a product used by millions of people, that 35-point gap is the difference between a feature users trust and a feature users abandon.

By the end of Week 7, you should be able to write a multi-step prompt chain that produces consistent, reliable output for a defined product use case. Not a toy demo. A real workflow.
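Here is a rough sketch of what a prompt chain looks like in code, assuming a support-ticket use case. `call_model` is a hypothetical stand-in for whatever model API you use, and `fake_model` exists only so the example runs without one. The prompts are illustrative, not tuned.

```python
def run_chain(ticket_text, call_model):
    """Three-step chain: classify -> extract facts -> draft a reply.
    Each step's output becomes part of the next step's prompt."""
    category = call_model(
        "Classify this support ticket as BILLING, BUG, or OTHER. "
        "Reply with one word.\n\nTicket: " + ticket_text
    ).strip().upper()
    if category not in {"BILLING", "BUG", "OTHER"}:
        category = "OTHER"  # constrain free-form output to a known set

    details = call_model(
        f"The ticket category is {category}. List the key facts from the ticket "
        "as short bullet points.\n\nTicket: " + ticket_text
    )

    reply = call_model(
        f"Write a reply to a {category} ticket using only these facts:\n{details}"
    )
    return {"category": category, "details": details, "reply": reply}

def fake_model(prompt):
    """Hypothetical stub that mimics model responses for each step."""
    if prompt.startswith("Classify"):
        return " bug\n"
    if prompt.startswith("The ticket category"):
        return "- export fails nightly"
    return "Sorry about the failing export. We are looking into it."

result = run_chain("The export job fails every night.", fake_model)
```

The validation after the first step is the part most demos skip. A chain is only as reliable as its weakest hand-off, so every step needs a defined output format and a fallback.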

Weeks 8 and 9. Prototyping. The Phase That Changes Your Career.

Everything before this was understanding. This is where you start building.

The gap between PMs who understand AI and PMs who build with AI is the largest salary gap in product management right now. The difference is not 10% or 20%. It is 2x to 3x.

Week 8: build your first working prototype.

Not a mockup. Not a slide deck. A working thing where the AI takes an input, processes it, and returns an output a real user can interact with.

Use Cursor. Use Replit. Use Claude. The tools are too good now for any PM to say “I cannot build anything.” You do not need to write production code. You need to string together an API, a prompt, and a simple interface.

Pick a real problem you face at work. Build a tool that solves it in an afternoon. When you walk into a meeting and show your team a working prototype instead of a spec, the dynamic changes permanently. You stop being the person who requests things. You become the person who builds things.

Week 9: Make the prototype reliable.

This is where most vibe coding efforts die.

The prototype works 70% of the time. The PM calls it done. Posts it on LinkedIn. Gets some likes.

But 70% reliability is not a product. It is a demo.

Week 9 is about model control. Temperature tuning. Choosing between a fast, cheap model and a slow, expensive one for different parts of the workflow.

Building a reliability framework. Understanding the cost-per-query math so you can tell leadership “this feature costs X per user per month” and mean it.
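The cost-per-query math is simple enough to sketch. Every number below is a placeholder I made up, not any vendor's real price or your real usage. Swap in your provider's current per-token rates and your own traffic data.

```python
def cost_per_user_per_month(
    input_tokens,
    output_tokens,
    price_in_per_million,
    price_out_per_million,
    queries_per_user_per_month,
):
    """Return (cost of one query, monthly cost per user) in the same currency."""
    cost_per_query = (
        input_tokens * price_in_per_million
        + output_tokens * price_out_per_million
    ) / 1_000_000
    return cost_per_query, cost_per_query * queries_per_user_per_month

# Placeholder assumptions: 2,000 prompt tokens and 500 response tokens per query,
# 3.00 per million input tokens, 15.00 per million output tokens, 40 queries a month.
cost_per_query, monthly_cost = cost_per_user_per_month(2000, 500, 3.0, 15.0, 40)
# With these numbers: 0.0135 per query, 0.54 per user per month.
```

Run the same function with a cheaper model's rates for the easy steps of your workflow and you have the start of a routing argument you can take to leadership.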

These are PM decisions. Not engineering decisions. The PM who owns these trade-offs owns the product. The PM who delegates them owns a spec.

Weeks 10 and 11. RAG, Agents, and Evals.

If you have done everything above, you are in the top 10% of PMs by AI fluency.

The top 1% knows three more things. RAG systems. AI agents. And evals.

RAG

Almost every enterprise AI product is a RAG system. The reason is simple. GPT does not know your company data. Claude does not know your Q3 metrics. No off-the-shelf model knows your customer support documentation.

RAG bridges this gap. It retrieves relevant chunks from your private data and feeds them to the model so it can generate answers grounded in your specific context.

If you are PMing an enterprise AI product and you cannot explain how RAG works, how chunking affects retrieval quality, or what a vector database does, you cannot debug the most common failure mode: the system returning wrong or irrelevant answers.
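A stripped-down sketch of the retrieval step makes this easier to picture. To keep it runnable with no dependencies, it swaps a real embedding model and vector database for word counts and a sorted list. That swap is an assumption for illustration. The chunk, embed, compare, retrieve sequence is the part that carries over.

```python
import math
from collections import Counter

def chunk(text, size):
    """Split a document into fixed-size chunks of `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]

def embed(text):
    """Stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0

def retrieve(question, chunks, top_k=1):
    """Stand-in for a vector database lookup: rank chunks by similarity."""
    query_vector = embed(question)
    ranked = sorted(chunks, key=lambda c: cosine(query_vector, embed(c)), reverse=True)
    return ranked[:top_k]

# Hypothetical private documentation.
docs = (
    "Refunds are processed within five business days. "
    "Password resets require a verified email address."
)
chunks = chunk(docs, 7)

question = "how long do refunds take"
context = retrieve(question, chunks)[0]
prompt = f"Answer using only this context:\n{context}\n\nQuestion: {question}"
```

Try changing the chunk size from 7 to 4. The refund sentence gets cut in half, and the retrieved chunk no longer contains the answer. That is what "chunking affects retrieval quality" means in practice.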

Agents

These are AI systems that do not just respond. They act. They plan a multi-step workflow, use external tools, and execute tasks autonomously. The PM challenge is different here. You need to design guardrails, failure states, and human-in-the-loop checkpoints for a system that makes its own decisions.
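Here is one way those three design decisions could look in code. This is a simplified sketch. In a real agent the model chooses each step as it goes, while here the plan is a fixed list so the control logic is easy to see. The tool names, the step limit, and the `approve` callback are all hypothetical.

```python
ALLOWED_TOOLS = {"lookup_order", "issue_refund"}  # guardrail: explicit allow-list
NEEDS_APPROVAL = {"issue_refund"}                 # human-in-the-loop checkpoint
MAX_STEPS = 5                                     # guardrail: bounded autonomy

def run_agent(plan, tools, approve):
    """Execute (tool_name, argument) steps and log what happened to each."""
    log = []
    for step_number, (tool_name, argument) in enumerate(plan):
        if step_number >= MAX_STEPS:
            log.append(("stopped", "step limit reached"))
            break
        if tool_name not in ALLOWED_TOOLS:
            log.append(("blocked", tool_name))
            continue
        if tool_name in NEEDS_APPROVAL and not approve(tool_name, argument):
            log.append(("rejected_by_human", tool_name))
            continue
        try:
            log.append(("ok", tools[tool_name](argument)))
        except Exception as error:  # failure state: record it and stop
            log.append(("failed", str(error)))
            break
    return log

tools = {
    "lookup_order": lambda order_id: f"order {order_id} found",
    "issue_refund": lambda order_id: f"refunded {order_id}",
}
plan = [("lookup_order", "A1"), ("delete_account", "A1"), ("issue_refund", "A1")]

# A reviewer who declines every risky action.
log = run_agent(plan, tools, approve=lambda tool_name, argument: False)
```

Which tools go on the allow-list, which ones need a human, and what happens on failure are product decisions. The code is short. The decisions are not.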

Now, the most important skill of all three: evals.

Evals are how you measure whether your AI system is good.

This sounds simple. It is the hardest unsolved problem in AI product management. You cannot use traditional metrics. Pass/fail does not work when the output is a paragraph of text. You need deterministic evals for things you can measure objectively. You need probabilistic evals where you use one AI model to judge another.

The PMs who understand evals ship with confidence. They set measurable quality bars. They can defend their decisions to leadership with data.
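A minimal sketch of both kinds of eval, assuming a support-answer use case. `call_judge_model` is a hypothetical stand-in for a second model, and `fake_judge` is a stub so the example runs. The rubric and the passing score of 4 are illustrative choices, not a standard.

```python
def deterministic_eval(answer, must_include, max_words):
    """Objective checks: required facts are present and the answer is short enough."""
    has_terms = all(term.lower() in answer.lower() for term in must_include)
    return has_terms and len(answer.split()) <= max_words

def judge_eval(question, answer, call_judge_model, passing_score=4):
    """Model-as-judge: ask a second model for a 1 to 5 score and apply a quality bar."""
    raw = call_judge_model(
        "Rate from 1 to 5 how well the answer addresses the question. "
        f"Reply with a single digit.\nQuestion: {question}\nAnswer: {answer}"
    )
    try:
        score = int(raw.strip())
    except ValueError:
        return False  # an unparseable judgement counts as a failure
    return score >= passing_score

def pass_rate(results):
    """Share of test cases that passed."""
    return sum(results) / len(results)

def fake_judge(prompt):
    """Hypothetical stub: rewards answers that mention the refund window."""
    return "5" if "five business days" in prompt else "2"

question = "How long do refunds take?"
answers = ["Refunds take five business days.", "Please contact support."]

deterministic_results = [
    deterministic_eval(a, ["five business days"], max_words=20) for a in answers
]
judge_results = [judge_eval(question, a, fake_judge) for a in answers]
quality = pass_rate(judge_results)
```

The pass rate is the number you take to leadership. "We ship when this suite is above 95%" is a quality bar. "It looked good when I tried it" is not.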


Weeks 12 and 13. Interview Preparation and Portfolio.

You can know all of the above. If you cannot communicate it under pressure in 45 minutes, none of it counts.

AI PM interviews do not ask you to design an alarm clock for the blind. They ask questions like these:

How would you measure the success of GPT 5.0?

Design a reliability framework for an AI shopping assistant.

ChatGPT’s regeneration rate has increased. How would you investigate?

How would you price Gemini?

Design a RAG system for TikTok content moderation.

Imagine Google made its model free, and it is better than paid GPT. You are Sam Altman. What do you do?

If you have never practised these questions, you will fumble.

Not because you do not know the concepts. Because you have not built the muscle of structuring an AI PM answer under time pressure.

The structure matters.

Start by clarifying the AI system architecture.

Define success metrics specific to AI products.

Address trade-offs unique to probabilistic systems. Show cost-per-query awareness.

Show eval thinking. Demonstrate that you can move between product sense and technical depth in the same answer.

Week 12 is practice. Answer questions out loud. Record yourself. Listen back. Find the moments when you hedged, when you went vague, when you lost the technical thread.

Week 13 is portfolio.

Document the prototype you built in Week 8.

Write up two case study analyses from Week 5.

Create a one-page eval framework for an AI feature.

This is your proof of work. It is the difference between “I learned about AI” and “I built with AI.”

This plan will make you an absolute beast after 12 to 13 weeks. 95% of PMs cannot do these things right now. The ones who can are not smarter. They just did the work in the right order. Most of this plan maps directly to what I teach.

If you want to skip the self-study phase, check our highest-rated AI PM course (including AI PM interview preparation): 4.9/5, 600+ enrollments. Use NYE26 for 60% off.

About Author

Shailesh Sharma. I help PMs and business leaders excel in Product, Strategy, and AI using First Principles Thinking. For more, check out my Live Webinars, AI Product Management Course, PM Interview Mastery Course, Cracking Strategy, and other resources.
