AI Evals - Part 1

Step 1 is creating a Golden Dataset

Part 1: Creating a Golden Dataset

Part 2: Deterministic Evals

Part 3: LLM as Judge

Part 4: Problem of Retrieval and Generation

Right now everyone is talking about Vibe Coding.

You open Cursor, you type a prompt, and the code just works. It is great for building a prototype over the weekend.

But here is the problem.

Vibe Coding is terrible for Product Management.

When you are building a feature for millions of users on an app like DoorDash or Zomato, you cannot rely on vibes. You cannot just chat with your bot, see three good answers, and say "Okay, ship it, we are good to go."

That is how you get fired.

Because the moment you go to production, a user is going to ask for "Non-spicy food for my 3-year-old", and your vibes-based AI is going to recommend a Chettinad Chicken or a salad simply because it matched on the word "Chicken" or "Salad".

So today, we are going to stop doing Vibe Checks and start building a Golden Dataset. Evals are useless if you don't have a solid "Ground Truth" to compare against.

For this entire Series, imagine we are Product Managers at a food delivery company. Let’s call it Foodie.

We are building a new AI feature: The Healthy Cravings

The problem statement is simple: You tell the AI what diet you are on, and it finds the exact dish from the thousands of restaurants in your area.

The Vibe Check Failure

You (the PM) open the testing window and type:

Input: I want a high protein salad

AI Reply: Here is a Grilled Chicken Salad from EatFit.

You: Approved

Two days later, a real user types:

Input: I want a high protein dinner under ₹200, but no salads please.

AI Reply: Here is a Grilled Chicken Salad from EatFit.

The user specifically said "no salads". But your AI ignored the negative constraint because it latched onto "high protein".

This is the number one reason AI products fail. They handle the Happy Path well but fail on constraints.

To fix this, we cannot rely on manually chatting for hours, hoping to stumble upon these bugs. We need a 'Master Answer Key', a fixed list of correct answers for every tricky scenario, so we can automatically grade the AI against it. In the industry, this answer key is what we call the Golden Dataset.
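To make "automatically grade the AI against an answer key" concrete, here is a minimal sketch. Everything in it is an assumption for illustration: `fake_ai` is a stand-in for the real model that always returns the same dish (like the failing bot above), and "Egg Bhurji Wrap" is an invented correct answer.

```python
# Hypothetical answer key: query -> set of acceptable dish names.
# "Egg Bhurji Wrap" is an invented dish, used only for illustration.
ANSWER_KEY = {
    "I want a high protein salad": {"Grilled Chicken Salad"},
    "I want a high protein dinner under ₹200, but no salads please.": {"Egg Bhurji Wrap"},
}


def fake_ai(query: str) -> str:
    """Stand-in for the real AI. It ignores constraints and always
    returns the same dish, mimicking the vibe-check failure."""
    return "Grilled Chicken Salad"


def grade(ai, answer_key: dict) -> dict:
    """Run every query through the AI and mark it pass (True) or fail (False)."""
    return {query: ai(query) in acceptable for query, acceptable in answer_key.items()}


results = grade(fake_ai, ANSWER_KEY)
for query, passed in results.items():
    print("PASS" if passed else "FAIL", "-", query)
```

The happy-path query passes and the "no salads" query fails, without anyone having to chat with the bot by hand.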

Building the Golden Dataset (Step 1)

The goal is to move from manual chatting to an automated test suite.

It is basically an Excel sheet or a JSON file that contains three things:

The User Query (Input)

The Context (The menu data available)

The Ground Truth (What the answer should be)

You cannot build this dataset by just thinking of random questions. You need to structure it into three buckets.

Bucket A: The Simple Retrieval (Happy Path)

These are basic queries to check if the system works at all.

Query: Show me Keto friendly options.

Ground Truth: Any dish with the Keto tag in our database.

Bucket B: The Negative Constraints

LLMs are bad at the word Not. You must test this heavily.

Query: I want something healthy but no broccoli.

Ground Truth: A list of healthy dishes whose ingredients do NOT contain broccoli.

Bucket C: The Numerical/Reasoning Constraints

This checks if your AI can actually do math or logic.

Query: Dinner under ₹300 with at least 20g protein.

Ground Truth: Filter database for price < 300 AND protein >= 20
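The three buckets above can be sketched as plain filters over the menu data. This is only an illustration under stated assumptions: the `MENU` rows, the field names (`tags`, `ingredients`, `price`, `protein_g`) and the dish IDs are all made up, and a real database would have its own schema.

```python
# Hypothetical menu rows. Every ID, name, field, and value is invented.
MENU = [
    {"id": 1, "name": "Paneer Tikka Bowl", "tags": ["keto", "healthy"],
     "ingredients": ["paneer", "capsicum"], "price": 280, "protein_g": 24},
    {"id": 2, "name": "Broccoli Quinoa Salad", "tags": ["healthy"],
     "ingredients": ["broccoli", "quinoa"], "price": 220, "protein_g": 12},
    {"id": 3, "name": "Grilled Chicken Plate", "tags": ["keto", "healthy"],
     "ingredients": ["chicken", "broccoli"], "price": 350, "protein_g": 35},
    {"id": 4, "name": "Egg Bhurji Wrap", "tags": [],
     "ingredients": ["egg", "wheat"], "price": 150, "protein_g": 20},
]


def ground_truth_ids(menu, predicate):
    """Return the sorted IDs of every dish that satisfies the predicate."""
    return sorted(dish["id"] for dish in menu if predicate(dish))


# Bucket A: simple retrieval by tag.
bucket_a = ground_truth_ids(MENU, lambda d: "keto" in d["tags"])

# Bucket B: negative constraint (healthy AND no broccoli).
bucket_b = ground_truth_ids(
    MENU, lambda d: "healthy" in d["tags"] and "broccoli" not in d["ingredients"]
)

# Bucket C: numeric constraints. "Under 300" is strict (<),
# "at least 20g" includes exactly 20 (>=).
bucket_c = ground_truth_ids(
    MENU, lambda d: d["price"] < 300 and d["protein_g"] >= 20
)

print(bucket_a, bucket_b, bucket_c)  # [1, 3] [1] [1, 4]
```

Dish 4 sits exactly on the 20g boundary, which is the kind of edge case a Golden Dataset should pin down explicitly.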

Hands-On Practice: Creating your golden_dataset.jsonl

We are going to do this technically. We don’t use Excel for this because we want to run code on it later. We use a format called JSONL (JSON Lines).

Here is what your file golden_dataset.jsonl should actually look like.

{"id": 1, "query": "Find me a spicy burger", "constraints": ["spicy", "burger"], "expected_ids": [101, 105, 209]}

{"id": 2, "query": "I want dinner under 250 rs, no veg", "constraints": ["<250", "non-veg"], "expected_ids": [304, 308]}

{"id": 3, "query": "Suggest a cheat meal", "constraints": ["high-calorie", "junk"], "expected_ids": [501, 502]}

Why expected_ids?

Notice I didn’t write the text answer in the “Expected” field. I wrote the Dish IDs.

This is crucial. In a Retrieval (RAG) system, the first thing we test is NOT "Did the AI write a nice description of the burger?" The first thing we test is "Did the AI actually find the burger in the database?"

If your AI writes a beautiful description of a burger that doesn’t exist in the database, that is a hallucination.
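Because the dataset stores dish IDs, both loading it and spotting hallucinated dishes take only a few lines. The sketch below is an assumption-laden illustration: `load_golden_dataset` and `hallucinated_ids` are hypothetical helper names, `KNOWN_DISH_IDS` stands in for a real database lookup, and the AI output is invented.

```python
import json

# Keys every record in golden_dataset.jsonl is expected to carry.
REQUIRED_KEYS = {"id", "query", "constraints", "expected_ids"}


def load_golden_dataset(lines):
    """Parse JSONL (one JSON object per line), skipping blank lines."""
    cases = []
    for line_no, line in enumerate(lines, start=1):
        line = line.strip()
        if not line:
            continue
        case = json.loads(line)
        missing = REQUIRED_KEYS - case.keys()
        if missing:
            raise ValueError(f"line {line_no}: missing keys {sorted(missing)}")
        cases.append(case)
    return cases


def hallucinated_ids(returned_ids, known_dish_ids):
    """Return IDs the AI recommended that do not exist in the database."""
    return sorted(set(returned_ids) - set(known_dish_ids))


# Two of the records shown above, inlined so the sketch is self-contained.
SAMPLE_JSONL = """
{"id": 1, "query": "Find me a spicy burger", "constraints": ["spicy", "burger"], "expected_ids": [101, 105, 209]}
{"id": 2, "query": "I want dinner under 250 rs, no veg", "constraints": ["<250", "non-veg"], "expected_ids": [304, 308]}
"""
cases = load_golden_dataset(SAMPLE_JSONL.splitlines())

# Hypothetical: the dish IDs that really exist in the menu database.
KNOWN_DISH_IDS = {101, 105, 209, 304, 308, 501, 502}

# Hypothetical AI output for case 1; 999 is a dish that does not exist.
ai_returned = [101, 999]
fake = hallucinated_ids(ai_returned, KNOWN_DISH_IDS)
print(fake)  # [999]
```

With a real file, the same loader can be fed an open file handle, for example `open("golden_dataset.jsonl", encoding="utf-8")`, since a file object yields its lines one by one.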

The Metric: Retrieval Recall

Before we judge the AI’s personality, we measure Retrieval Recall.

It is a simple math formula:

Recall = (Relevant Items Found) / (Total Relevant Items Needed)

If the user asked for Burgers under ₹200 and there are 10 such burgers in your database, but your AI only found 5 of them, your Recall is 50%.

If your Recall is low, no Prompt Engineering will fix it. The AI cannot recommend a dish it cannot find.
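The recall formula above can be sketched in a few lines. The function name `retrieval_recall` and the dish IDs are assumptions for illustration; the numbers mirror the burger example (10 relevant dishes, 5 found).

```python
def retrieval_recall(expected_ids, retrieved_ids):
    """Recall = relevant items found / total relevant items needed."""
    expected = set(expected_ids)
    if not expected:
        raise ValueError("expected_ids must not be empty")
    found = expected & set(retrieved_ids)
    return len(found) / len(expected)


# Hypothetical IDs: 10 burgers under ₹200 exist in the database.
expected = list(range(1, 11))
# The AI found 5 of them, plus one irrelevant dish (ID 42).
retrieved = [1, 2, 3, 4, 5, 42]

recall = retrieval_recall(expected, retrieved)
print(f"Recall: {recall:.0%}")  # Recall: 50%
```

Note that the irrelevant dish does not lower recall. Recall only asks how many of the needed dishes were found, not how much noise came along with them.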

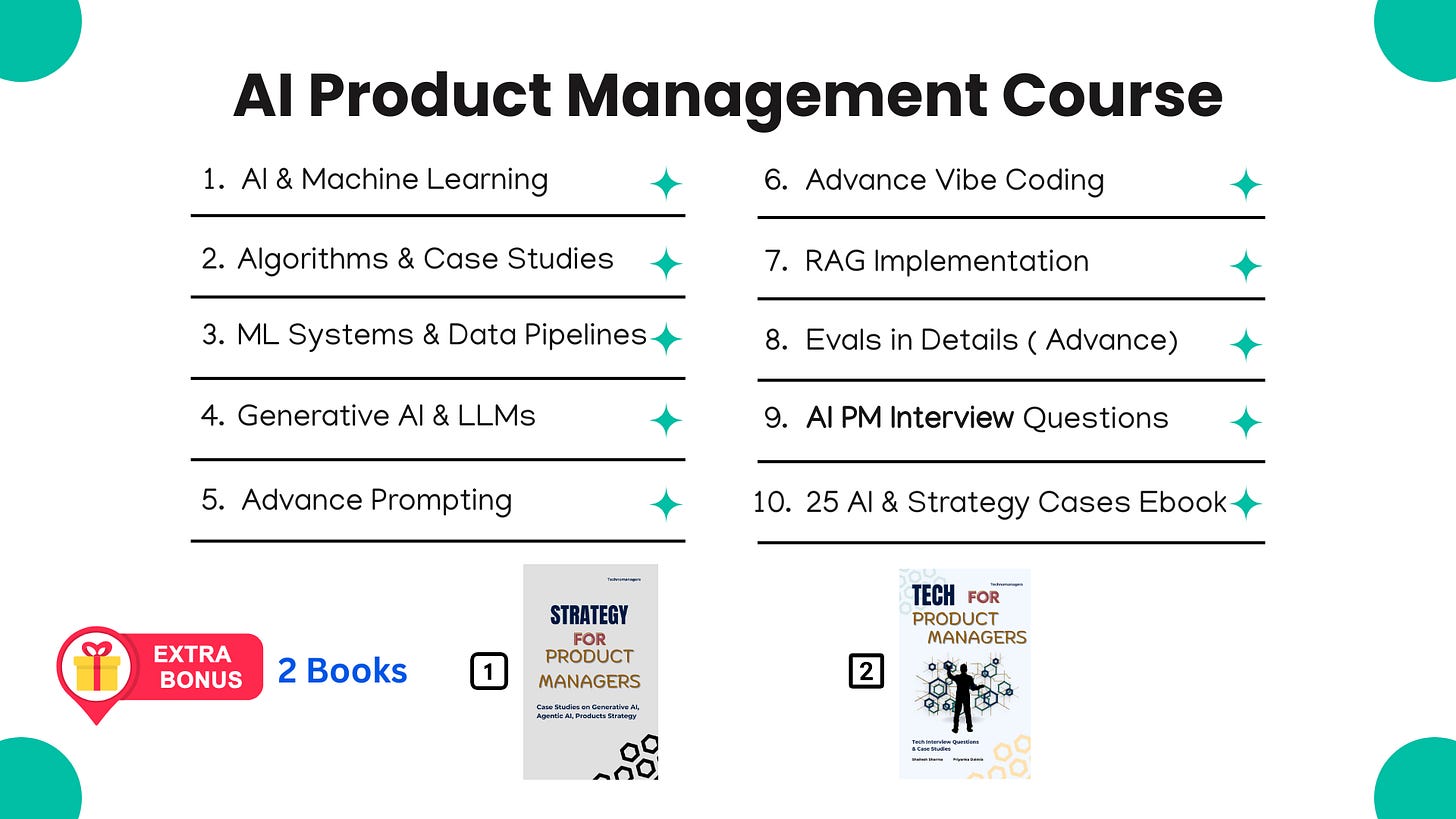

Homework for today

Your task for today is simple: Open a notepad and write down 10 queries for your product.

3 Simple ones.

3 “Negative” ones (No X, Without Y).

4 “Reasoning” ones (Cheaper than X, Better than Y).

In the next lecture, we will stop using our eyes to check the answers.

We will write a small Python script to check the Prices and Calories automatically. Because if the AI says a pizza is low calorie, we want our code to catch that lie instantly.

See you in Lecture 2.

Shailesh Sharma! I help PMs and business leaders excel in Product, Strategy, and AI using First Principles Thinking. For more, check out my AI Product Management Course, PM Interview Mastery Course, Cracking Strategy, and other Resources
