AI Evals - Part 2

Mastering Deterministic Evals

This is Part 2 of our AI Evals series. Read Part 1 here.

In the last lecture, we built our Golden Dataset. We now have our Answer Key to grade the answers.

Here is a mistake I see a lot of Product Managers make. They treat the LLM like a superhuman that can do everything. They feed the user query to GPT-4, get an answer, and then blindly ask GPT-4 again to check if the answer is correct.

Do not do this. Don’t always rely on the LLM as a judge.

There are two types of judging:

Probabilistic - This is what everyone does. You send your AI’s answer to GPT-4 and ask: “Is this a good answer?”

Pros: It understands nuance (tone, politeness, helpfulness).

Cons: It is slow, expensive, and it makes mistakes.

When to use: Only for subjective things like “Was this explanation easy to understand?”

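Deterministic - You check the answer with code. The same input always gives the same verdict.

Pros: It is practically free, instant, and gives the same result every time (as long as the check itself is written correctly).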

Cons: It cannot judge “tone.”

When to use: For facts, numbers, and hard constraints.

Simple concept: use Python for math, use LLMs for vibes, because an LLM can be a confident liar.

Let’s understand with the help of an example. Let’s go back to our Foodie app.

User Query: I have ₹500. I want a chicken burger.

The LLM says: I recommend the Spicy Chicken Burger from Burger King. It is a great deal at ₹350!

The user clicks order. The cart updates. The price is actually ₹550.

The user cancels the order and deletes your app.

Why did this happen? Because the LLM hallucinated the price to fit the user’s prompt. It wanted to be helpful, so it lied about the price to make the user happy.

We need to catch this before the user sees it. Let’s see how we can do this.
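As a first taste, the failure above boils down to a comparison that plain code can make. The sketch below assumes the app can look up the real price in its own catalog. `CATALOG` and `price_matches_catalog` are hypothetical names used for illustration only; the dish and prices are taken from the example.

```python
# Hypothetical catalog: in a real app this would come from the menu database.
CATALOG = {"Spicy Chicken Burger": 550}


def price_matches_catalog(dish_name: str, claimed_price: int) -> bool:
    """Return True only if the price the AI quoted equals the catalog price."""
    actual_price = CATALOG.get(dish_name)
    # An unknown dish cannot be verified, so treat it as a failure.
    return actual_price is not None and actual_price == claimed_price


# The AI claimed 350 but the catalog says 550, so the check fails.
print(price_matches_catalog("Spicy Chicken Burger", 350))  # False
```

No second LLM call is involved: the check is a dictionary lookup and an equality test.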

That is what we will discuss today: Deterministic Evals, especially for math, using Python.

Step 1: Force the Structure to JSON

You cannot automate testing if your AI output is just a blob of text like “Here is a burger...”, because parsing free text has its own challenges.

You must force your engineering team to make the AI output JSON.

Bad Output (Text):

“The Maharaja Mac is a great option. It has 600 calories and costs 240 rupees.”

Good Output (JSON):

JSON

{
  "dish_name": "Maharaja Mac",
  "calories": 600,
  "price": 240,
  "currency": "INR",
  "is_vegetarian": false
}

Once you have JSON, you don’t need AI to check the answer. You can just use code to parse the data and pull out exactly the fields you need.
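To make "just use code" concrete, here is a minimal sketch that parses the output and checks that each field from the example JSON is present with the expected type. `EXPECTED_FIELDS` and `find_schema_problems` are hypothetical names, and treating prices and calories as whole numbers is an assumption, not something the product necessarily requires.

```python
import json

# Assumed schema: field name -> expected Python type (mirrors the example JSON).
# Assumption: prices and calories are whole numbers.
EXPECTED_FIELDS = {
    "dish_name": str,
    "calories": int,
    "price": int,
    "currency": str,
    "is_vegetarian": bool,
}


def find_schema_problems(raw_output: str) -> list[str]:
    """Return a list of problems; an empty list means the output is usable."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return ["not valid JSON"]
    if not isinstance(data, dict):
        return ["top-level value is not a JSON object"]

    problems = []
    for field, expected_type in EXPECTED_FIELDS.items():
        if field not in data:
            problems.append(f"missing field: {field}")
        elif type(data[field]) is not expected_type:
            # Exact type match, so true/false is not accepted as a number.
            problems.append(f"wrong type for field: {field}")
    return problems


good = (
    '{"dish_name": "Maharaja Mac", "calories": 600, "price": 240, '
    '"currency": "INR", "is_vegetarian": false}'
)
bad = '{"dish_name": "Maharaja Mac", "price": "240 rupees"}'

print(find_schema_problems(good))  # []
print(find_schema_problems(bad))
```

The second call reports the missing fields and flags `price`, because "240 rupees" is a string and not a number.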

Step 2: The Unit Tests

For our food delivery app, we need three specific metrics for today.

1. JSON Validity Rate

This is the “Did it break?” test.

Sometimes, the AI forgets a comma or adds extra text. If the output isn’t valid JSON, our app crashes.

Goal: 99.9% Pass Rate.
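A pass rate like this can be measured by trying to parse every raw output in a batch and counting how many succeed. The sketch below is one way to do it; `sample_outputs` is made-up data that reproduces the two failure modes mentioned above (extra text and a missing comma).

```python
import json


def is_valid_json(raw_output: str) -> bool:
    """True if the whole string parses as JSON, False otherwise."""
    try:
        json.loads(raw_output)
        return True
    except json.JSONDecodeError:
        return False


def json_validity_rate(raw_outputs: list[str]) -> float:
    """Fraction of outputs that parse as JSON (0.0 for an empty batch)."""
    if not raw_outputs:
        return 0.0
    valid = sum(is_valid_json(output) for output in raw_outputs)
    return valid / len(raw_outputs)


# Made-up batch of raw model outputs.
sample_outputs = [
    '{"dish_name": "Maharaja Mac", "price": 240}',
    'Sure! Here is your burger: {"dish_name": "Maharaja Mac", "price": 240}',  # extra text
    '{"dish_name": "Maharaja Mac" "price": 240}',  # missing comma
    '{"dish_name": "Truffle Burger", "price": 850}',
]

rate = json_validity_rate(sample_outputs)
print(f"JSON validity rate: {rate:.1%}")  # JSON validity rate: 50.0%
```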

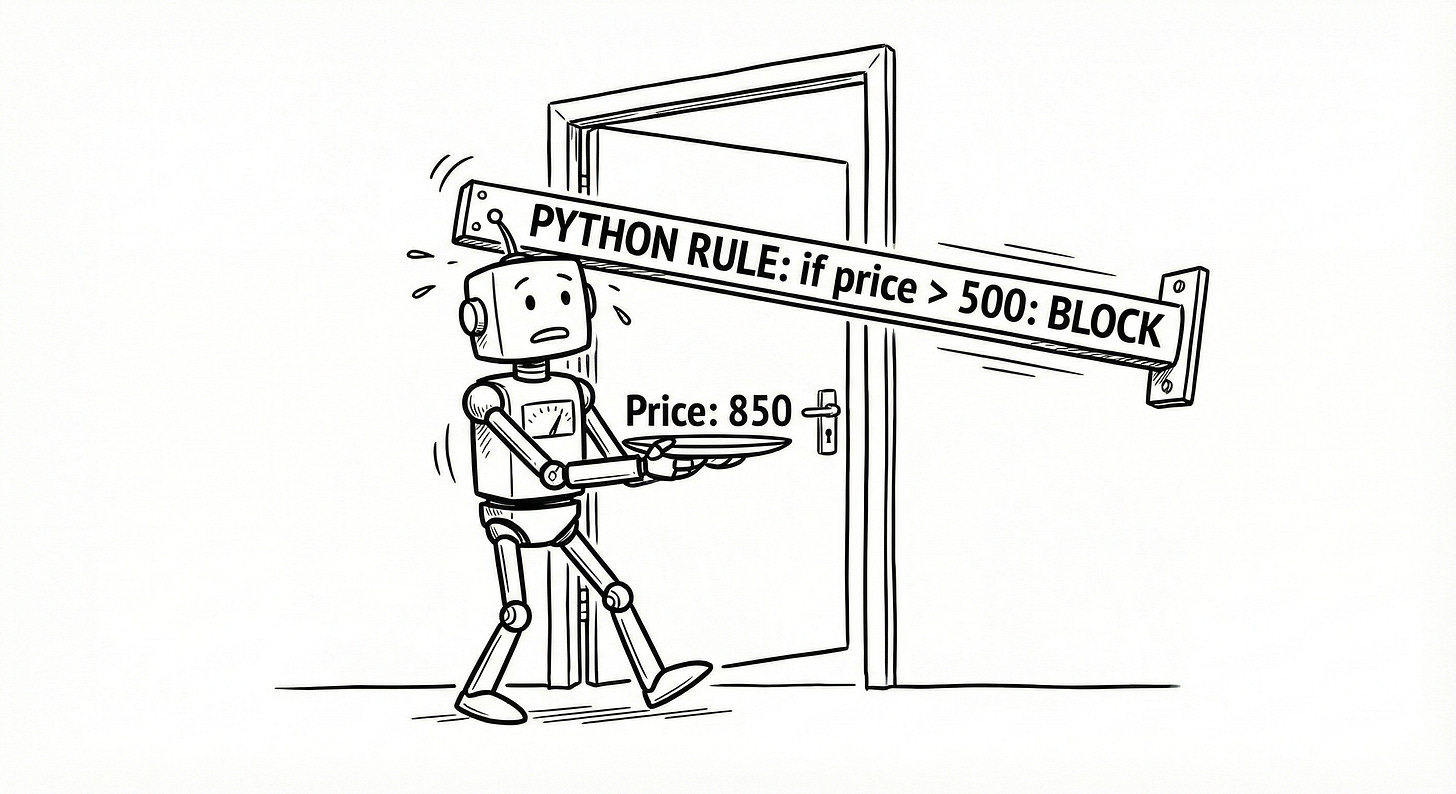

2. Constraint Satisfaction Rate

This is the big one. Did the AI actually follow the rules we set?

Scenario: User said “Under ₹500”.

The Test: We write a simple if statement: if response['price'] <= 500: return PASS, else return FAIL.

This is deterministic. It is binary. There is no maybe. It either followed the instruction or it didn’t.
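Run over a whole dataset, the same if statement becomes a rate. The sketch below assumes each eval row carries the budget from the Golden Dataset and the already-parsed AI response; `eval_rows`, `within_budget`, and the dishes and prices are hypothetical. It keeps the `<=` comparison used above, so a price exactly at the limit passes.

```python
# Hypothetical eval rows: the limit comes from the Golden Dataset,
# the response is the AI output after JSON parsing.
eval_rows = [
    {"limit": 500, "response": {"dish_name": "Maharaja Mac", "price": 240}},
    {"limit": 500, "response": {"dish_name": "Truffle Burger", "price": 850}},
    {"limit": 300, "response": {"dish_name": "Veg Wrap", "price": 280}},
]


def within_budget(response: dict, limit: float) -> bool:
    """PASS (True) only if the response has a numeric price at or below the limit."""
    price = response.get("price")
    # A missing or non-numeric price counts as a failure, not a crash.
    is_number = isinstance(price, (int, float)) and not isinstance(price, bool)
    return is_number and price <= limit


def constraint_satisfaction_rate(rows: list[dict]) -> float:
    """Fraction of rows whose response respects its own limit."""
    if not rows:
        return 0.0
    passed = sum(within_budget(row["response"], row["limit"]) for row in rows)
    return passed / len(rows)


rate = constraint_satisfaction_rate(eval_rows)
print(f"Constraint satisfaction rate: {rate:.0%}")  # Constraint satisfaction rate: 67%
```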

3. Hallucination Rate (Inventory Check)

This is where we check if the item is real.

The Test: Take the dish_name from the AI response and query our SQL database.

Logic: SELECT * FROM menu WHERE name = 'Maharaja Mac'

If the database returns 0 results, the AI made up a fake burger.
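Here is a minimal sketch of that inventory check using Python's built-in `sqlite3` module. The in-memory database, the `menu` table layout, and the dish names are stand-ins for illustration; a real app would connect to its own menu database.

```python
import sqlite3

# In-memory stand-in for the real menu database (table and rows are hypothetical).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE menu (name TEXT PRIMARY KEY, price INTEGER)")
conn.executemany(
    "INSERT INTO menu (name, price) VALUES (?, ?)",
    [("Maharaja Mac", 240), ("Spicy Chicken Burger", 550)],
)


def dish_exists(connection: sqlite3.Connection, dish_name: str) -> bool:
    """True if the menu table has a row with exactly this name."""
    # The ? placeholder keeps AI-generated text out of the SQL string itself.
    row = connection.execute(
        "SELECT 1 FROM menu WHERE name = ? LIMIT 1", (dish_name,)
    ).fetchone()
    return row is not None


# Dish names pulled from a made-up batch of AI responses.
ai_dish_names = [
    "Maharaja Mac",
    "Truffle Burger",
    "Spicy Chicken Burger",
    "Moon Cheese Burger",
]
hallucinated = [name for name in ai_dish_names if not dish_exists(conn, name)]
hallucination_rate = len(hallucinated) / len(ai_dish_names)

print(hallucinated)  # ['Truffle Burger', 'Moon Cheese Burger']
print(f"Hallucination rate: {hallucination_rate:.0%}")  # Hallucination rate: 50%
```

The match here is exact, so "maharaja mac" in lowercase would be flagged as fake. Whether to normalize names before the lookup is a design choice for your team.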

Hands-On Script

Here is what the actual Python evaluation script looks like. You don’t need to be a coder to understand the logic here.

Python

import json

# This comes from our Golden Dataset (Lecture 1)
user_limit = 500

# This comes from the AI Model
ai_response_str = '{"dish_name": "Truffle Burger", "price": 850}'


def evaluate_price_compliance(response_str, limit):
    try:
        # Step 1: Check JSON Validity
        data = json.loads(response_str)

        # Step 2: Check the Math (Constraint Satisfaction)
        if data['price'] <= limit:
            return "PASS: Within Budget"
        else:
            return f"FAIL: Price {data['price']} exceeds limit {limit}"
    except json.JSONDecodeError:
        return "FAIL: AI did not output valid JSON"


# Run the test
print(evaluate_price_compliance(ai_response_str, user_limit))

Output: FAIL: Price 850 exceeds limit 500

See? We didn’t need GPT-4 to tell us the AI failed. A simple 10-line script caught the error instantly.

Summary & Homework

Today was about the “Hard Skills” of testing: Math, Logic, and Structure.

If you are building an AI product, ask your developers this question tomorrow: Are we parsing the AI response as JSON, and do we have automated checks for numerical limits?

If the answer is No, you are not ready for production.

But...

What if the AI suggests a burger that costs ₹200 (passes the price test) and exists in the database (passes the inventory test), but the description says: “This burger is made of wet cardboard and sadness.”?

The code will say PASS. But the user experience is terrible.

Code can check Math. It cannot check Quality.
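The blind spot is easy to reproduce. In the sketch below, `MENU_NAMES`, the dish name, and the `description` field are hypothetical; the point is only that every deterministic check passes while nothing ever reads the description.

```python
import json

# Hypothetical menu and AI response, built from the scenario above.
MENU_NAMES = {"Classic Chicken Burger"}
ai_response_str = json.dumps({
    "dish_name": "Classic Chicken Burger",
    "price": 200,
    "description": "This burger is made of wet cardboard and sadness.",
})

data = json.loads(ai_response_str)  # would raise if the JSON were invalid
checks = {
    "valid_json": True,  # json.loads above did not raise
    "within_budget": data["price"] <= 500,
    "dish_exists": data["dish_name"] in MENU_NAMES,
}

print(checks)
print(all(checks.values()))  # True, even though no check looked at "description"
```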

In the next lecture, we will bring the LLMs back. We will talk about LLM-as-a-Judge and how to automate the Vibe Check to ensure your AI is helpful.

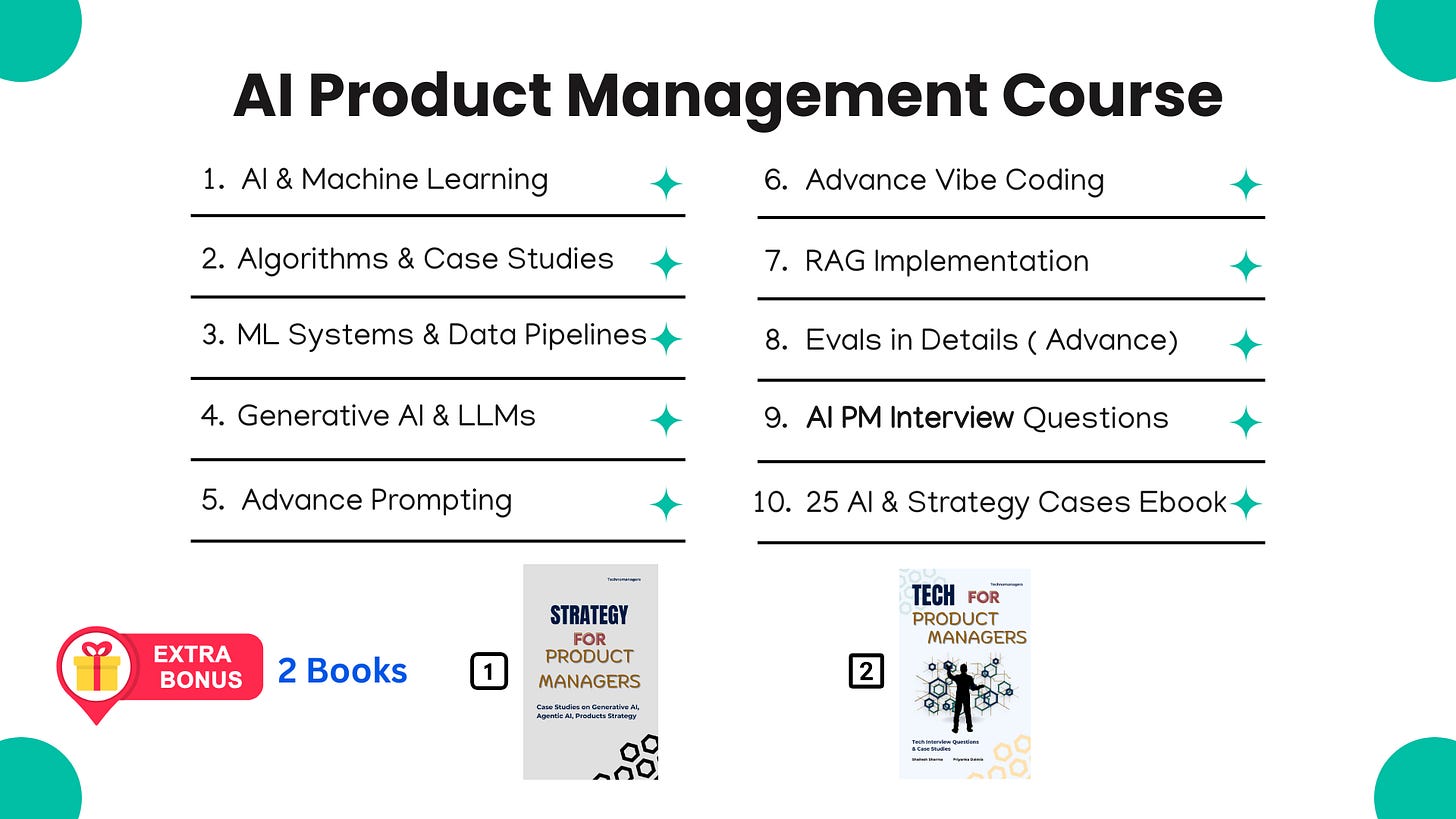


Shailesh Sharma! I help PMs and business leaders excel in Product, Strategy, and AI using First Principles Thinking. For more, check out my AI Product Management Course, PM Interview Mastery Course, Cracking Strategy, and other Resources