AI Evals - Part 3

Mastering LLM as Judge

In Part 2, we implemented Deterministic Evals. We built a Hard Gate in Python that catches any response that hallucinates a price, violates a JSON schema, or ignores a numerical constraint.

If an AI response fails these tests, it is blocked immediately.

However, passing the Hard Gate only proves that your product isn’t broken. It does not prove that your product is good.

A response can be factually correct (it passes the code checks) and still deliver a poor user experience.

Today, we focus on Probabilistic Evals, often called LLM-as-a-Judge.

This is how we scale the evaluation of subjective qualities like tone, empathy, and persuasiveness, which rule-based Python checks cannot measure.

The Limitation of Code

Let’s look at our Food Delivery use case.

User Query: I had a really long day. I need something comforting and warm.

AI Response A:

{"dish": "Dal Khichdi", "price": 180, "calories": 400}

Message: “The Dal Khichdi is available for 180 rupees.”

AI Response B:

{"dish": "Dal Khichdi", "price": 180, "calories": 400}

Message: “I hear you. Long days are tough! How about a steaming bowl of Dal Khichdi? It’s simple, buttery, and very comforting—like a warm hug.”

The Analysis:

Deterministic Eval: Both responses PASS. The price is correct in both.

User Experience: Response A is robotic. Response B converts the sale.

To measure the difference between A and B automatically, we cannot use IF statements. We need a system that understands language nuance.
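To make the limitation concrete, here is a minimal sketch of a deterministic check applied to both responses. The `MENU_PRICES` catalog and the `passes_hard_gate` function are hypothetical stand-ins for the Part 2 checks, not the exact code from that article.

```python
# Hypothetical price catalog; in a real system this would come from the menu database.
MENU_PRICES = {"Dal Khichdi": 180}

REQUIRED_FIELDS = {"dish", "price", "calories"}


def passes_hard_gate(payload: dict) -> bool:
    """Return True if the payload has the required fields and the correct price."""
    if not REQUIRED_FIELDS.issubset(payload):
        return False
    return MENU_PRICES.get(payload["dish"]) == payload["price"]


response_a = {
    "payload": {"dish": "Dal Khichdi", "price": 180, "calories": 400},
    "message": "The Dal Khichdi is available for 180 rupees.",
}
response_b = {
    "payload": {"dish": "Dal Khichdi", "price": 180, "calories": 400},
    "message": "I hear you. Long days are tough! How about a steaming bowl of Dal Khichdi?",
}

# Both responses pass: the gate checks facts and never looks at the tone of the message.
results = [passes_hard_gate(r["payload"]) for r in (response_a, response_b)]
print(results)  # [True, True]
```

The gate returns the same verdict for A and B, so it carries no signal about which message is more persuasive.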

The Solution: LLM-as-a-Judge

Since we cannot review thousands of logs manually, we hire a model to grade another model. We treat the Judge as a simulator for a human reviewer.

Step 1: The Rubric

The most common mistake when setting up an AI Judge is ambiguity.

If you ask GPT-4 "Is this response good?", it will default to being polite and give high scores.

You must provide a strict Rubric.

Example Rubric for Appetising Tone:

Score 1 (Robotic): Factual listing of ingredients only. No adjectives. Cold tone.

Score 3 (Average): Uses generic descriptors like good or tasty. Grammatically correct but uninspired.

Score 5 (Persuasive): Uses sensory language (e.g., crispy, aromatic, zesty). Explicitly connects the food features to the user’s stated need (e.g., comforting for a long day).
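One way to keep a rubric strict is to store it as data and render it into the judge prompt, so every evaluation run sees identical wording. The sketch below assumes a simple dict layout and a hypothetical `render_rubric` helper; the level descriptions are taken from the rubric above.

```python
# Rubric levels copied from the text above; the dict layout itself is an assumption.
APPETISING_TONE_RUBRIC = {
    1: "Robotic: Factual listing of ingredients only. No adjectives. Cold tone.",
    3: "Average: Uses generic descriptors like good or tasty. "
       "Grammatically correct but uninspired.",
    5: "Persuasive: Uses sensory language (e.g., crispy, aromatic, zesty). "
       "Explicitly connects the food features to the user's stated need.",
}


def render_rubric(name: str, levels: dict) -> str:
    """Format a rubric as plain text that can be pasted into a judge prompt."""
    lines = [f"Rubric for '{name}':"]
    for score in sorted(levels):
        lines.append(f"- Score {score}: {levels[score]}")
    return "\n".join(lines)


rubric_text = render_rubric("Appetising Tone", APPETISING_TONE_RUBRIC)
print(rubric_text)
```

Keeping the rubric in one place also makes it easy to version it alongside the scores it produced.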

Step 2: Chain-of-Thought Grading

We use a chain-of-thought grading technique popularized by G-Eval. We never ask the model for a score immediately. If you ask for a number first, the model commits to a score before it has examined the response, and any explanation that follows tends to rationalize that number.

We force the model to output a Reasoning Chain first.

The Prompt Structure:

Review the AI response based on the rubric above. First, write a step-by-step critique explaining which criteria were met and which were missed. Then, and only then, output the final score.
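A lightweight guard can confirm that the judge actually followed this order. The sketch below is an assumption about how one might enforce it: the `Reasoning:` and `Score:` labels and the `reasoning_comes_first` helper are illustrative and not part of any library.

```python
import re

# Wording adapted from the prompt structure above; the labels are assumptions.
GRADING_INSTRUCTIONS = (
    "Review the AI response based on the rubric above. "
    "First, write a step-by-step critique under the label 'Reasoning:'. "
    "Then, and only then, output the final score as 'Score: <integer>'."
)


def reasoning_comes_first(judge_output: str) -> bool:
    """Return True if the output contains a critique that appears before the score."""
    reasoning = re.search(r"Reasoning:", judge_output)
    score = re.search(r"Score:\s*\d+", judge_output)
    if reasoning is None or score is None:
        return False
    return reasoning.start() < score.start()


good = "Reasoning: The reply ignores the user's mood.\nScore: 1"
bad = "Score: 4\nReasoning: It sounded fine."
print(reasoning_comes_first(good), reasoning_comes_first(bad))  # True False
```

Outputs that fail this check can be retried or excluded from the averages, since their scores were produced without a preceding critique.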

The Hands-On Implementation

Here is the Python structure for an Automated Judge.

# Requires the openai Python SDK v1 or later.
from openai import OpenAI

# The client reads the API key from the OPENAI_API_KEY environment variable.
client = OpenAI()


def run_llm_judge(user_query, ai_response):
    """Ask the judge model for a reasoning paragraph followed by a score."""
    judge_prompt = f"""
You are a Senior Content Editor for a food delivery app.

User Input: "{user_query}"
AI Response: "{ai_response}"

Rubric for 'Empathy':
- 1: Ignores user's mood.
- 3: Acknowledges mood but generic recommendation.
- 5: Acknowledges mood warmly and tailors the pitch.

Task:
1. Analyze the response against the rubric.
2. Provide a 'Reasoning' paragraph.
3. Output a final integer 'Score'.
"""

    # Call the expensive model (GPT-4o)
    evaluation = client.chat.completions.create(
        model="gpt-4o",
        messages=[{"role": "system", "content": judge_prompt}],
    )
    return evaluation.choices[0].message.content


# Example Run
result = run_llm_judge("I had a bad day", "Here is a salad.")
print(result)

Typical Output:

Reasoning: The user expressed negative emotion (bad day). The AI ignored this completely and offered a salad, which is generally not considered comfort food. The tone was abrupt. Score: 1
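To track this on a dashboard, the integer has to be pulled out of the free-text output. Here is a minimal sketch that assumes the judge ends its answer with `Score: N` as in the output above; `extract_score` is a hypothetical helper name.

```python
import re
from typing import Optional


def extract_score(judge_output: str, low: int = 1, high: int = 5) -> Optional[int]:
    """Return the last 'Score: N' value in the text, or None if absent or out of range."""
    matches = re.findall(r"Score:\s*(\d+)", judge_output)
    if not matches:
        return None
    score = int(matches[-1])
    return score if low <= score <= high else None


sample = (
    "Reasoning: The user expressed negative emotion (bad day). "
    "The AI ignored this completely and offered a salad. Score: 1"
)
print(extract_score(sample))  # 1
```

Returning `None` for missing or out-of-range scores lets the caller skip malformed judgments instead of silently averaging bad numbers.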

Operational Strategy: Batch vs. Real-Time

Do not run this in real-time. Using GPT-4o to judge every single chat response adds a second model call to each reply, which can roughly double your latency and sharply increase your costs.

The Best Practice: Run this as an Offline Batch Job.

Log all chats during the day.

At night, sample 100 random chats.

Run the Judge script on this sample.

Review the Average Empathy Score on a dashboard the next morning.

This gives you the metric you need (Quality Tracking) without hurting the user experience (Latency).
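The nightly job described above can be sketched in a few lines. This is an illustration under assumptions: the log format (dicts with `user_query` and `ai_response` keys), the `nightly_empathy_average` function, and the `stub_judge` used to avoid a real API call are all hypothetical.

```python
import random
from statistics import mean
from typing import Callable, Optional


def nightly_empathy_average(
    chat_logs: list,
    judge: Callable[[str, str], Optional[int]],
    sample_size: int = 100,
    seed: Optional[int] = None,
) -> Optional[float]:
    """Sample chats at random, score each with the judge, and return the mean score."""
    rng = random.Random(seed)
    sample = rng.sample(chat_logs, min(sample_size, len(chat_logs)))
    scores = [judge(chat["user_query"], chat["ai_response"]) for chat in sample]
    valid = [s for s in scores if s is not None]  # skip chats the judge could not score
    return mean(valid) if valid else None


# Stub judge so the sketch runs without an API call; swap in the real judge in practice.
def stub_judge(user_query: str, ai_response: str) -> Optional[int]:
    return 5 if "hear you" in ai_response.lower() else 1


logs = [
    {"user_query": "I had a bad day", "ai_response": "Here is a salad."},
    {"user_query": "Long day", "ai_response": "I hear you. How about Dal Khichdi?"},
]
average = nightly_empathy_average(logs, stub_judge, seed=0)
print(average)
```

Passing the judge in as a function keeps the sampling and averaging logic testable without network access.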

In Part 4, we will tackle RAG Accuracy to solve the Confident Liar problem, where the AI gives a polite, structured, but factually wrong answer. You will learn the RAG Triad (Faithfulness, Context Precision, and Answer Relevance) to pinpoint whether your error lies in the search engine (retrieval) or the LLM (generation).

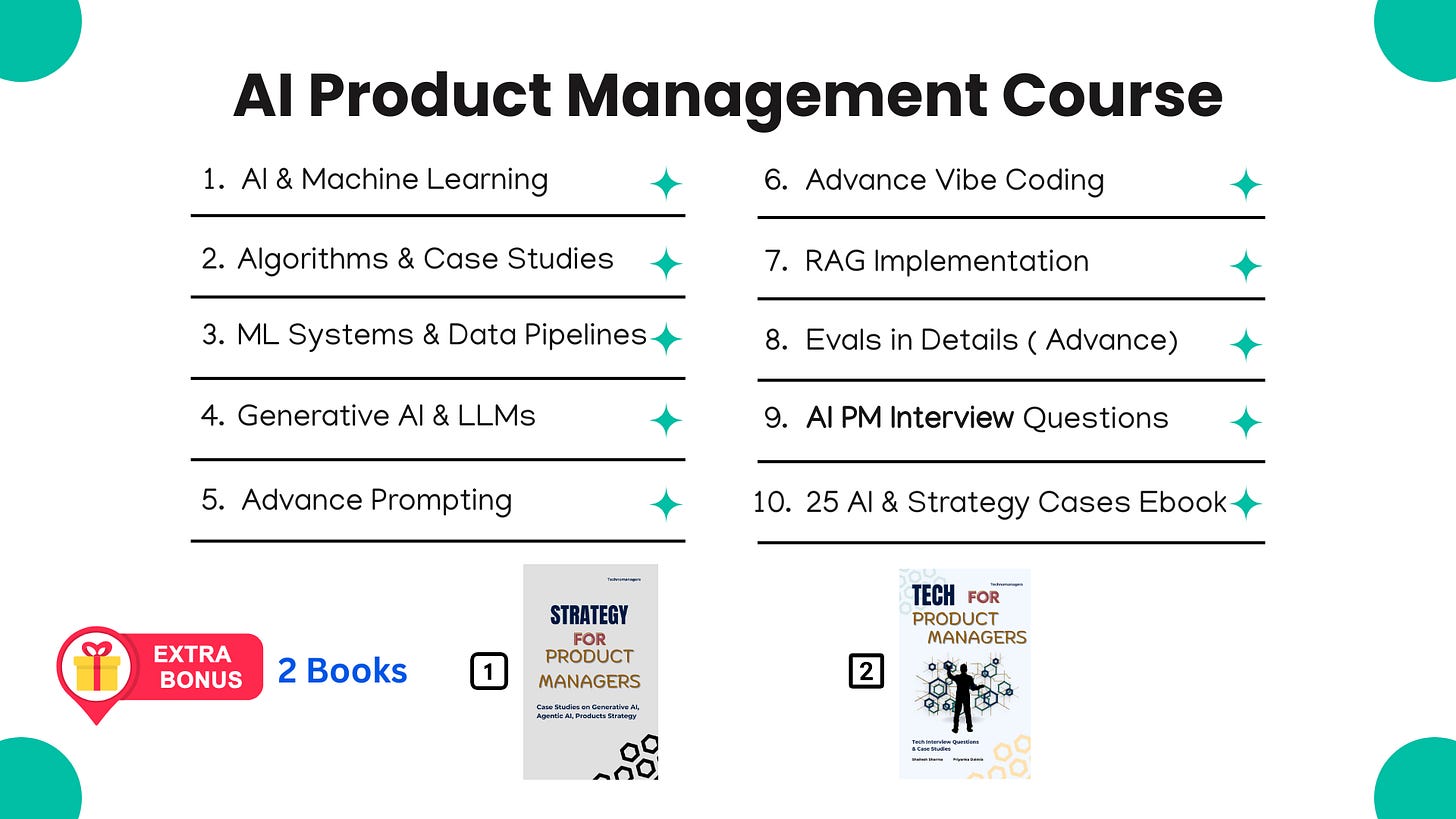

Shailesh Sharma! I help PMs and business leaders excel in Product, Strategy, and AI using First Principles Thinking. For more, check out my AI Product Management Course, PM Interview Mastery Course, Cracking Strategy, and other Resources