AI Evals - Part 4

Problem of Retrieval and Generation

Let’s quickly recap what we have built so far:

Part 1: We created a Golden Dataset

Part 2: We implemented Deterministic Evals to check prices and JSON structure

Part 3: We implemented an LLM as Judge to check tone and empathy

If your AI passes the Deterministic and LLM as Judge tests, it means the price is correct and the tone is polite. You might think you are ready for production.

You are not.

We have missed the most dangerous failure mode: Hallucination.

Let’s look at a critical scenario for our Food Delivery App.

The Scenario: You have a PDF menu for a restaurant called Truffles. The menu clearly states: Note: We use peanut oil in all our Burgers.

A user asks: I have a severe peanut allergy. Can I eat the curry at Truffles

The AI Answer:

Yes, absolutely! You can enjoy the Burger. It is completely peanut-free.

Why this is dangerous:

Deterministic Evals: PASS. The JSON is valid. The price is correct.

Probabilistic Evals ( LLM as Judge): PASS. The AI was very polite and helpful (Score 5/5).

The AI was polite, structured, and confident. But it was factually wrong. It ignored your own data.

If a user relies on this, it is a safety risk. This is a RAG (Retrieval Augmented Generation) Failure.

Today, we learn how to catch this.

Problem: Retrieval vs. Generation

When your AI answers a question based on your documents, two things must happen:

Retrieval: The system must find the correct page in the PDF (The Context).

Generation: The AI must read that page and answer truthfully

If the answer is wrong, we need to know which part failed. We use a framework called the RAG Triad.

Solution: The RAG Triad Metrics

To debug this, we measure three specific metrics.

1. Context Precision (Did we find the right data?)

Before the AI gives the answer, it searches your database.

The Test: The user asked about Peanuts. Did the system retrieve the paragraph about Peanut Oil? Or did it fetch a useless paragraph about Opening Hours?

Goal: The retrieved context must contain the answer.

2. Faithfulness (Did the AI stick to the facts?)

This is the most critical metric for safety. Are there any claims in the AI’s answer that cannot be found in the retrieved context?

The Rule: If the Context says “Contains Peanuts” and the AI says “Peanut-free,” the Faithfulness Score is 0.

3. Answer Relevance (Did it answer the question?)

Sometimes the AI retrieves the right data but answers a different question.

User: Is it safe for allergies?

AI: Truffles was founded in 19XX.

Result: Factually true, but irrelevant.

We cannot check Faithfulness with simple Python code. We need to use LLM-as-a-Judge again, but with a very specific prompt.

We feed the Judge three inputs:

User Question

AI Answer

Retrieved Context

Code for Implementation

import openai

# The Source Data (Retrieved from your database)

source_context = "Menu Text: We use peanut oil in all our dishes."

# The AI's Dangerous Answer

ai_answer = "You can eat here. The food is peanut-free."

def check_faithfulness(context, answer):

prompt = f"""

You are a Fact-Checking AI.

Your task is to compare the 'AI Answer' against the 'Source Context'.

Source Context: "{context}"

AI Answer: "{answer}"

Rule: If the AI makes ANY claim that contradicts the Source Context, return Score 0.

Output format:

Reasoning: [Explain the contradiction]

Score: [0 or 1]

"""

# Call GPT-4 to judge

response = openai.ChatCompletion.create(

model="gpt-4o",

messages=[{"role": "system", "content": prompt}]

)

return response.choices[0].message.content

print(check_faithfulness(source_context, ai_answer))

Output:

Reasoning: The AI claimed the food is “peanut-free.” The Source Context explicitly states “We use peanut oil.” This is a direct contradiction. Score: 0

The Workflow: You run this check in the background. If the Faithfulness Score is 0, you block the message and show a warning: “I cannot verify the ingredients right now. Please call the restaurant.”

Congrats you have now a good understand of the Evals, to go much deeper you can go over this

You can watch the complete Video here ( AI Evals )

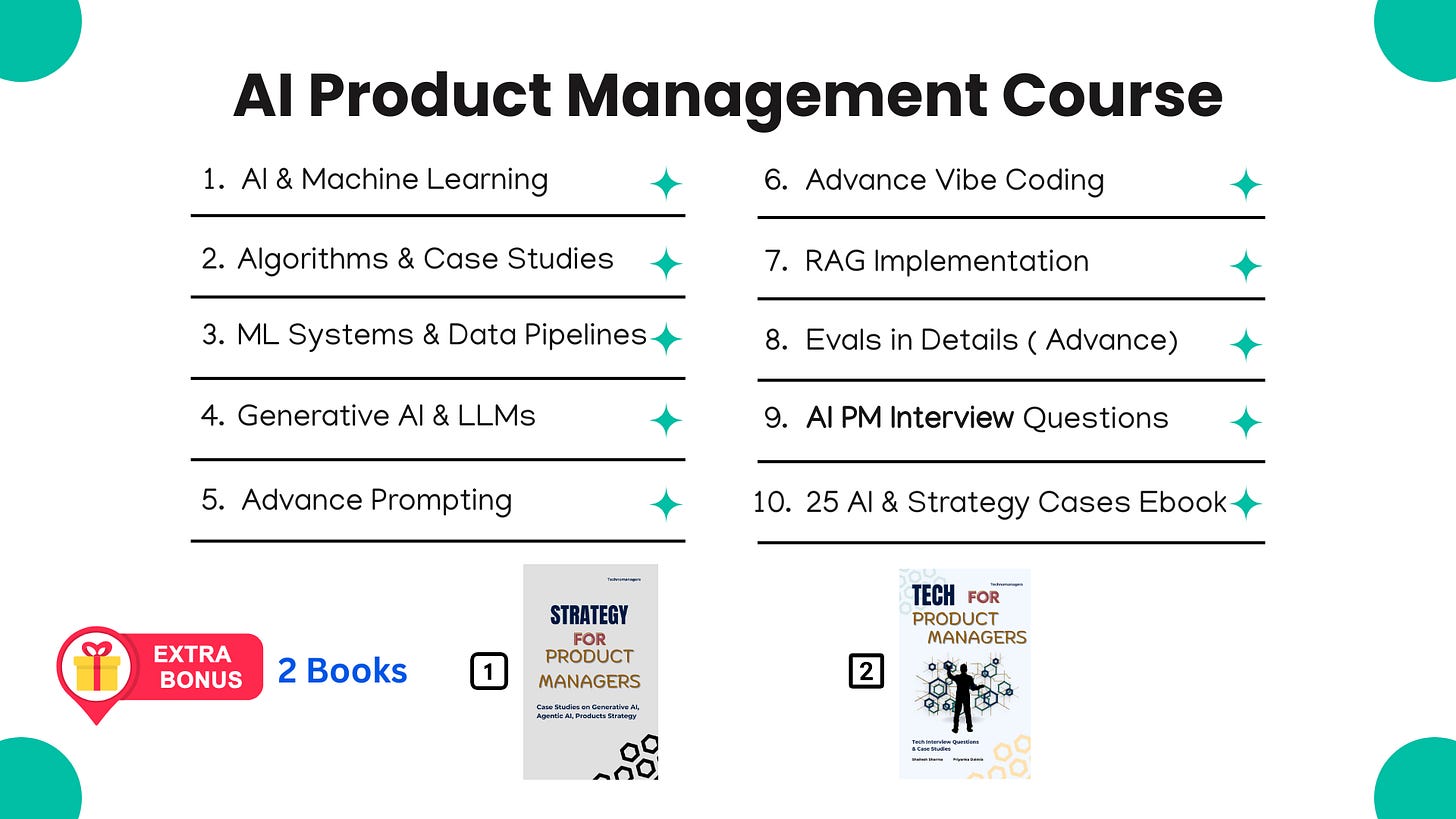

If you like this article, you will absolutely love our Course ( having real AI PM Interview Questions ( Details Below )

Most Detailed AI Product Management Course ( Along with AI PM Interview Questions )

For New Year, we are giving EXTRA 60% OFF on our AI PM Flagship Course for very limited Time

Coupon Code — NYE26 , Course Link - Click Here

Shailesh Sharma! I help PMs and business leaders excel in Product, Strategy, and AI using First Principles Thinking. For more, check out my AI Product Management Course, PM Interview Mastery Course, Cracking Strategy, and other Resources