AI Product Builder Roadmap 2026

The only roadmap you will need

The workflow nobody taught you.

I built technomanagers.in in one day.

Full product. Frontend, backend, course catalogue, question bank, deployment. One day.

Link: https://technomanagers.in/

I am not an engineer. I am a product manager.

This is what building looks like in 2026.

The tools have changed so fundamentally that the gap between idea and shipped product is no longer months. It is days. Sometimes hours.

In 2026, the PM who gets hired is the one who builds. Not manages. Builds.

This article walks through the full pipeline.

Problem discovery to launch. Every step uses tools that did not exist 18 months ago.

If you are a PM, engineer, or builder trying to ship an AI product this year, this is the roadmap.

Step 1. Spot the problem. Validate it fast.

Most AI products fail at step zero. They start with a technology and go looking for a problem: "We should build something with RAG." "We should make an agent." Backwards.

Start with the problem. But how you validate has changed.

Pick a domain you understand. Not "AI for healthcare." Something specific.

Radiologists spend 40 minutes per scan writing structured reports. Observable. Measurable. Worth solving.

Now, validate it in 30 minutes. Open Claude. Run a research session.

Sample prompt:

I am validating a product idea. The problem is that radiologists spend 40 minutes per scan writing structured reports. I need you to do the following. First, find 5 existing products that solve this problem today. Second, find evidence from radiology forums or medical publications that this pain point is real and widespread. Third, estimate the addressable market size using publicly available data on the number of radiologists and scans per year. Fourth, list three reasons this problem might not be worth solving.

That last line matters. You are not looking for confirmation. You are stress-testing the hypothesis.
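The market-size request in that prompt is plain arithmetic, so it is worth recomputing whatever estimate comes back. A minimal bottom-up sketch, assuming you would charge per scan. The function name and every input value are hypothetical placeholders, not real radiology figures.

```python
def bottom_up_market_size(num_radiologists: int,
                          scans_per_radiologist_per_year: int,
                          price_per_scan: float) -> float:
    """Annual addressable revenue = users x usage per user x price per unit of usage."""
    if num_radiologists < 0 or scans_per_radiologist_per_year < 0 or price_per_scan < 0:
        raise ValueError("inputs must be non-negative")
    return num_radiologists * scans_per_radiologist_per_year * price_per_scan


# Hypothetical placeholder inputs, NOT real market data: replace each one
# with a figure you can cite before it goes into the problem brief.
estimate = bottom_up_market_size(
    num_radiologists=10_000,
    scans_per_radiologist_per_year=5_000,
    price_per_scan=2.0,
)
print(f"${estimate:,.0f} per year")  # $100,000,000 per year
```

If the model's number is an order of magnitude away from your own multiplication, one of its inputs deserves a closer look.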

The output of this step is a one-page problem brief in markdown.

Four sections.

→ Problem statement.

→ Evidence it exists.

→ Who has it.

→ What they do today.

If you cannot fill that page with real evidence, move on.

Step 2. Competitor research with agents

This is where the 2026 flow diverges most from the old one.

Traditional competitor research meant 15 browser tabs, free trial signups, and a comparison spreadsheet built over two days.

Now you build a lightweight agent that does the data collection in hours.

Sample prompt for Claude with web search:

I am building an AI product for radiology report generation. Research the following competitors: Nuance DAX, Rad AI, DeepScribe, and Ambra Health. For each one extract the following. Target customer segment. Core AI capability. Pricing model if publicly available. Key differentiator. Weaknesses mentioned in user reviews. Present this as a structured comparison table.

The agent does not replace your analysis. It replaces the manual collection. You still look at the output and ask the hard questions.

Where are the gaps? Where are competitors overserving? Where is there a segment nobody is building for?

Save the output as competitor-matrix.md. It lives alongside your problem brief. Both are markdown. Both feed into the next step.
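If you ask the agent for structured output (say, one JSON object per competitor), a few lines of Python can turn it into competitor-matrix.md so every run produces the same layout. A sketch, assuming each competitor arrives as a dict keyed by the column names below. to_markdown_table and save_matrix are hypothetical helpers, and the placeholder row contains no real findings.

```python
from pathlib import Path

# Columns mirror the fields requested in the competitor prompt above.
COLUMNS = [
    "Competitor",
    "Target segment",
    "Core AI capability",
    "Pricing model",
    "Key differentiator",
    "Weaknesses from reviews",
]


def to_markdown_table(rows: list[dict[str, str]]) -> str:
    """Render one dict per competitor as a markdown table; missing fields become 'n/a'."""
    def cell(value: str) -> str:
        # A literal pipe would split the cell, so escape it.
        return value.replace("|", "\\|")

    lines = ["| " + " | ".join(COLUMNS) + " |", "|" + "---|" * len(COLUMNS)]
    for row in rows:
        lines.append("| " + " | ".join(cell(row.get(col, "n/a")) for col in COLUMNS) + " |")
    return "\n".join(lines) + "\n"


def save_matrix(rows: list[dict[str, str]], path: str = "competitor-matrix.md") -> None:
    """Write the table to disk so it sits next to the problem brief."""
    Path(path).write_text(to_markdown_table(rows), encoding="utf-8")


print(to_markdown_table([{"Competitor": "Competitor A", "Pricing model": "TBD"}]))
```

Missing fields show up as "n/a" instead of silently disappearing, which makes gaps in the agent's research visible.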

If you want to learn how to build these agent workflows from scratch, the Technomanagers AI PM course covers everything. 800+ students. 4.9 out of 5 rating.

Step 3. Talk to users. AI does not replace this.

You have a validated problem and a competitor landscape. Now talk to actual humans.

No agent replaces a 30-minute conversation with someone who has the problem you are solving. But how you prepare and synthesise has changed.

Before the call, generate a targeted research script.

Sample prompt:

Here is my problem brief [paste problem-brief.md]. Here is my competitor matrix [paste competitor-matrix.md]. Generate 10 user interview questions that specifically probe the gaps I identified in the competitor landscape. Focus on workflow pain points, current workarounds, and willingness to pay. Avoid generic questions.
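If you repeat this step after every new round of research, it helps to build the prompt from the files rather than pasting by hand. A sketch, assuming both files sit in the working directory. interview_prompt is a hypothetical helper, and sending the result to Claude is left out.

```python
from pathlib import Path

PROMPT_TEMPLATE = """Here is my problem brief:

{brief}

Here is my competitor matrix:

{matrix}

Generate 10 user interview questions that specifically probe the gaps I identified \
in the competitor landscape. Focus on workflow pain points, current workarounds, \
and willingness to pay. Avoid generic questions."""


def interview_prompt(brief_path: str = "problem-brief.md",
                     matrix_path: str = "competitor-matrix.md") -> str:
    """Fill the prompt with the current contents of both markdown files."""
    brief = Path(brief_path).read_text(encoding="utf-8").strip()
    matrix = Path(matrix_path).read_text(encoding="utf-8").strip()
    # str.format only substitutes into the template, so braces inside the files are safe.
    return PROMPT_TEMPLATE.format(brief=brief, matrix=matrix)
```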

After five conversations, paste all your notes into Claude.

Sample prompt:

Here are my notes from 5 user interviews about radiology report generation [paste notes]. Extract the following. Top 3 recurring pain points ranked by frequency. Contradictions between what users say they want and what they actually do. Unmet needs that no current competitor addresses. Willingness to pay signals.
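Claude's frequency ranking is easy to sanity-check if you also tag each interview yourself and count. A sketch, assuming each interview has been reduced to a list of short pain-point tags. top_pain_points and the tags are hypothetical.

```python
from collections import Counter


def top_pain_points(interviews: list[list[str]], n: int = 3) -> list[tuple[str, int]]:
    """Count how many interviews mention each pain point (once per interview).

    Ties keep the order in which pain points were first seen.
    """
    counts = Counter(tag for tags in interviews for tag in dict.fromkeys(tags))
    return counts.most_common(n)


interviews = [
    ["manual templating", "slow dictation"],
    ["slow dictation", "inconsistent terminology"],
    ["slow dictation", "manual templating"],
    ["inconsistent terminology"],
    ["slow dictation", "slow dictation"],  # repeated mentions count once
]
print(top_pain_points(interviews))
# [('slow dictation', 4), ('manual templating', 2), ('inconsistent terminology', 2)]
```

Counting interviews rather than mentions stops one talkative user from dominating the ranking.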

The key discipline is triangulation. User interviews say one thing. Competitor gaps say another. Usage data says a third. The truth is in the overlap.
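One way to make "the overlap" concrete is to tag each source with the same vocabulary of needs and intersect the sets. A sketch with hypothetical tags. In practice the hard part is agreeing on the tags, not the set arithmetic.

```python
def triangulate(interviews: set[str], competitor_gaps: set[str],
                usage_data: set[str]) -> dict[str, set[str]]:
    """Group needs by how many of the three evidence sources support them."""
    all_three = interviews & competitor_gaps & usage_data
    at_least_two = ((interviews & competitor_gaps)
                    | (interviews & usage_data)
                    | (competitor_gaps & usage_data))
    return {"all three": all_three, "exactly two": at_least_two - all_three}


# Hypothetical tags from each source.
result = triangulate(
    interviews={"faster drafting", "consistent terminology", "mobile access"},
    competitor_gaps={"faster drafting", "consistent terminology"},
    usage_data={"faster drafting", "mobile access"},
)
print(result["all three"])    # {'faster drafting'}
print(result["exactly two"])  # worth a closer look, not yet a conclusion
```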

Step 4. Write the spec in markdown

This step separates the 2026 builder from the traditional PM.

You do not write a 20-page PRD in Google Docs. You write a product spec in markdown. In Claude.

A markdown spec is machine-readable. It can be fed directly into Claude or Cursor to generate functional code. A Google Doc cannot.

The format of your spec determines the speed of your prototype. This is an architectural decision, not a style preference.

The spec has five sections.

Section 1. Problem and user.

One paragraph pulled from your problem brief.

Section 2. Core workflow.

The 3 to 5 steps the user takes to get value. Not features. Steps. User uploads a scan. System extracts findings. System generates a structured report. User reviews and edits. System learns from edits.

Section 3. Technical architecture.

What model. What retrieval strategy. Input and output formats. Where the data lives. If you cannot write this section, you do not understand your own product.

Section 4. Eval criteria.

How will you know if it works? Precision. Recall. Latency. Hallucination rate. Defined before you build.

Section 5. Out of scope.

What you are deliberately not building in v1. This section saves more time than any other.

Save it as product-spec.md.
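Because the spec is plain markdown, a script can read it. A sketch, assuming each of the five sections is written as a "## " heading in product-spec.md. It lists sections that are missing or still empty before you hand the file to Claude. split_sections and missing_or_empty are hypothetical helpers.

```python
import re

# Section titles from the spec format above, assumed to be "## " headings.
REQUIRED_SECTIONS = [
    "Problem and user",
    "Core workflow",
    "Technical architecture",
    "Eval criteria",
    "Out of scope",
]

HEADING = re.compile(r"^##\s+(.+?)\s*$")  # "## Title" but not "### Title"


def split_sections(markdown: str) -> dict[str, str]:
    """Map each '## ' heading to the text beneath it, up to the next '## ' heading."""
    sections: dict[str, list[str]] = {}
    current = None
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            current = match.group(1).rstrip(".:?")
            sections[current] = []
        elif current is not None:
            sections[current].append(line)
    return {title: "\n".join(lines).strip() for title, lines in sections.items()}


def missing_or_empty(markdown: str) -> list[str]:
    """Required sections that are absent or have no content yet."""
    sections = split_sections(markdown)
    return [name for name in REQUIRED_SECTIONS if not sections.get(name)]


spec = """# Radiology report generator
## Problem and user
Radiologists spend too long writing structured reports.
## Core workflow
User uploads a scan. System extracts findings.
## Technical architecture
## Eval criteria
Precision, recall, latency, hallucination rate.
"""
print(missing_or_empty(spec))  # ['Technical architecture', 'Out of scope']
```

The same parsing is what lets you paste a single section, such as Out of scope, into a follow-up prompt.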

Step 5. Build the prototype in Claude

Take your product-spec.md. Open Claude. Paste the entire spec.

Sample prompt:

Here is my product spec [paste product-spec.md]. Build a functional prototype of this application. Use React for the frontend. Use Python with FastAPI for the backend. For the RAG component, use a vector database with cosine similarity search. Generate the full codebase, including file structure, all components, API endpoints, and database schema. Make it deployable.
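It helps to know what "cosine similarity search" should compute before you review the generated retrieval code. A brute-force sketch over toy 2-D vectors. Real embeddings come from an embedding model and have hundreds of dimensions, and a vector database typically replaces the full scan with an approximate index. top_k and the chunk IDs are hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """cos(a, b) = (a . b) / (|a| * |b|); returns 0.0 if either vector is all zeros."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: list[float], chunks: dict[str, list[float]],
          k: int = 3) -> list[tuple[str, float]]:
    """Score every chunk against the query and keep the k most similar."""
    scored = [(chunk_id, cosine_similarity(query, vec)) for chunk_id, vec in chunks.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)[:k]


chunks = {"chunk-a": [1.0, 0.0], "chunk-b": [0.7, 0.7], "chunk-c": [0.0, 1.0]}
print([chunk_id for chunk_id, _ in top_k([1.0, 0.1], chunks, k=2)])  # ['chunk-a', 'chunk-b']
```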

Claude can generate a working application from a well-written spec. Not a mockup. Not a wireframe. A working product.

The quality of the prototype is a direct function of the quality of your spec. Vague spec, vague output. Precise spec, precise output.

This is where the .md format pays off.

Then you iterate.

Sample follow-up prompts:

Change the retrieval to a hybrid search combining keyword and semantic matching.

Add error handling for cases where the API returns empty results or the context window is exceeded.

Refactor the output to match this JSON schema [paste schema].
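There are several ways to implement the hybrid-search follow-up. One common, simple option (an assumption here, not something the spec dictates) is reciprocal rank fusion: run keyword and semantic search separately, then merge the ranked lists by summing 1 / (k + rank) for each document. A sketch, assuming both searches return document IDs best-first. k = 60 is the constant usually used with this method, and the IDs are hypothetical.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge best-first rankings: score(d) = sum over lists of 1 / (k + rank of d)."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties fall back to doc ID for a deterministic order.
    return sorted(scores, key=lambda d: (-scores[d], d))


keyword_hits = ["report-12", "report-07", "report-33"]   # e.g. from keyword search
semantic_hits = ["report-07", "report-41", "report-12"]  # e.g. from vector search
print(reciprocal_rank_fusion([keyword_hits, semantic_hits]))
# ['report-07', 'report-12', 'report-41', 'report-33']
```

Fusing ranks rather than raw scores avoids having to put keyword scores and cosine similarities on the same scale.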

Each iteration takes minutes. Within a few hours, you have something you can put in front of users. Not a deck. A working product.

Step 6. Build evals before you launch

Most AI products ship without evals. It is the single biggest mistake in AI product development.

Evals answer one question. Is the AI actually working?

For a RAG product, your metrics are Precision at K, Recall at K, and MRR (Mean Reciprocal Rank).

For generative output: hallucination rate, relevance, and coherence.

For an agent: task completion rate, step accuracy, and cost per task.
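The retrieval metrics are short enough to write out, which makes it easier to check an eval script Claude generates. A sketch, assuming each test query comes with a best-first list of retrieved chunk IDs and a hand-labelled set of relevant IDs. The IDs are hypothetical. Precision at K divides by K; Recall at K divides by the number of relevant items.

```python
def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Share of the top-k retrieved items that are relevant (denominator is k)."""
    return sum(1 for doc in retrieved[:k] if doc in relevant) / k


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Share of all relevant items that appear in the top k."""
    if not relevant:
        return 0.0
    return sum(1 for doc in retrieved[:k] if doc in relevant) / len(relevant)


def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / (1-based rank of the first relevant item), or 0.0 if none was retrieved."""
    for rank, doc in enumerate(retrieved, start=1):
        if doc in relevant:
            return 1.0 / rank
    return 0.0


def mean_reciprocal_rank(test_set: list[tuple[list[str], set[str]]]) -> float:
    """MRR: the reciprocal rank averaged over every query in the test set."""
    return sum(reciprocal_rank(r, rel) for r, rel in test_set) / len(test_set)


retrieved = ["c3", "c1", "c9", "c4", "c7"]   # best-first results for one query
relevant = {"c1", "c4", "c8"}                # hand-labelled ground truth
print(precision_at_k(retrieved, relevant, 5))  # 0.4
print(recall_at_k(retrieved, relevant, 5))     # 2 of 3 relevant chunks found
print(mean_reciprocal_rank([(retrieved, relevant), (["c8"], {"c8"})]))  # 0.75
```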

Define before launch. Automate. Set thresholds below which the product does not ship.
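A release gate can be a plain function that CI runs after the eval suite. A sketch; the metric names, thresholds, and results below are hypothetical, and yours should come from the eval criteria section of your spec.

```python
# Hypothetical thresholds: "min" metrics must be at least the limit,
# "max" metrics must be at most the limit.
THRESHOLDS = {
    "precision_at_5": ("min", 0.80),
    "recall_at_5": ("min", 0.70),
    "mrr": ("min", 0.75),
    "hallucination_rate": ("max", 0.02),
    "p95_latency_s": ("max", 2.0),
}


def release_blockers(metrics: dict[str, float]) -> list[str]:
    """Describe every metric that is missing or on the wrong side of its threshold."""
    blockers = []
    for name, (direction, limit) in THRESHOLDS.items():
        value = metrics.get(name)
        if value is None:
            blockers.append(f"{name}: not measured")
        elif direction == "min" and value < limit:
            blockers.append(f"{name}: {value} < {limit}")
        elif direction == "max" and value > limit:
            blockers.append(f"{name}: {value} > {limit}")
    return blockers


results = {"precision_at_5": 0.84, "recall_at_5": 0.66, "mrr": 0.81,
           "hallucination_rate": 0.01, "p95_latency_s": 1.4}
print(release_blockers(results))  # ['recall_at_5: 0.66 < 0.7']
```

In CI you would exit non-zero whenever the list is not empty. Treating an unmeasured metric as a blocker stops a broken eval run from passing by default.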

Sample prompt:

I have a RAG-based radiology report generator. Generate a Python evaluation script that tests the following. Precision at 5 for retrieved context chunks. Recall at 5 for relevant medical findings. Mean Reciprocal Rank for the top result. Hallucination detection by comparing generated text against source documents. Use a test set of 20 sample queries with known correct answers that I will provide.
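The hallucination check is the hardest part of that script to get right. Stronger approaches use an LLM judge or an entailment model. The cheapest baseline is lexical: flag generated sentences whose content words mostly do not appear in the source documents. A sketch of that baseline only, with a hypothetical stopword list, threshold, and toy report. It misses paraphrased errors and flags valid paraphrases, so treat it as a smoke test rather than a ship metric.

```python
import re

# Deliberately tiny, hypothetical stopword list. Negations such as "no" are kept
# as content words because they change clinical meaning.
STOPWORDS = {"a", "an", "the", "of", "in", "on", "at", "by", "for", "to", "and",
             "or", "is", "are", "was", "were", "there", "this", "that", "with"}

SENTENCE_SPLIT = re.compile(r"(?<=[.!?])\s+")


def content_words(text: str) -> set[str]:
    """Lowercased alphanumeric tokens, minus stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def sentences(text: str) -> list[str]:
    return [s for s in SENTENCE_SPLIT.split(text.strip()) if s]


def unsupported_sentences(generated: str, sources: list[str],
                          min_overlap: float = 0.6) -> list[str]:
    """Sentences whose content words are less than min_overlap covered by the sources."""
    source_vocab = set().union(*(content_words(s) for s in sources))
    flagged = []
    for sentence in sentences(generated):
        words = content_words(sentence)
        if words and len(words & source_vocab) / len(words) < min_overlap:
            flagged.append(sentence)
    return flagged


def hallucination_rate(generated: str, sources: list[str]) -> float:
    """Share of generated sentences flagged as unsupported (0.0 for empty output)."""
    total = len(sentences(generated))
    return len(unsupported_sentences(generated, sources)) / total if total else 0.0


sources = ["CT chest: 4 mm nodule in the right upper lobe. No pleural effusion."]
generated = "There is a 4 mm nodule in the right upper lobe. Findings suggest metastatic disease."
print(unsupported_sentences(generated, sources))  # ['Findings suggest metastatic disease.']
print(hallucination_rate(generated, sources))     # 0.5
```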

This separates a demo from a product.

Step 7. Production hardening

Prototype works. Evals pass. Now harden it.

Latency

If your pipeline takes 8 seconds, users leave. Target under 2 seconds. Optimise chunking, cache frequent queries, and pick the right model size.
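Caching frequent queries is the cheapest of the three latency fixes to try. A minimal in-process sketch using functools.lru_cache. answer_uncached is a hypothetical stand-in for your pipeline, and the sleep only simulates latency.

```python
import time
from functools import lru_cache


def answer_uncached(query: str) -> str:
    """Hypothetical stand-in for the full retrieve-then-generate pipeline."""
    time.sleep(0.2)  # simulated retrieval + model latency
    return f"report for: {query}"


@lru_cache(maxsize=1024)
def answer_cached(normalised_query: str) -> str:
    return answer_uncached(normalised_query)


def answer(query: str) -> str:
    # Normalise case and whitespace so trivially different phrasings share one entry.
    return answer_cached(" ".join(query.lower().split()))


for q in ["CT chest nodule", "ct  chest NODULE"]:
    start = time.perf_counter()
    answer(q)
    print(f"{q!r}: {time.perf_counter() - start:.3f}s")  # second call is served from cache
```

Only cache where the same input should always produce the same answer, and never let an entry cross between users or patients whose context differs. A multi-server deployment would need a shared cache rather than lru_cache.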

Cost

Every API call costs money. A prototype at 2 dollars per session is a burn rate, not a product. Find where smaller models work, where you can cache, and where you can batch.
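Cost per session is token arithmetic, so it is worth computing before choosing where a smaller model can take over. A sketch; the prices, token counts, and helper names are hypothetical, so substitute your provider's current rates and the token counts from your own logs.

```python
from dataclasses import dataclass


@dataclass
class ModelPrice:
    """Prices in dollars per million tokens."""
    input_per_m: float
    output_per_m: float


def call_cost(price: ModelPrice, input_tokens: int, output_tokens: int) -> float:
    return (input_tokens * price.input_per_m + output_tokens * price.output_per_m) / 1_000_000


def session_cost(price: ModelPrice, calls: list[tuple[int, int]]) -> float:
    """Sum the cost of every (input_tokens, output_tokens) call in one session."""
    return sum(call_cost(price, i, o) for i, o in calls)


# Hypothetical prices and traffic.
large = ModelPrice(input_per_m=15.0, output_per_m=75.0)
small = ModelPrice(input_per_m=0.8, output_per_m=4.0)
session = [(20_000, 1_500)] * 5  # five calls, each carrying 20k tokens of context
print(f"large: ${session_cost(large, session):.2f}, small: ${session_cost(small, session):.2f}")
# large: $2.06, small: $0.11
```

With these made-up numbers, five context-heavy calls on the larger model land near the 2-dollar session above. Most of that comes from input tokens, which is why trimming retrieved context and caching matter as much as model choice.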

Error handling

What happens when the model returns garbage? When retrieval finds nothing? When the API goes down? Every failure mode needs a graceful fallback.
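A graceful fallback can be a small wrapper: retry the primary path a few times, treat empty output as a failure, then degrade to something safe instead of showing an error. A sketch; with_fallback, the retry counts, and the fallback message are hypothetical choices.

```python
import logging
import time
from typing import Callable

logger = logging.getLogger("pipeline")

FALLBACK_MESSAGE = "We could not generate a draft report. Please try again or write it manually."


def with_fallback(primary: Callable[[str], str], fallback: Callable[[str], str],
                  retries: int = 2, backoff_s: float = 0.5) -> Callable[[str], str]:
    """Retry the primary path with exponential backoff, then degrade to the fallback."""
    def run(query: str) -> str:
        for attempt in range(retries + 1):
            try:
                result = primary(query)
                if result.strip():  # empty output is a failure, not an answer
                    return result
                logger.warning("empty result on attempt %d", attempt + 1)
            except Exception:  # broad on purpose: timeouts, outages, malformed responses
                logger.exception("primary failed on attempt %d", attempt + 1)
            if attempt < retries:
                time.sleep(backoff_s * 2 ** attempt)
        return fallback(query)
    return run


def flaky_model(query: str) -> str:
    raise TimeoutError("model API unavailable")  # simulated outage


safe_generate = with_fallback(flaky_model, lambda q: FALLBACK_MESSAGE, backoff_s=0.01)
print(safe_generate("CT chest"))  # prints FALLBACK_MESSAGE after three failed attempts
```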

Monitoring

Log inputs, outputs, latencies, and costs. Build dashboards that catch quality degradation before users notice.
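Dashboards are only as good as the logs behind them. One JSON line per model call is easy to ship to most log stores. A sketch using the standard logging module. The monitored decorator, the logger name, and the flat per-call cost are hypothetical. In practice you would compute cost from the token usage your API returns, and in a medical product you would redact patient data before logging inputs.

```python
import json
import logging
import time
from functools import wraps

log = logging.getLogger("llm-monitoring")
log.setLevel(logging.INFO)
log.addHandler(logging.StreamHandler())  # swap for your log shipper


def monitored(cost_per_call_usd: float):
    """Decorator: emit one JSON log line per call with input, output, latency and cost."""
    def decorator(fn):
        @wraps(fn)
        def wrapper(prompt: str) -> str:
            record = {"fn": fn.__name__, "input": prompt, "cost_usd": cost_per_call_usd}
            start = time.perf_counter()
            try:
                output = fn(prompt)
                record["output"] = output
                return output
            except Exception as exc:
                record["error"] = repr(exc)
                raise
            finally:  # runs on success and failure, so every call is logged
                record["latency_ms"] = round((time.perf_counter() - start) * 1000, 1)
                log.info(json.dumps(record))
        return wrapper
    return decorator


@monitored(cost_per_call_usd=0.01)
def generate_report(prompt: str) -> str:
    return "Draft report for: " + prompt  # stand-in for the real model call


generate_report("CT chest, 4 mm nodule")
```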

Can you actually do all of this?

Each step is learnable. Each step uses tools available right now.

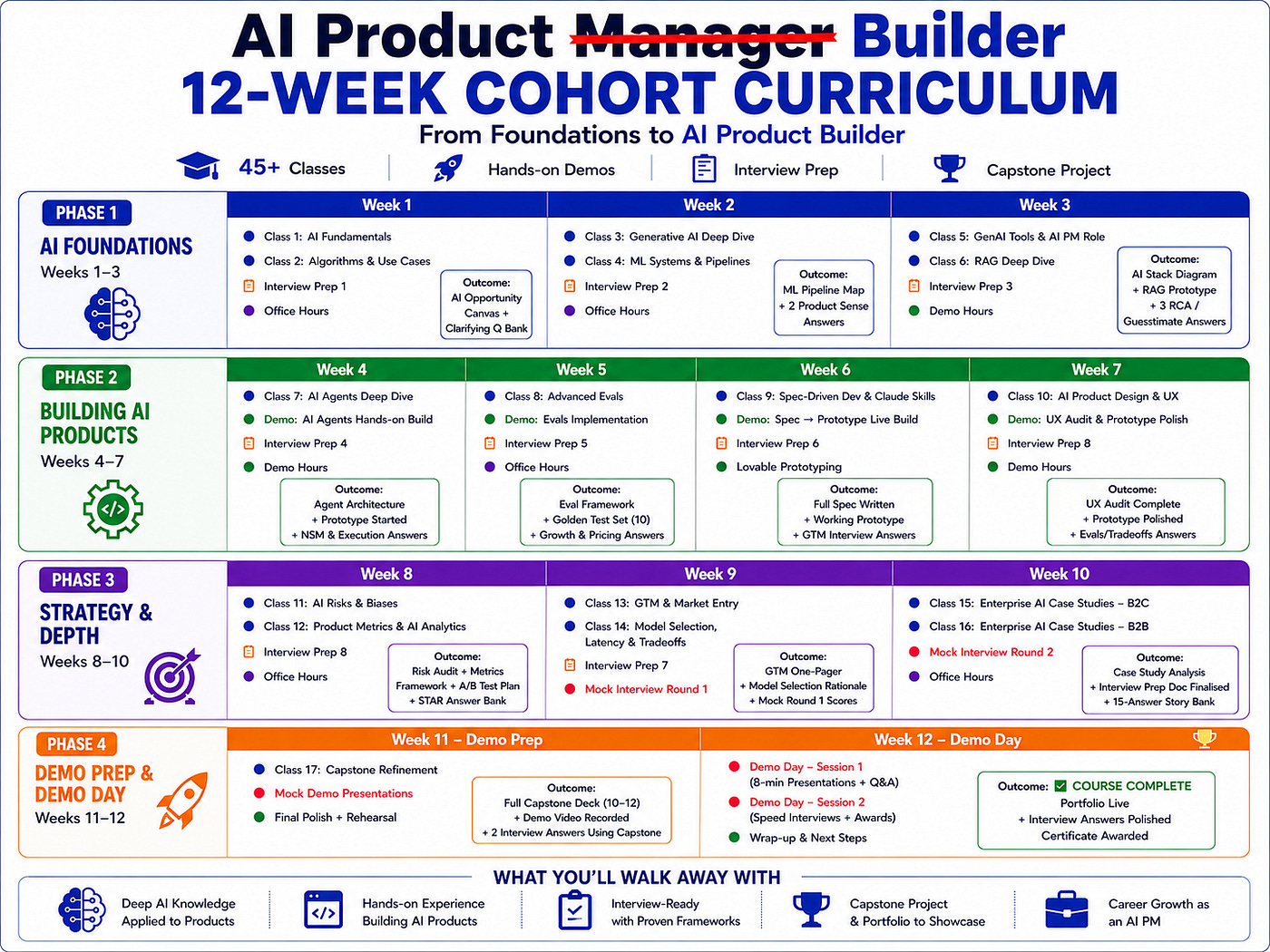

If you want to go from reading to shipping, the AI Product Builder Cohort is built for exactly this pipeline.

If you prefer self-paced learning, the Technomanagers AI PM course covers every concept here. 800+ students. 4.9 rating. It is the foundation the cohort builds on. See testimonials and course details.

About the Author

Shailesh Sharma. I help PMs and business leaders excel in Product, Strategy, and AI using First Principles Thinking. Weekly live webinars and masterclasses.