How Does Gen AI Work? AI for Product Managers

Be the FullStack Product Manager

In the last lecture, we learned what Generative AI is. In this lecture, we will learn how Generative AI works.

To give you a 10-second recap: Generative AI is the type of AI that learns the underlying patterns in its training data and generates new data, such as text, audio, video, or code.

But the big question is: how does it do that?

The answer lies in language modeling.

What is Language Modeling?

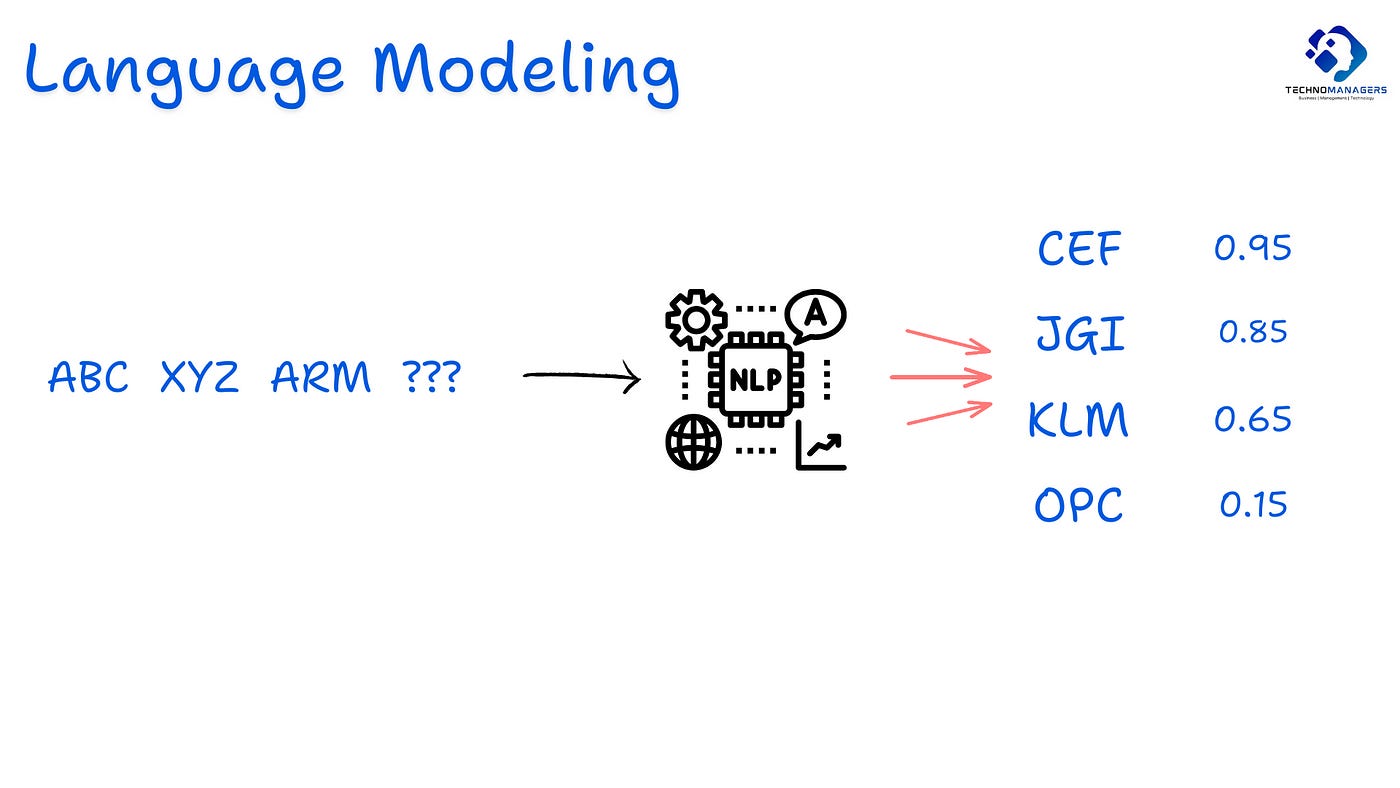

Language modeling is the task of predicting the next word in a sequence of words. It is a core concept in NLP (Natural Language Processing).

This task is performed by language models. Language models are trained on large amounts of data and predict the likelihood, or probability, of each word that could come next.
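For readers who like the math, the next-word idea can be written in standard notation (the symbols below are not from the lecture). Given the words $w_1, \dots, w_{t-1}$ seen so far, a language model estimates $P(w_t \mid w_1, \dots, w_{t-1})$, and the probability of a whole sequence is the product of these next-word probabilities (the chain rule of probability):

$$
P(w_1, w_2, \dots, w_n) = \prod_{t=1}^{n} P(w_t \mid w_1, \dots, w_{t-1})
$$

In the Virat Kohli example below, the first prediction corresponds to $P(\text{Greatest} \mid \text{Virat Kohli is the})$.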

Let’s understand this with the help of an example:

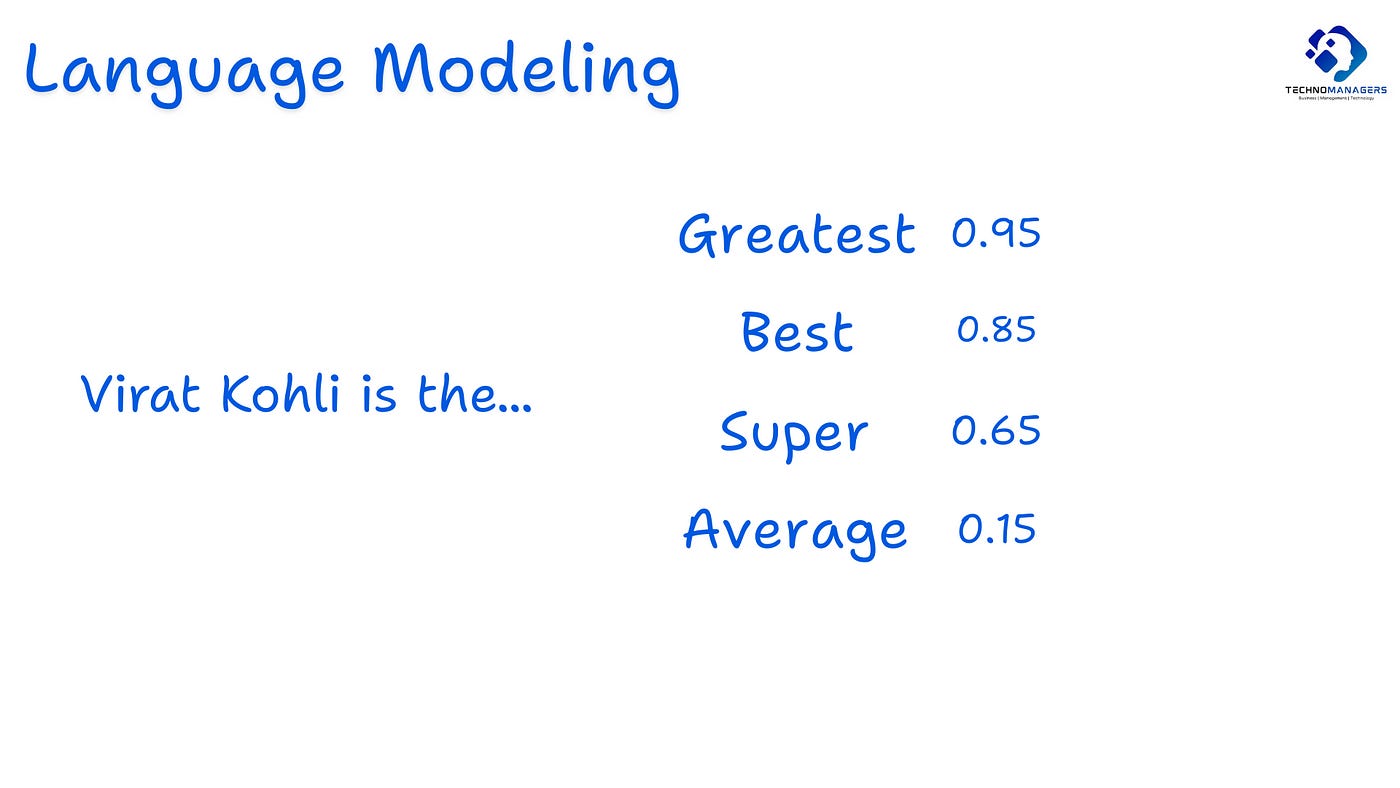

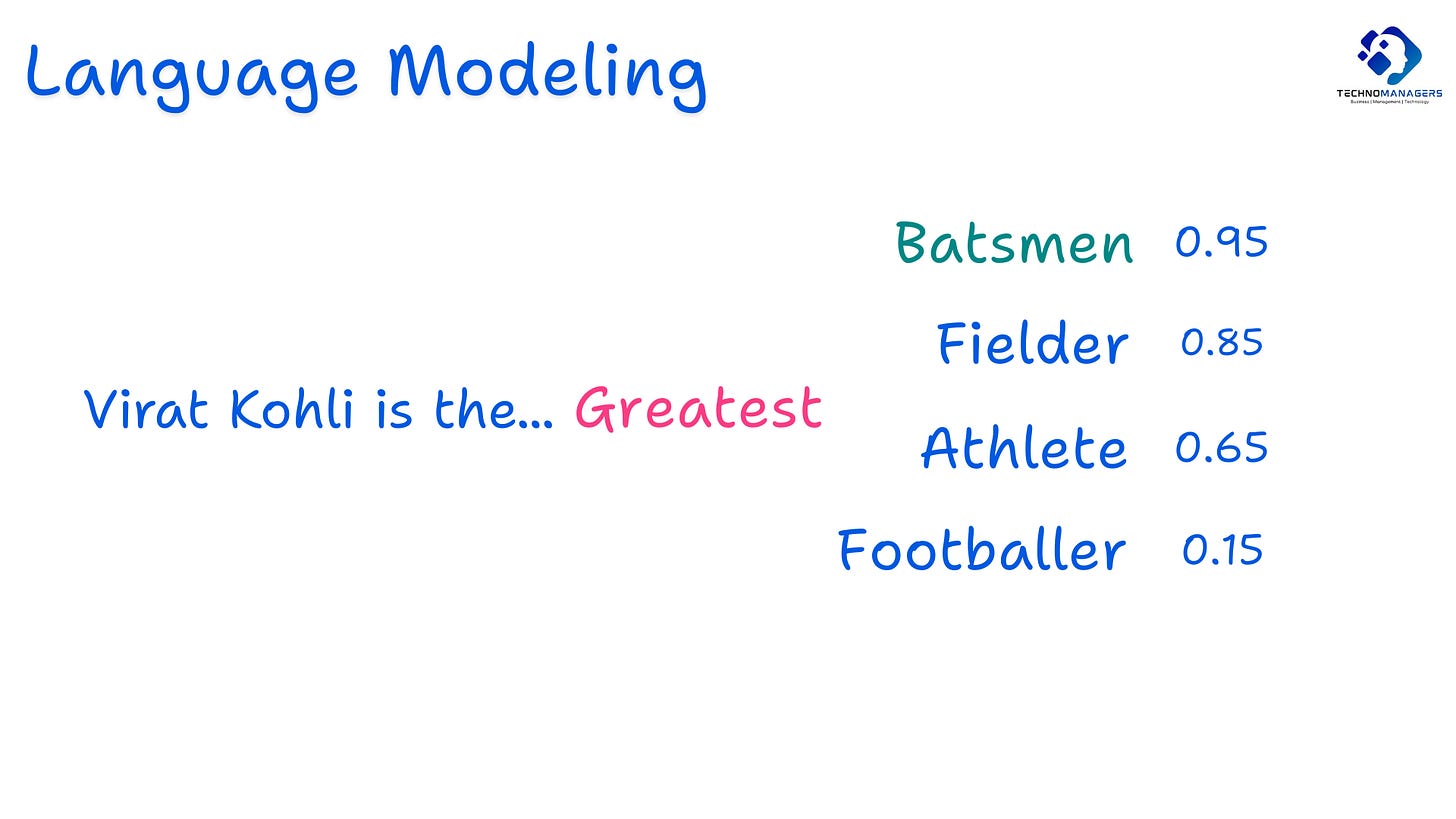

The input text is: “Virat Kohli is the …”

The language model predicts a set of words that could come next, along with the probability of each one occurring.

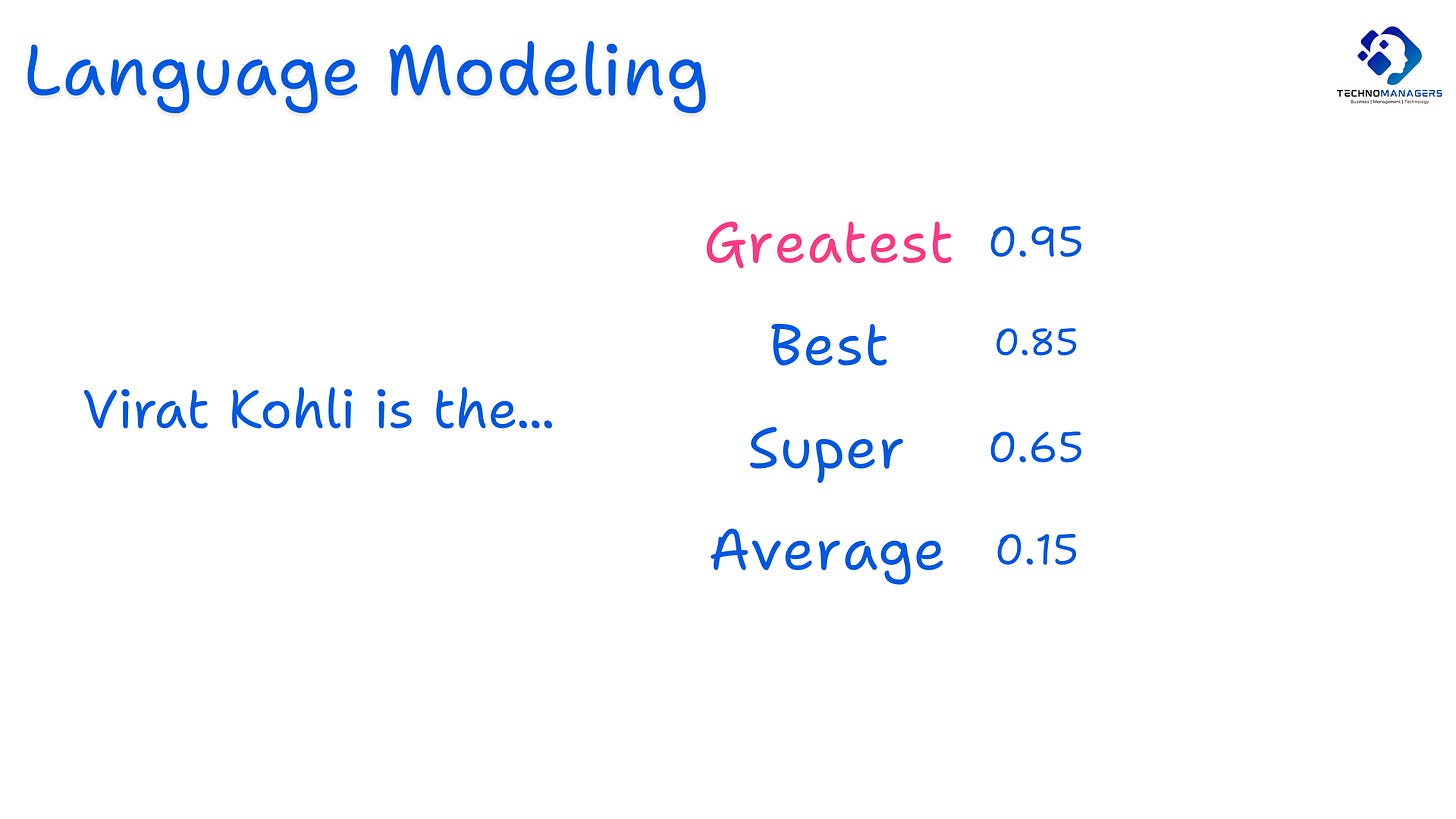

Let’s assume that, in this case, the language model predicts “Greatest” with a probability of 0.95, “Best” with a probability of 0.85, “Super” with a probability of 0.65, and “Average” with a probability of 0.15. (These numbers are illustrative; in a real model, the probabilities across all possible next words add up to 1.)

During training, a simple language model of this kind counts how often “Greatest” occurs together with “Virat Kohli” in a sentence and turns that count into a probability. It does this for every candidate word.
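The counting idea can be sketched as a bigram model, a simplified n-gram model that conditions only on the immediately preceding word rather than on “Virat Kohli” appearing anywhere in the sentence. The tiny corpus and the function name `train_bigram_model` are hypothetical, chosen only for illustration:

```python
from collections import Counter, defaultdict

# A tiny, made-up corpus standing in for real training data (hypothetical).
corpus = [
    "virat kohli is the greatest batsman",
    "virat kohli is the best fielder",
    "virat kohli is the greatest athlete",
    "he is the average player",
]


def train_bigram_model(sentences):
    """Count how often each word follows each previous word, then turn
    the counts into conditional probabilities P(next word | previous word)."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1

    model = {}
    for prev, next_counts in counts.items():
        total = sum(next_counts.values())
        model[prev] = {word: count / total for word, count in next_counts.items()}
    return model


model = train_bigram_model(corpus)
print(model["the"])  # {'greatest': 0.5, 'best': 0.25, 'average': 0.25}
```

Because each word's counts are divided by their total, the probabilities after any given word add up to 1.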

Here, “Greatest” has the highest probability, so we choose “Greatest”.
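Picking the top word takes only a few lines. This minimal sketch reuses the illustrative scores from the example. Dividing by their sum is a simple stand-in for how a real model produces a proper probability distribution (typically with a softmax over its whole vocabulary). Always taking the highest-probability word is known as greedy decoding:

```python
# Illustrative scores from the example (not real model outputs).
scores = {"Greatest": 0.95, "Best": 0.85, "Super": 0.65, "Average": 0.15}

# Rescale the scores so they sum to 1, as a probability distribution must.
total = sum(scores.values())
probs = {word: score / total for word, score in scores.items()}

# Greedy decoding: choose the word with the highest probability.
next_word = max(probs, key=probs.get)
print(next_word)  # Greatest
```

Rescaling does not change which word ranks first, so the choice is still “Greatest”. Many real systems sample from the distribution instead of always taking the top word, which makes their output less repetitive.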

Next, the combined output (“Virat Kohli is the Greatest”) is fed back into the language model. This time, the model predicts “Batsman” with a probability of 0.95, “Fielder” with a probability of 0.85, “Athlete” with a probability of 0.65, and “Footballer” with a probability of 0.15.

Here, “Batsman” has the highest probability.

So the next word is “Batsman”.

Imagine repeating this 1,000 times: you would end up with a complete paragraph about Virat Kohli.

ChatGPT produces its output in the same way. At its core, it repeats this next-word prediction step many times to build up a paragraph.
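The predict, append, and feed-back loop can be sketched as follows. `NEXT_WORD_TABLE` is a hypothetical lookup table standing in for a trained model, filled with the illustrative probabilities from the Virat Kohli example. A real LLM computes these probabilities for any text instead of looking them up:

```python
# Hypothetical stand-in for a trained model: maps the text so far to
# candidate next words and their illustrative probabilities.
NEXT_WORD_TABLE = {
    "Virat Kohli is the": {
        "Greatest": 0.95, "Best": 0.85, "Super": 0.65, "Average": 0.15,
    },
    "Virat Kohli is the Greatest": {
        "Batsman": 0.95, "Fielder": 0.85, "Athlete": 0.65, "Footballer": 0.15,
    },
}


def predict_next(text):
    """Return the most likely next word, or None if the toy table has no entry."""
    candidates = NEXT_WORD_TABLE.get(text)
    if not candidates:
        return None
    return max(candidates, key=candidates.get)


def generate(prompt, max_words=10):
    """Autoregressive generation: predict a word, append it, and feed the
    longer text back in, until no prediction is available or the limit is hit."""
    text = prompt
    for _ in range(max_words):
        word = predict_next(text)
        if word is None:
            break
        text = f"{text} {word}"
    return text


print(generate("Virat Kohli is the"))  # Virat Kohli is the Greatest Batsman
```

The loop runs until the toy table runs out of entries; a real model keeps going until it emits a stop signal or reaches a length limit.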

You might say, “Shailesh, this is not new; it has been around for a long time.”

Think of Google Autocomplete, the voice assistant Siri, or the word suggestions on your mobile keyboard while chatting with someone.

So what has changed? If you are asking this question, you are on the right track.

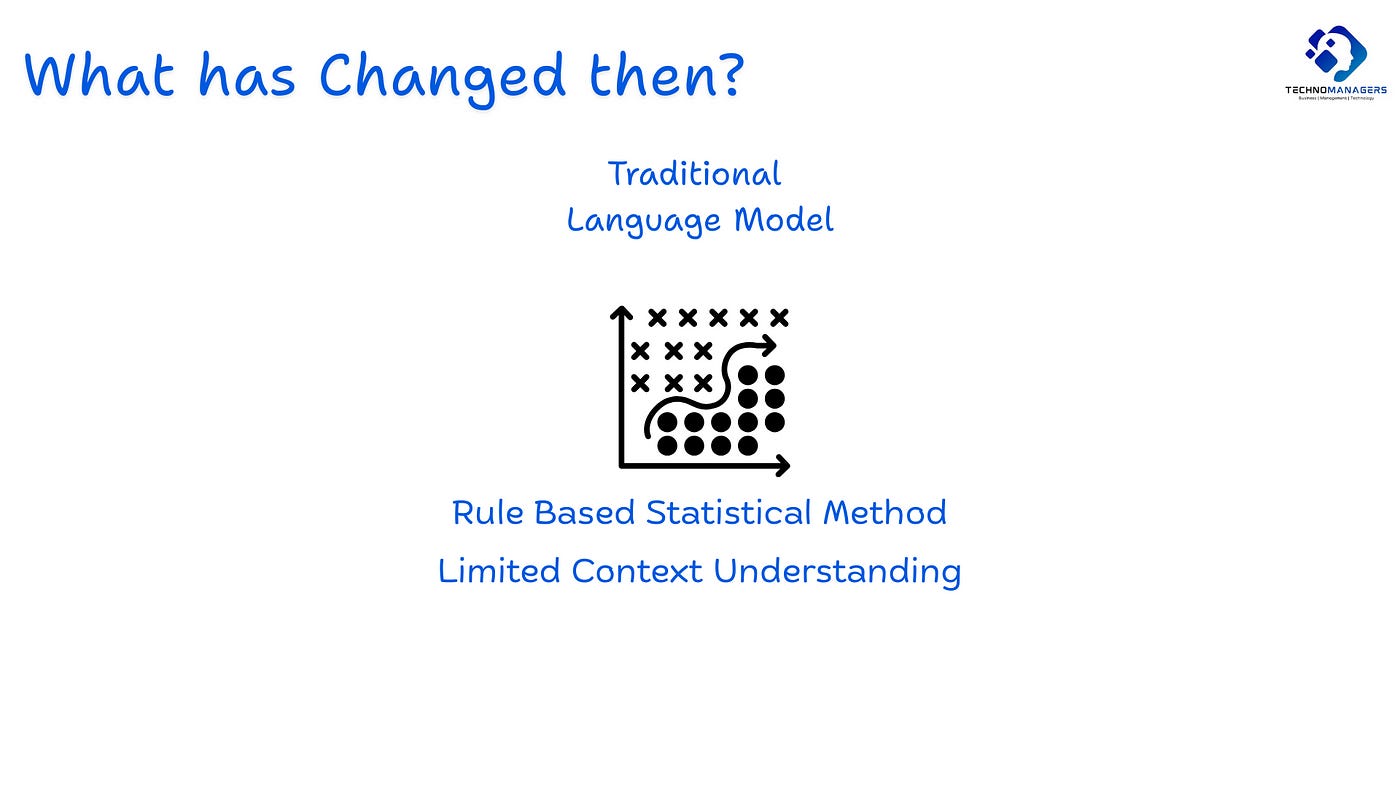

All of these were based on traditional language models, which count how often words occur together in sentences and make predictions based on those counts.

There is a fundamental problem with this approach. What is it?

The problem is that these models don’t retain context. They predict the next word based on the probability of sequences of n words (n-grams) appearing together in the training data, so they have only a limited understanding of the overall context and conversation. They simply predict.

They don’t truly understand the meaning of the sentence as a whole, which is why you may have seen some nonsensical suggestions from your keyboard while chatting.
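The context problem is easy to see in a small sketch. Below, a hypothetical bigram model (an n-gram model with n = 2) trained on a made-up three-sentence corpus looks only at the last word of the prompt, so it suggests “bank” even when earlier words such as “fishing” and “sat by” point to “river”:

```python
from collections import Counter, defaultdict

# A tiny, made-up corpus (hypothetical).
corpus = [
    "i deposited cash at the bank",
    "she withdrew cash from the bank",
    "we sat by the river",
]

# Bigram counts: how often each word follows the previous word.
follow_counts = defaultdict(Counter)
for sentence in corpus:
    words = sentence.split()
    for prev, nxt in zip(words, words[1:]):
        follow_counts[prev][nxt] += 1


def predict_next(prompt):
    """Predict from the LAST word of the prompt only; everything before it is ignored."""
    last_word = prompt.split()[-1]
    candidates = follow_counts.get(last_word)
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


print(predict_next("i need to deposit my salary at the"))  # bank
print(predict_next("we went fishing and sat by the"))      # bank, though "river" fits better
```

Both prompts end in “the”, so the model gives the same answer regardless of what came before. Retaining that earlier context is what LLMs improve on.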

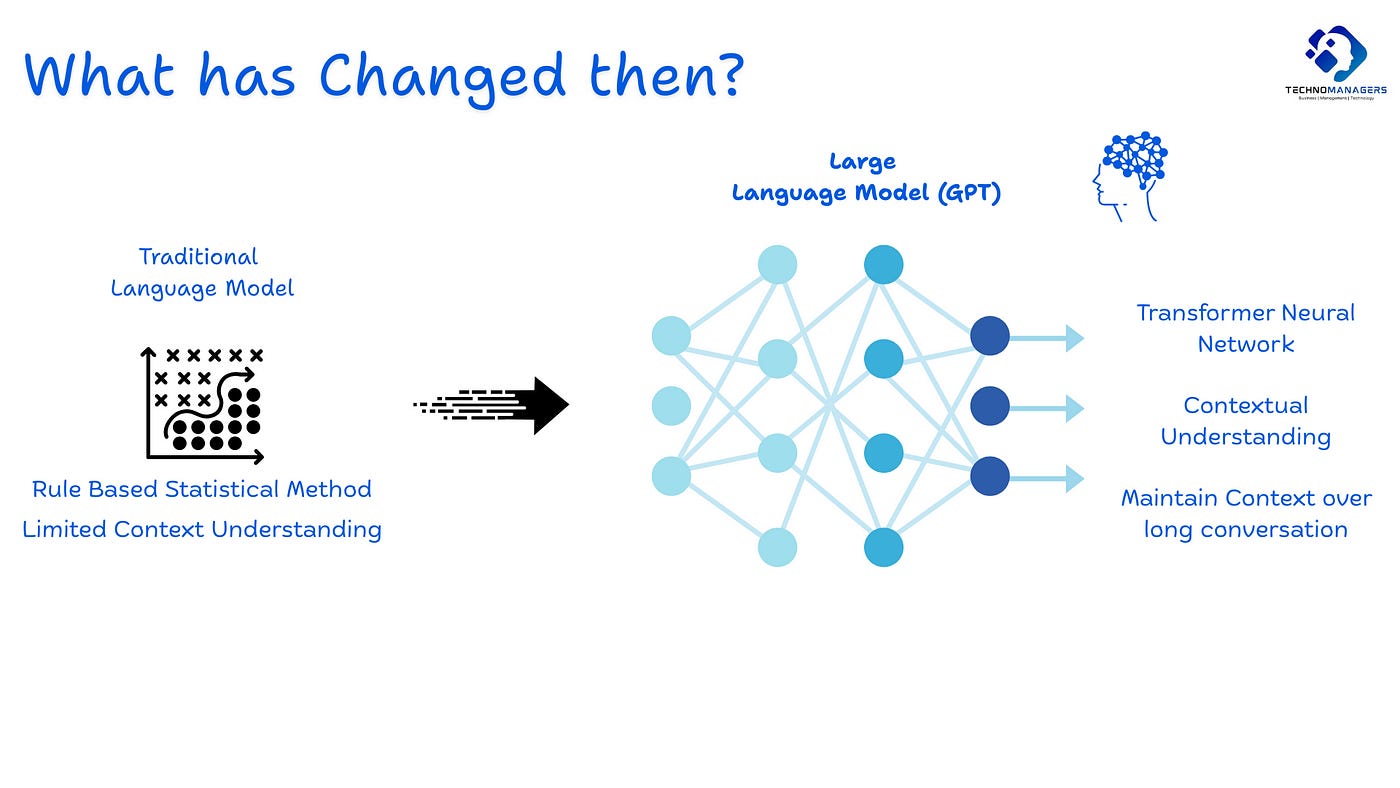

That’s where the field moved to more advanced Large Language Models (LLMs), which use neural networks loosely inspired by the human brain. LLMs are based on the transformer architecture, which we will learn about in future lectures. For now, just assume that this architecture helps the model retain context over long conversations and understand the complex, contextual nature of human language.

How Does Model Training Happen?

The first step is to train the model using unsupervised (more precisely, self-supervised) learning, in which the model learns the fundamental patterns and structures of language from vast amounts of unlabeled data.

The data can be text, images, videos, code, and so on.

This is the initial and most computationally intensive phase.

Here is how it works: imagine we have a complete paragraph. We mask out some of the words and ask the model to predict them. Based on how well it predicts them, the model’s parameters are tuned. (GPT-style models use a variant of this idea: they hide the next word and learn to predict it.)
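The masking idea turns raw text into training examples without any human labels. This is a minimal word-level sketch. The `[MASK]` token, the function name `make_masked_example`, and the 15% default rate are assumptions for illustration; real systems work on sub-word tokens and use their own masking rules:

```python
import random


def make_masked_example(paragraph, mask_prob=0.15, seed=0):
    """Hide a random subset of words behind a "[MASK]" token.

    Returns the masked text and a dict {position: original word} holding the
    answers the model would be trained to predict.
    """
    rng = random.Random(seed)  # fixed seed so the example is reproducible
    words = paragraph.split()
    masked_words, targets = [], {}
    for i, word in enumerate(words):
        if rng.random() < mask_prob:
            masked_words.append("[MASK]")
            targets[i] = word
        else:
            masked_words.append(word)
    return " ".join(masked_words), targets


masked_text, answers = make_masked_example(
    "Virat Kohli is the greatest batsman of his generation", mask_prob=0.3
)
print(masked_text)
print(answers)
```

The model sees `masked_text`, guesses the hidden words, and its parameters are adjusted whenever its guesses differ from `answers`. Because the answers come from the text itself, no human labeling is required.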

After the first step, the model has a general understanding of language.

The second step teaches the model to perform specific downstream tasks.

For example, to train the model on summarization, we feed it pieces of text along with their summaries.

In this step, we show the model how to summarize, how to translate, how to do sentiment analysis, and so on.
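The data for this step can be pictured as a list of input and output pairs. This is a minimal sketch assuming a hypothetical prompt template and made-up examples; the only point is that every example shows the model an input together with the answer we want it to learn:

```python
# Hypothetical labelled examples: each pairs an input with the desired output.
examples = [
    {
        "task": "Summarize",
        "input": "The meeting covered Q3 revenue, the hiring plan, and the launch date.",
        "output": "Q3 revenue, hiring, and the launch date were discussed.",
    },
    {"task": "Translate to French", "input": "Good morning", "output": "Bonjour"},
    {"task": "Classify sentiment", "input": "I love this phone!", "output": "positive"},
]


def to_training_pair(example):
    """Format one labelled example as (prompt, target).

    During fine-tuning, the model sees `prompt` and is trained to produce `target`.
    The prompt template here is an assumption, not a standard format.
    """
    prompt = f"{example['task']}:\n{example['input']}\nAnswer:"
    return prompt, example["output"]


training_pairs = [to_training_pair(ex) for ex in examples]
for prompt, target in training_pairs:
    print(prompt, "->", target)
```

Mixing many such tasks in one dataset is what lets a single model learn to summarize, translate, and classify sentiment.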

At this point, the model knows the basics of language and understands specific tasks.

The final step is to train the model using human feedback (Reinforcement Learning from Human Feedback, or RLHF).

This is used to fine-tune Large Language Models (LLMs) to better align with human preferences and instructions.

Human evaluators rank or rate the generated responses based on factors like helpfulness, accuracy, coherence, and harmlessness.

In essence, the third step acts as a feedback loop that teaches the LLM what humans consider good responses, making it more aligned with human values and preferences.
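One common ingredient of the human-feedback step can be sketched in code: converting human rankings into pairwise preferences and measuring how well a reward model agrees with them. This is a simplified sketch. The function names and scores are hypothetical, and the later reinforcement-learning stage, which uses the reward model to update the LLM, is not shown:

```python
import math
from itertools import combinations


def ranking_to_pairs(ranked_responses):
    """Turn a human ranking (best response first) into (preferred, rejected) pairs."""
    return list(combinations(ranked_responses, 2))


def pairwise_loss(score_preferred, score_rejected):
    """Pairwise (Bradley-Terry style) loss: -log(sigmoid(preferred - rejected)).

    Small when the reward model already scores the preferred response higher,
    large when it gets the order wrong. Written as a numerically stable softplus.
    """
    margin = score_preferred - score_rejected
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


pairs = ranking_to_pairs(["answer A", "answer B", "answer C"])
print(pairs)  # [('answer A', 'answer B'), ('answer A', 'answer C'), ('answer B', 'answer C')]

# Hypothetical reward-model scores for a preferred and a rejected response.
print(round(pairwise_loss(2.0, 0.0), 3))  # 0.127 (correct order, small loss)
print(round(pairwise_loss(0.0, 2.0), 3))  # 2.127 (wrong order, larger loss)
```

A reward model trained to minimize this loss learns to score responses the way human evaluators ranked them, and that score is then used to steer the LLM toward preferred answers.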

Now your model is ready. It understands text, summarization, coding, and more. It also knows what a bear looks like, what spectacles look like, and what cheesecake looks like.

So if you give the model a prompt like “Create an image of a bear wearing spectacles and eating cheesecake,” it will be able to generate that image.

I hope you now have a good sense of how Gen AI works.

Don’t miss out on the new way of cracking PM Interviews

Apply first-principles thinking, and you can crack the PM interview like the top 1% of candidates.

This approach has helped 3,000+ candidates land their dream jobs.

Resources to Download

Strategy for Product Managers, Leaders, Everyone ( Download Here )

PM Interview Mastery Course (Crack Interviews Like the Top 1%): First Principle Thinking (10 Video Lectures)

Technomanagers

More about PM Interview questions and Mock Interviews | YouTube | Website | Tech & Strategy Newsletter

To understand more tech concepts and machine learning case studies, download our Tech for Product Manager guide, which covers concepts and case studies on Artificial Intelligence.