How Session-Based RNNs Predict Your Next Swipe on TikTok

TikTok’s Rabbit Hole?

There is a comfortable lie in Product Management.

If you have enough historical data on a user, you know exactly what they want. You build your collaborative filtering models. You map out their lifetime preferences. You assume your algorithm is bulletproof.

Then you look at TikTok.

And you realise historical data is often a trap.

If your AI relies on what a user did yesterday, it fails to understand what they crave right now. In platforms where intent shifts by the minute, historical profiling is dead. You need to predict the immediate future based on the immediate past.

This is the first principle breakdown of how to build the Rabbit Hole effect. We will move from traditional recommendation engines to Session-Based Recommendations using Recurrent Neural Networks.

If you are preparing for AI PM interviews, this is one of the most important system design concepts you can learn. We teach this and many more real interview scenarios in our course.

What is TikTok, Really?

From a product architecture standpoint, TikTok is not a social network. It is an AI-driven bipartite matching engine.

It does not care who your friends are. It does not care what you followed last month. It cares about one thing. Matching an infinite supply of highly fragmented content with highly volatile human attention.

The moment you open the app, you are a blank slate. Not because TikTok lacks your data, but because your data from yesterday is almost useless for predicting what you want in the next 30 seconds.

This is already a fundamentally different product philosophy from Instagram or YouTube. Those platforms are built on the social graph. TikTok is built on the interest graph. And the interest graph is rebuilt from scratch every single session.

The Strategic Bet: Kill the Social Graph

This is the part most PMs miss entirely.

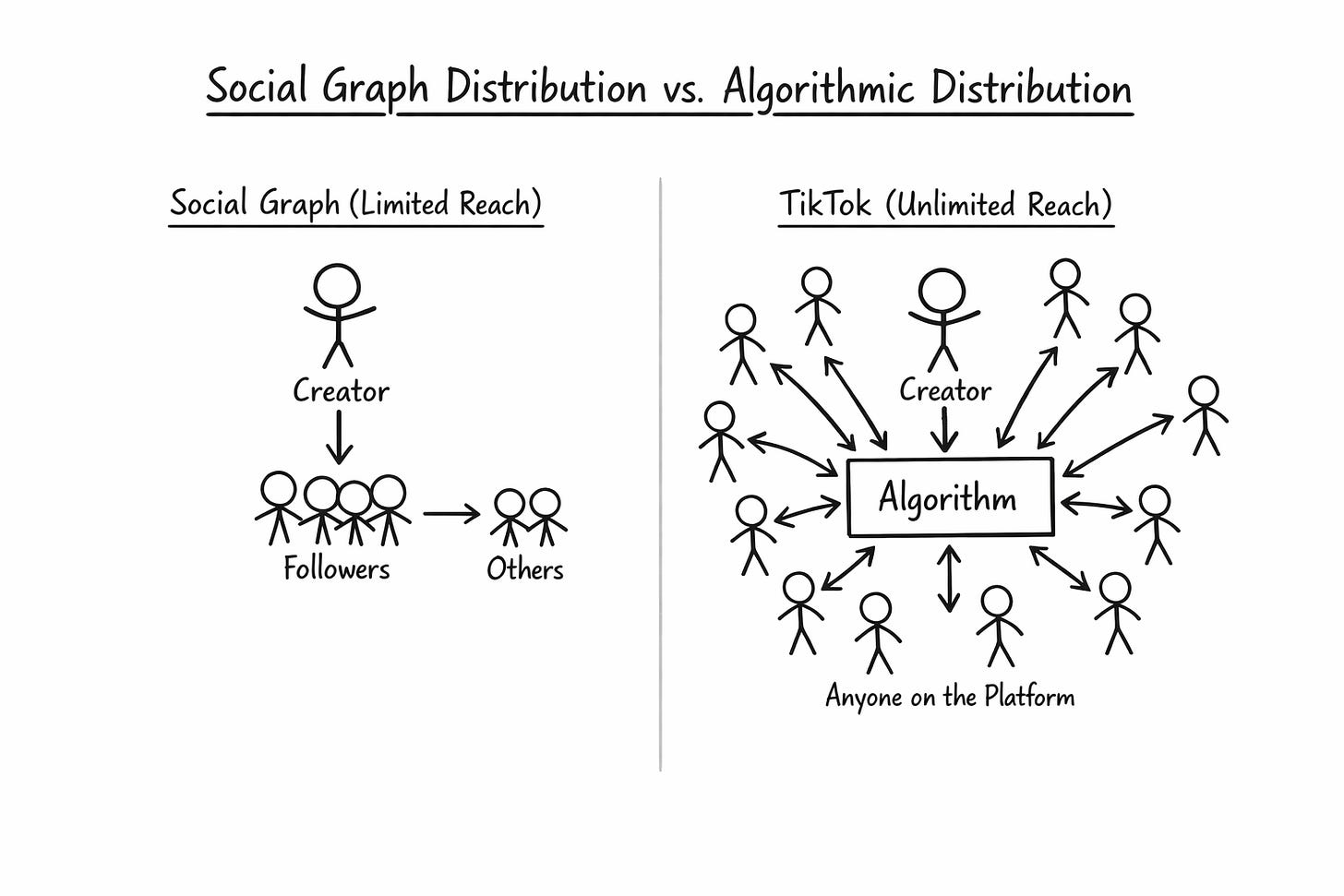

TikTok did not just build a better recommendation engine. It made a strategic decision to remove the social graph as the primary distribution mechanism.

On Instagram, your content reaches your followers first. Then the algorithm decides whether to push it further. On YouTube, subscribers see your videos first. Then the algorithm takes over.

On TikTok, follower count is almost irrelevant for distribution. The algorithm decides who sees what, independent of social connections. A creator with 200 followers can get 10 million views on a single video if the algorithm detects high engagement in the first few hundred impressions.

Why does this matter strategically?

Because it means TikTok does not need network effects to retain users. Traditional social platforms are sticky because your friends are there. You cannot leave Instagram because your social circle is on Instagram. That is a network effect moat.

TikTok replaced network effects with algorithmic effects. You do not stay on TikTok because your friends are there. You stay because the algorithm understands you better than any other platform. The algorithm itself is the moat.

This is why TikTok’s valuation swings wildly depending on whether the recommendation algorithm is included in the deal. Reports from the US sale negotiations showed that TikTok, without its algorithm, could be worth as little as 40 billion dollars. TikTok with its algorithm is worth closer to 200 billion dollars.

The algorithm is not a feature. It is the entire business.

The User Behaviour Problem

On traditional platforms like Netflix or Amazon, a user’s session is slow. They search. They read reviews. They watch a two-hour movie. You have time to understand them.

On TikTok, user behaviour is chaotic.

Users do not explicitly tell you what they like. They signal it through micro-actions. A two-second linger. A rapid swipe. A share. Finishing a 15-second loop twice. These are all implicit signals.

A user might open the app wanting comedy. Three swipes later, they see a video about fixing a sink. Suddenly, their intent shifts entirely to DIY home repair.

And here is the hardest part. Even if a user is logged in, every time they open the app, their current emotional state is essentially a cold start. They might have had a bad day. They might be bored. They might be curious about something they have never explored before.

Historical profiles cannot capture this. Only the current session can.

The Problem Statement

How do we accurately predict and serve the next piece of content to a user when their current intent is unknown, rapidly changing, and largely divorced from their long-term preferences?

If the system relies on long-term data, it will continue to show comedy even after the user has mentally shifted to DIY. The algorithm will feel clunky. Out of touch.

This is not a theoretical problem. This directly hits the business.

The Metrics Framework: Three Layers

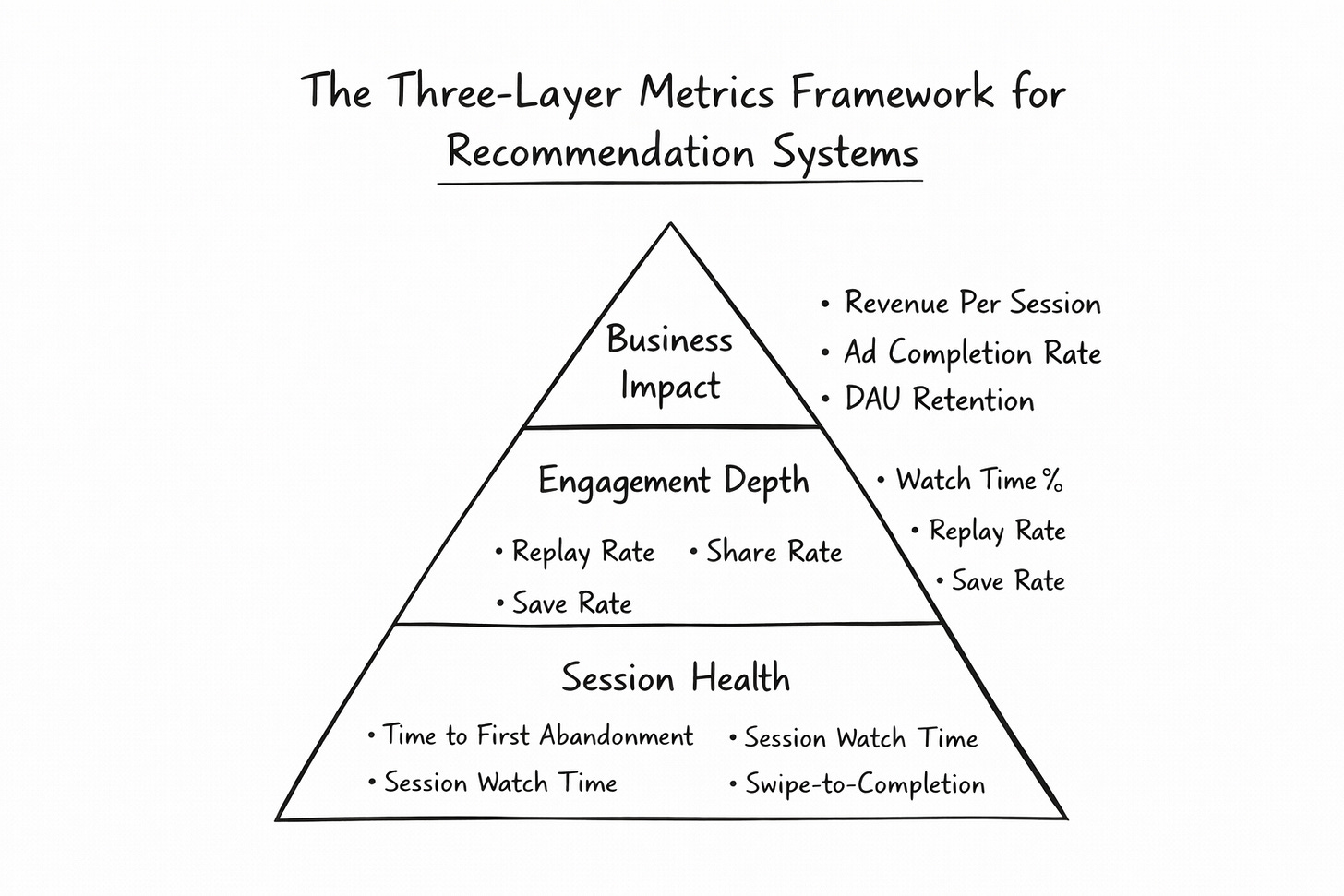

Before we solve it, we must measure the pain. And we need to measure it at three distinct layers. Most PMs only think about one layer. That is a mistake.

Layer 1: Session Health Metrics (Does the user stay?)

Time to First Abandonment tells you how many videos a user swipes through before killing the session. If this number is low, users are leaving before the algorithm has adapted to them. Think of it as the “cold start tax.” How many bad recommendations does the user tolerate before leaving?

Session Watch Time is the total minutes spent in a continuous app session. TikTok’s average is commonly reported at around 95 minutes per day across multiple sessions. If a single session averages less than 8 to 10 minutes, the recommendation engine is leaking users.

Swipe-to-Completion Ratio compares videos skipped within three seconds against videos watched to 80% or more completion. Too many skips per completion means the system is serving the wrong content. A healthy For You feed should see more than 40% of videos watched to completion within the first 10 videos of a session.
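To make the skip and completion definitions concrete, here is a minimal sketch. The `View` record and the function name are hypothetical. The three-second and 80% thresholds come from the text above.

```python
from dataclasses import dataclass

@dataclass
class View:
    watched_seconds: float
    video_seconds: float

def skip_and_completion_rates(views, skip_seconds=3.0, completion_fraction=0.8):
    """Return (skip_rate, completion_rate) for one session's views."""
    if not views:
        return 0.0, 0.0
    skips = completions = 0
    for v in views:
        if v.watched_seconds >= completion_fraction * v.video_seconds:
            completions += 1          # watched to 80% or more
        elif v.watched_seconds < skip_seconds:
            skips += 1                # abandoned within three seconds
    return skips / len(views), completions / len(views)

# Toy session: (seconds watched, video length in seconds)
session = [View(1.5, 20), View(18, 20), View(2.0, 30), View(15, 15), View(9, 30)]
skip_rate, completion_rate = skip_and_completion_rates(session)
print(skip_rate, completion_rate)
```

In this toy session two of five videos are skipped and two are completed. The fifth view is neither, which is why it helps to track both rates rather than a single number.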

Layer 2: Engagement Depth Metrics (Does the user care?)

Not all engagement is equal. TikTok’s algorithm weights signals differently because some signals are more honest than others.

Watch Time Percentage is the strongest signal. A user who watches 95% of a 60-second video has expressed genuine interest. They did not tap a button. They gave you their attention. Attention is the most expensive thing a human can give.

Replay Rate tracks how many users watch a video more than once. This is a signal that the content was not just good but worth revisiting. Replays are weighted heavily because they are almost impossible to fake.

Share Rate is even more telling. A user who shares a video is doing free distribution work for TikTok. They are putting their social capital on the line by recommending content to their friends. This is the highest intent signal after a purchase.

Save Rate means the user wants to come back to this content later. This is a forward-looking intent signal that most platforms underweight.

Comment Sentiment is trickier. A comment can be positive, negative, or neutral. Raw comment counts are misleading. TikTok’s system analyses whether comments indicate genuine engagement or hate-watching. Both drive views, but only positive engagement drives long-term session health.

Layer 3: Business Impact Metrics (Does the algorithm make money?)

Revenue Per Session connects recommendation quality directly to dollars. If the algorithm serves better content, users stay longer, see more ads, and revenue per session increases.

Ad Completion Rate measures whether users watch the ads placed between videos. If the surrounding content is relevant and engaging, users are in a positive attention state and more likely to watch an ad through. If the content is poor, users are already in “skip mode” and will reflexively skip the ad too.

DAU Retention at Day 1, Day 7, and Day 30 tells you whether the recommendation quality is good enough to bring users back. A single great session means nothing if the user does not return tomorrow. This is the ultimate test.

Most PMs stop at Layer 1. The best AI PMs connect all three layers into a single causal chain. Better recommendations lead to better session health, which leads to deeper engagement, which leads to higher ad revenue and retention.

Why Traditional Recommendation Methods Fail Here

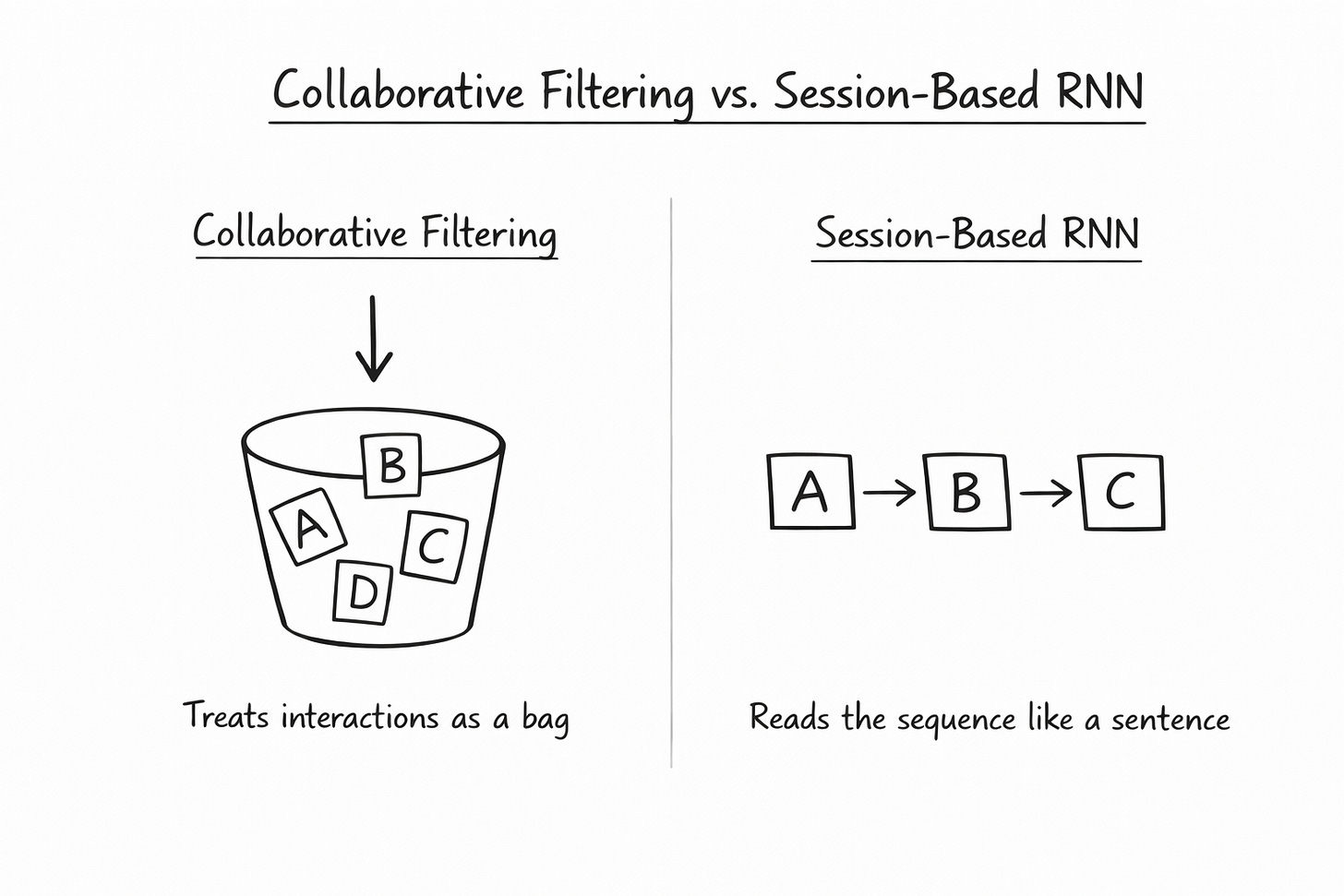

Most PMs are familiar with Collaborative Filtering, most often implemented with Matrix Factorisation.

It works like this. Users who liked video A also liked video B. So if you liked A, the system recommends B.

This approach has powered Amazon and Netflix for years. But it fails catastrophically on TikTok.

The reason is simple. It ignores the sequence of actions.

If you watch Video A, then Video B, then Video C, the order in which you watched them contains massive contextual clues about your shifting intent. Matrix Factorisation treats them as a disorganised bucket of likes. It does not know that C came after B. It does not know that the transition from A to B was a signal.
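A tiny sketch shows the information that gets lost. The video IDs and helper names are made up for illustration. Two sessions with opposite intent shifts look identical once you throw away the order.

```python
from collections import Counter

def bag_of_items(session):
    """Order-free view of a session: which videos, how many times."""
    return Counter(session)

def transitions(session):
    """Ordered view: consecutive (previous, next) pairs."""
    return list(zip(session, session[1:]))

comedy_to_diy = ["comedy_1", "comedy_2", "diy_sink"]
diy_to_comedy = ["diy_sink", "comedy_2", "comedy_1"]

same_bag = bag_of_items(comedy_to_diy) == bag_of_items(diy_to_comedy)
same_order = transitions(comedy_to_diy) == transitions(diy_to_comedy)
print(same_bag, same_order)
```

The bags match, the transitions do not. A model that only sees the bag cannot tell a user drifting into DIY from a user drifting out of it.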

Sequence matters. And for sequence, you need a fundamentally different architecture.

Why RNN is the Way Forward

This is where the paradigm shift happens.

Recurrent Neural Networks, and specifically session-based architectures like GRU4Rec (Gated Recurrent Units for Recommendations), are designed for sequential data.

Think of it this way. An RNN treats a user’s session like a sentence. If you read the words “I want to eat an...” your brain predicts “apple.” An RNN does the same thing with user actions. It looks at the strict chronological sequence of the last 10 swipes and uses that sequential memory to predict the 11th.

This is fundamentally different from collaborative filtering. The RNN does not ask “what did users like you enjoy?” It asks “given the exact order of what you just did, what should come next?”

The PM Requirements Before Any Code is Written

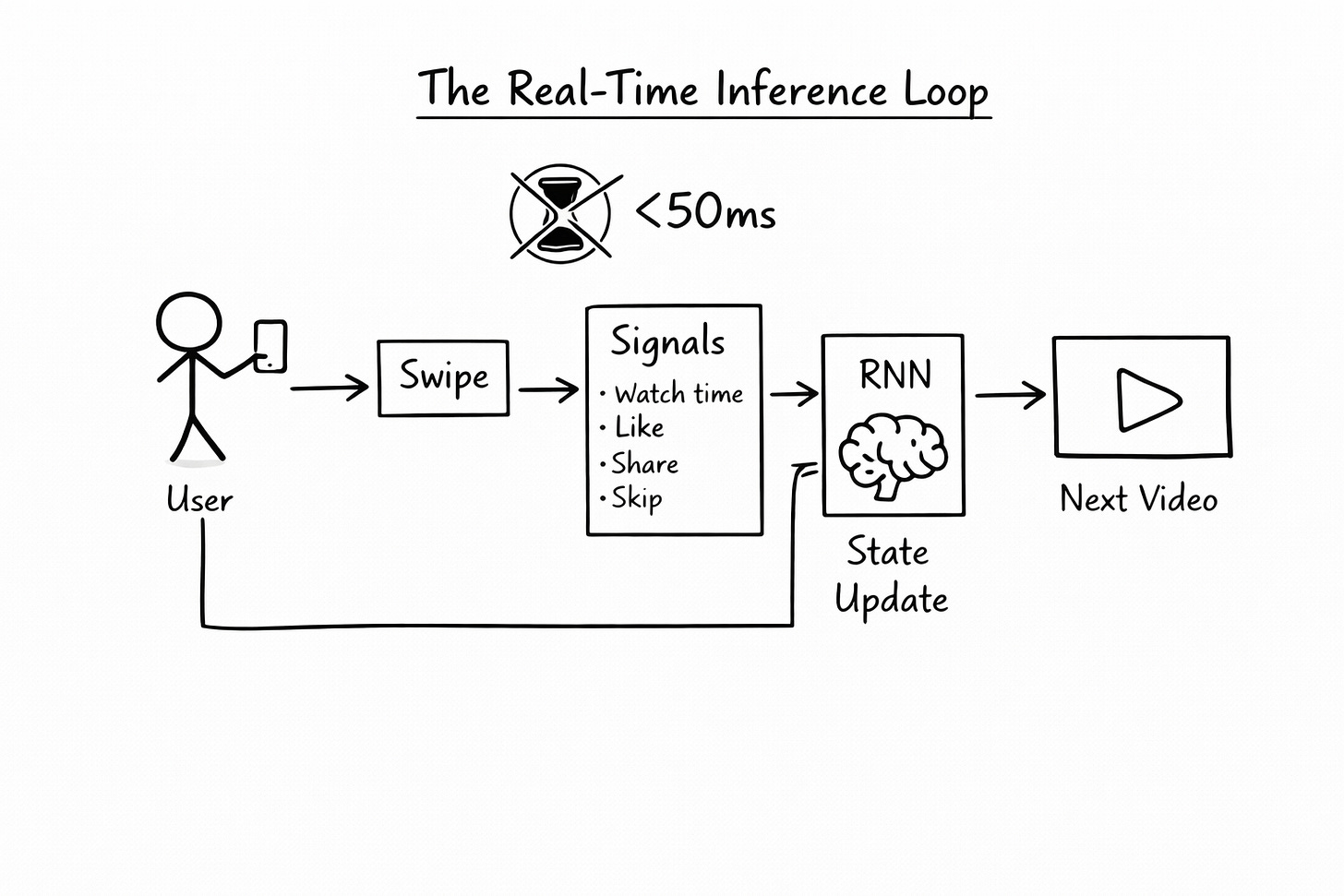

Before the ML engineers write a single line of code, the AI PM must define the constraints. If you fail here, the model will be a theoretical success and a production disaster.

There are three critical requirements.

First is latency. The model must run online inference. When a user swipes, the RNN must update its state and fetch the next video in under 50 milliseconds. If inference takes 200ms, the user sees a loading spinner. On TikTok, a loading spinner is death.

Second is defining a session. Is a session defined by 30 minutes of inactivity? Or is it defined by a hard app close? This seems like a small decision but it fundamentally changes how the model trains. Usually, a 30-minute inactivity threshold works best.

Third is signal weighting. The PM must define what inputs matter and how much they matter. A like is an explicit signal. A video completion is an implicit signal. The model must ingest both. But watch-time percentage should be weighted highest because it is the most honest signal. People lie with likes. They do not lie with their attention.

These are PM decisions, not engineering decisions. If you get them wrong, no amount of model tuning will save you.
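The session definition and the signal weighting can both be written down as a small spec before any model exists. This is a rough sketch, not a production pipeline. The 30-minute gap comes from the text. The weight values are hypothetical, and only their ordering (watch time highest, likes lowest) reflects the argument above.

```python
from datetime import datetime, timedelta

SESSION_GAP = timedelta(minutes=30)  # inactivity threshold from the text

# Hypothetical weights: only the ordering is taken from the article.
SIGNAL_WEIGHTS = {"watch_pct": 1.0, "share": 0.6, "save": 0.5, "like": 0.3}

def split_sessions(events, gap=SESSION_GAP):
    """Group (timestamp, video_id) events into sessions by inactivity gap."""
    sessions = []
    for ts, video_id in sorted(events):
        last_ts = sessions[-1][-1][0] if sessions else None
        if last_ts is not None and ts - last_ts <= gap:
            sessions[-1].append((ts, video_id))   # same session continues
        else:
            sessions.append([(ts, video_id)])     # gap exceeded: new session
    return sessions

def engagement_score(signals, weights=SIGNAL_WEIGHTS):
    """Weighted sum of signals in [0, 1]; unknown signal names are ignored."""
    return sum(weights[name] * value
               for name, value in signals.items() if name in weights)

t0 = datetime(2024, 1, 1, 9, 0)
events = [(t0, "a"), (t0 + timedelta(minutes=5), "b"),
          (t0 + timedelta(minutes=50), "c"), (t0 + timedelta(minutes=55), "d")]
sessions = split_sessions(events)
print(len(sessions))
print(engagement_score({"watch_pct": 0.95, "like": 1.0}))
```

The 45-minute gap between videos "b" and "c" splits the events into two sessions. Change `SESSION_GAP` and the training data changes with it, which is exactly why this is a PM decision.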

How the RNN Actually Works Under the Hood

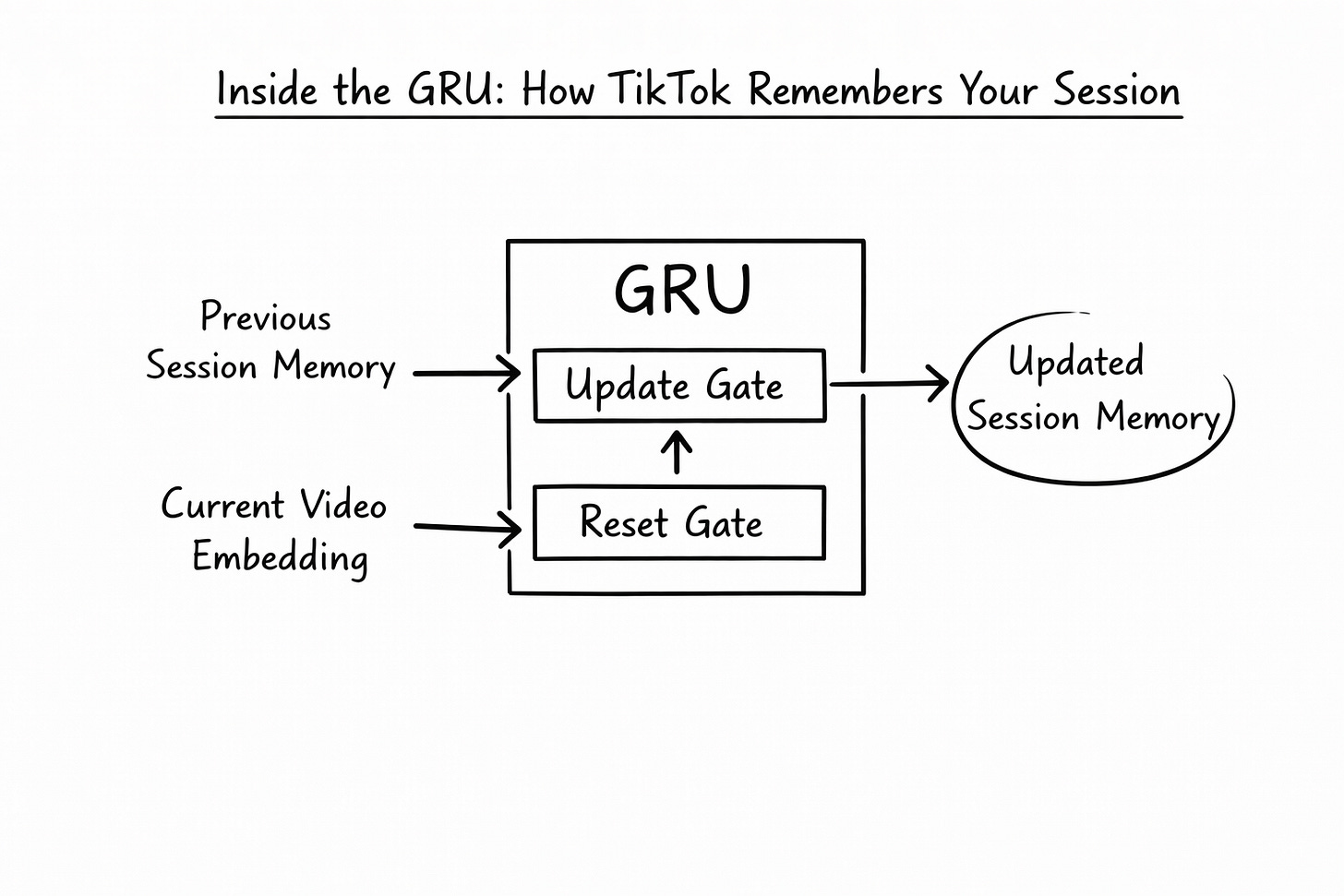

Let us look inside the GRU, the engine of the RNN session model.

When a user interacts with a video, that video is converted into an embedding vector. Think of the embedding as a numerical fingerprint that captures everything about that video in a compact format.

The core magic of the GRU is its Hidden State. This hidden state acts as the memory of the current session up to the current point in time.

As the user swipes to a new video, the GRU updates its memory. It uses two internal mechanisms.

The Update Gate decides how much of the old session memory to overwrite with new information. If the user’s recent behaviour is consistent, the gate stays close to zero and the session memory is largely preserved.

The Reset Gate decides how much of the past to ignore when forming the new candidate memory, because the user’s intent has shifted. If the user suddenly jumped from comedy to cooking, the reset gate drops towards zero and effectively says “Forget the comedy context, something new is happening.”

The formula for the memory update looks like this.

New Memory = (1 − Update Gate) × Old Memory + Update Gate × Candidate Memory

In plain language, the model blends the old session memory with the new signal based on how much the user’s intent has shifted. This blending happens after every single swipe. The memory is always fresh.
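For readers who want to see the blending step as code, here is a minimal pure-Python sketch of one GRU update. It follows the memory formula above. The weight names, the toy embeddings, and the random untrained weights are all assumptions for illustration, and bias terms are left out to keep it short.

```python
import math
import random

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def gru_step(x, h, p):
    """One GRU update: x is the video embedding, h the old session memory."""
    # Update gate z: how much of the memory is replaced by the candidate.
    z = [sigmoid(a + b) for a, b in zip(matvec(p["Wz"], x), matvec(p["Uz"], h))]
    # Reset gate r: how much of the old memory feeds into the candidate.
    r = [sigmoid(a + b) for a, b in zip(matvec(p["Wr"], x), matvec(p["Ur"], h))]
    gated_h = [r_i * h_i for r_i, h_i in zip(r, h)]
    candidate = [math.tanh(a + b)
                 for a, b in zip(matvec(p["Wh"], x), matvec(p["Uh"], gated_h))]
    # New Memory = (1 - Update Gate) * Old Memory + Update Gate * Candidate Memory
    return [(1 - z_i) * h_i + z_i * c_i for z_i, h_i, c_i in zip(z, h, candidate)]

def random_params(embed_dim, hidden_dim, seed=0):
    """Untrained toy weights; a real model would learn these."""
    rng = random.Random(seed)
    def mat(rows, cols):
        return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]
    params = {}
    for gate in ("z", "r", "h"):
        params["W" + gate] = mat(hidden_dim, embed_dim)
        params["U" + gate] = mat(hidden_dim, hidden_dim)
    return params

params = random_params(embed_dim=3, hidden_dim=4)
memory = [0.0] * 4  # blank slate at session start
session = [[1.0, 0.0, 0.0], [0.9, 0.1, 0.0], [0.0, 0.0, 1.0]]  # toy embeddings
for embedding in session:
    memory = gru_step(embedding, memory, params)
print(memory)
```

One caution if you read framework documentation alongside this: some libraries define the update gate the other way round, so that a high gate value keeps the old memory. The behaviour is the same. Only the naming convention flips.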

How the Model is Trained

You cannot train this like a normal classification problem. The video catalogue has millions of items, so scoring every one of them at each training step to predict the exact next video is prohibitively expensive.

Instead, we use a technique called Bayesian Personalised Ranking or BPR.

The idea is simple but powerful. Instead of predicting the exact next video, you train the model to rank the actual next video the user watched higher than a randomly sampled video the user did not watch.

You take the video that the user actually watched next. You call it the positive item. You randomly sample a video the user did not watch. You call it the negative item. Then you train the model so that the score for the positive item is always higher than the score for the negative item.

Over millions of such comparisons, the model learns what sequences of behaviour lead to what kinds of content. It learns the grammar of user intent.
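A single BPR comparison can be sketched in a few lines. The loss is the negative log of the sigmoid of the score difference, so it shrinks as the positive item pulls ahead of the negative one. The item vectors, the session memory values, and the dot-product scoring are assumptions for illustration.

```python
import math
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def bpr_loss(pos_score, neg_score):
    """-log(sigmoid(pos - neg)), computed in a numerically stable way."""
    diff = pos_score - neg_score
    if diff >= 0:
        return math.log1p(math.exp(-diff))
    return -diff + math.log1p(math.exp(diff))

def sample_negative(catalogue, watched, rng):
    """Pick a random video id that the user did not watch."""
    return rng.choice([v for v in catalogue if v not in watched])

# Toy item embeddings and session memory (hypothetical values).
item_vecs = {"diy_sink": [0.1, 1.0], "comedy_7": [1.0, -0.3], "diy_tiles": [0.2, 0.8]}
session_memory = [0.2, 0.9]   # would come from the GRU hidden state
watched = {"diy_sink"}
positive = "diy_sink"         # the video the user actually watched next

rng = random.Random(0)
negative = sample_negative(sorted(item_vecs), watched, rng)
loss = bpr_loss(dot(session_memory, item_vecs[positive]),
                dot(session_memory, item_vecs[negative]))
print(negative, loss)
```

Training repeats this comparison over many sessions and nudges the weights to reduce the loss. Note that uniform random negatives are the simplest choice, not necessarily the best one.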

Measuring Model Success: Offline and Online

You need two completely different sets of metrics here. One for the engineers during training. One for the business during A/B testing.

For offline ML metrics, you track Recall at K. Out of the top 20 videos the RNN predicted, was the actual next video the user watched in that list? If yes, the model is doing its job.

You also track Mean Reciprocal Rank. It is not enough to be in the top 20. Was it ranked number 1 or number 19? MRR heavily penalises the model if the correct prediction is buried at the bottom of the list.
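Both offline metrics are simple enough to compute by hand. A minimal sketch, with hypothetical function names and toy predictions:

```python
def recall_at_k(ranked_lists, actual_next, k=20):
    """Share of cases where the actual next video is in the top k."""
    hits = sum(1 for ranked, target in zip(ranked_lists, actual_next)
               if target in ranked[:k])
    return hits / len(actual_next)

def mrr_at_k(ranked_lists, actual_next, k=20):
    """Mean of 1/rank of the actual next video; 0 when it is outside the top k."""
    total = 0.0
    for ranked, target in zip(ranked_lists, actual_next):
        top = ranked[:k]
        if target in top:
            total += 1.0 / (top.index(target) + 1)
    return total / len(actual_next)

# Three toy predictions, each a ranked list of video ids.
predictions = [["a", "b", "c"], ["d", "e", "f"], ["g", "h", "i"]]
actual = ["a", "f", "z"]

recall = recall_at_k(predictions, actual, k=3)
mrr = mrr_at_k(predictions, actual, k=3)
print(recall, mrr)
```

Here the model gets two of three cases into the top 3, so Recall at 3 is 2/3. But one hit sits at rank 3, which drags MRR down to 4/9. Same hits, very different ranking quality.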

For online product metrics, you track Next-Click CTR. Does the user actually watch the immediately next video served by the RNN? You also track Session Length Extension. Does the RNN variant increase the average session length compared to the control group?

If Recall at 20 is high but Session Length is flat, your model is technically accurate but not creating the Rabbit Hole effect. Both metrics must move together. This is where AI PMs earn their salary. Bridging the gap between what the ML team optimises and what the business actually needs.

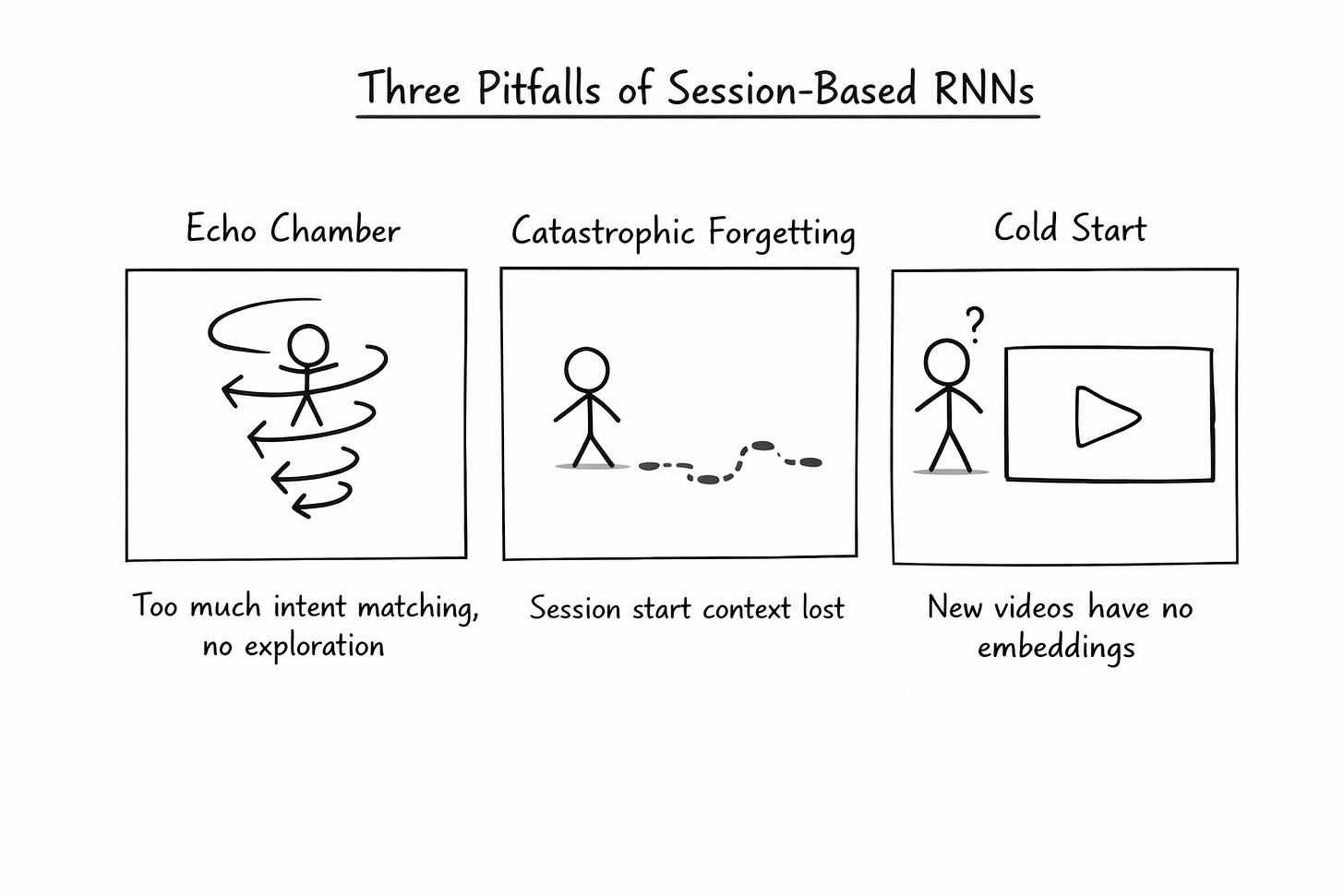

The Three Pitfalls That Will Destroy Your Product

If you implement this blindly, you will destroy your user experience. There are three pitfalls every AI PM must guard against.

The first is the Echo Chamber problem. RNNs are almost too good at detecting immediate intent. If a user pauses on a sad video for two seconds too long, the RNN might plunge them into a depressive rabbit hole, serving nothing but sad content for the rest of the session. The solution is to inject random exploration videos using multi-armed bandits. You intentionally break the sequence to test for new intents. This is a PM decision, not a model decision. TikTok’s own algorithm does this. It deliberately injects novelty and diversity into the feed to prevent monotony and to protect users from harmful content spirals.
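The simplest bandit-style strategy is epsilon-greedy: most of the time serve the model's top pick, and occasionally serve something from an exploration pool. The sketch below is an assumption about how such injection could look, not a description of TikTok's system. The function name, the pool, and the 10% rate are all hypothetical.

```python
import random

def next_video(ranked_by_model, exploration_pool, epsilon=0.1, rng=random):
    """With probability epsilon serve an exploration video, else the model's top pick."""
    if exploration_pool and rng.random() < epsilon:
        return rng.choice(exploration_pool), "explore"
    return ranked_by_model[0], "exploit"

rng = random.Random(42)
ranked = ["sad_clip_9", "sad_clip_4", "sad_clip_2"]   # model is stuck on one intent
pool = ["cooking_1", "travel_3", "diy_sink"]          # deliberately different topics

served = [next_video(ranked, pool, epsilon=0.1, rng=rng) for _ in range(1000)]
explore_share = sum(1 for _, mode in served if mode == "explore") / len(served)
print(explore_share)
```

The value of `epsilon` is the product lever. Too low and the spiral continues. Too high and the feed feels random.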

The second is forgetting over long sessions, sometimes loosely called Catastrophic Forgetting. Standard RNNs heavily weight the most recent clicks and forget the beginning of the session. If a session is 100 swipes long, the intent from swipe 10 might still be relevant. But the GRU might have forgotten it entirely. This is why some teams use attention mechanisms on top of GRUs, allowing the model to look back at any point in the session, not just the most recent swipes.
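The look-back idea can be sketched as dot-product attention over the stored hidden states. This is one common form of attention, used here as an assumption. The hidden states and the query vector are toy values.

```python
import math

def attend(hidden_states, query):
    """Softmax-weighted average of all hidden states, scored against the query."""
    scores = [sum(h_i * q_i for h_i, q_i in zip(h, query)) for h in hidden_states]
    top = max(scores)                              # subtract max for stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    context = [sum(w * h[d] for w, h in zip(weights, hidden_states))
               for d in range(len(query))]
    return context, weights

# One stored hidden state per swipe (toy values); the first is an early swipe.
states = [[2.0, 0.0], [0.0, 1.0], [0.1, 1.0]]
query = [1.0, 0.0]   # hypothetical query that resembles the early intent

context, weights = attend(states, query)
print(weights)
```

The largest weight lands on the first state even though it is the oldest. The model can reach back to swipe 1 directly instead of hoping the memory survived every update since.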

The third is Cold Start on New Items. The RNN is great at handling new users because it builds understanding from the very first swipe. But it struggles with brand-new videos that have no embeddings yet. You still need content-based filtering to push new creator videos into the system until they accumulate enough interaction data. TikTok solves this through a tiered distribution system. Every new video is first shown to a small, highly targeted test group. If engagement is strong within that group, the video gets pushed to a larger audience. This is how a creator with 200 followers can wake up with 10 million views.
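The tiered rollout can be sketched as a simple gate: show the video to one tier, and only promote it if engagement clears a bar. The tier sizes and the promotion threshold below are invented for illustration. TikTok's real values are not public in the text above.

```python
def audience_reached(engagement_by_tier, tier_sizes=(300, 5_000, 100_000),
                     promote_above=0.4):
    """Walk the tiers in order; stop at the first tier whose engagement misses the bar."""
    reached = 0
    for size, rate in zip(tier_sizes, engagement_by_tier):
        reached = size                 # the video was shown to this tier
        if rate < promote_above:
            break                      # not promoted any further
    return reached

print(audience_reached([0.2, 0.0, 0.0]))   # fails the first test group
print(audience_reached([0.6, 0.3, 0.0]))   # promoted once, then stalls
print(audience_reached([0.6, 0.5, 0.7]))   # clears every tier
```

Notice that follower count never appears in the function. Distribution depends only on how each test group responds.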

AI Product Management is not about throwing LLMs at every problem. It is about understanding the structural reality of your user data and connecting it to the business's strategic goals.

If this article changed how you think about recommendation systems and product strategy, you will find much more depth in our AI PM course. We cover system design for recommendations, RAG architectures, AI metrics, agentic systems, and real interview questions from top companies.


About Author

Shailesh Sharma. I help PMs and business leaders excel in Product, Strategy, and AI using First Principles Thinking. I also run weekly live webinars and masterclasses.
