Memory in AI - Part 1

The retention problem hiding inside every AI product

Everyone talks about AI features these days.

But very few product teams understand what actually makes AI feel intelligent.

It is not the model. It is not the UI. It is not even the speed.

It is memory.

Right now, most AI products operate on a single memory variable.

What the AI knows = What’s in the current conversation window

The session ends. The knowledge disappears. The next conversation starts from zero.

To understand why, we need to examine what determines whether an AI product feels magical or mediocre.

First-Principles Breakdown of AI Usefulness

AI product value depends on three multiplied factors.

Context × Reasoning × Memory = Perceived Intelligence

Most teams obsess over Reasoning. They chase better models, more parameters, faster inference.

But there is a problem. Reasoning without memory is like hiring a brilliant employee who forgets everything at the end of every day.

You get a fast answer. But you never get a useful relationship.

This is the gap that separates AI tools people demo from AI tools people actually rely on. An AI product with well-designed memory compounds. The longer the user stays, the more context the AI holds. The more context the AI holds, the more useful it becomes. The more useful it becomes, the harder it is to leave. In other words, memory raises the switching cost.

So what do we mean by Memory in AI?

Before we go further, let’s pin down what memory means, because the term is used very loosely these days.

When we talk about memory in AI systems, we are really talking about one question: What does the model know, and when does it know it?

There are four distinct types of memory in LLM-based products. Each works differently. Each has a different cost and a different product implication.

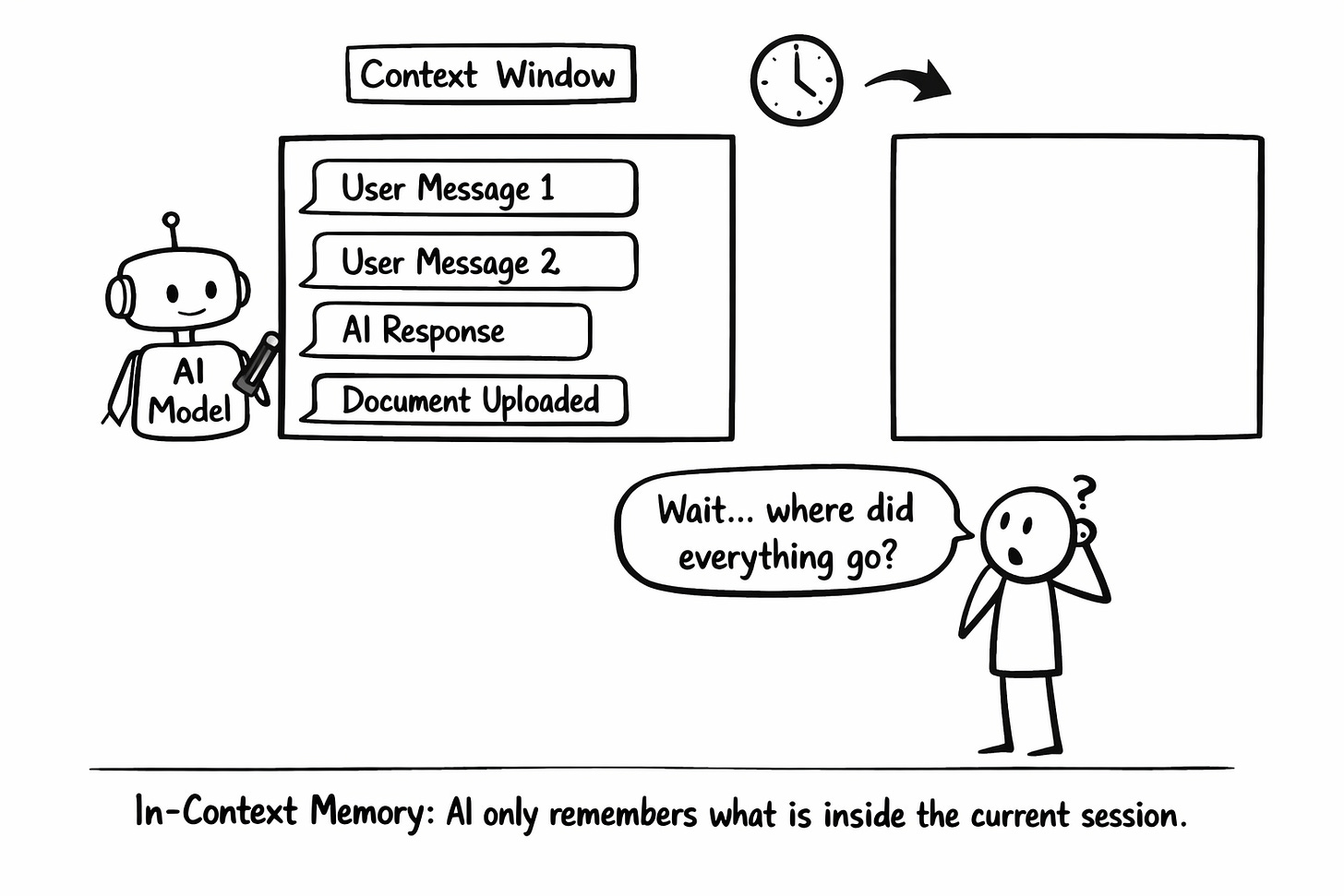

In-Context Memory

This is the most immediate form of memory. The AI reads everything in the active session (your messages, its responses, any documents you've pasted) and uses that to respond. When the session ends, it's gone.

Context windows have token limits. You can't fit infinite history into them. And the longer the context, the more expensive the inference.
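To make the token limit concrete, here is a minimal sketch of how a product might trim conversation history to fit a budget. Everything in it is an assumption for illustration: `estimate_tokens` is a crude word count rather than a real tokenizer, and `fit_to_window` is a hypothetical helper, not any vendor's API.

```python
from typing import Dict, List


def estimate_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    # A real product would use the model's own tokenizer.
    return len(text.split())


def fit_to_window(messages: List[Dict[str, str]], max_tokens: int) -> List[Dict[str, str]]:
    """Keep the most recent messages whose combined estimate fits the budget."""
    kept: List[Dict[str, str]] = []
    used = 0
    # Walk backwards so the newest messages are kept first.
    for message in reversed(messages):
        cost = estimate_tokens(message["content"])
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    {"role": "user", "content": "My salary is 90k and I want to buy a house"},
    {"role": "assistant", "content": "Noted. What is your risk tolerance?"},
    {"role": "user", "content": "Moderate. How much should I put in index funds?"},
]

# With a small budget, the oldest message (the one holding the salary) is dropped.
window = fit_to_window(history, max_tokens=16)
for message in window:
    print(message["role"], ":", message["content"])
```

Notice what gets lost: the earliest message is often the one holding the most important facts. That is the in-context trade-off in miniature.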

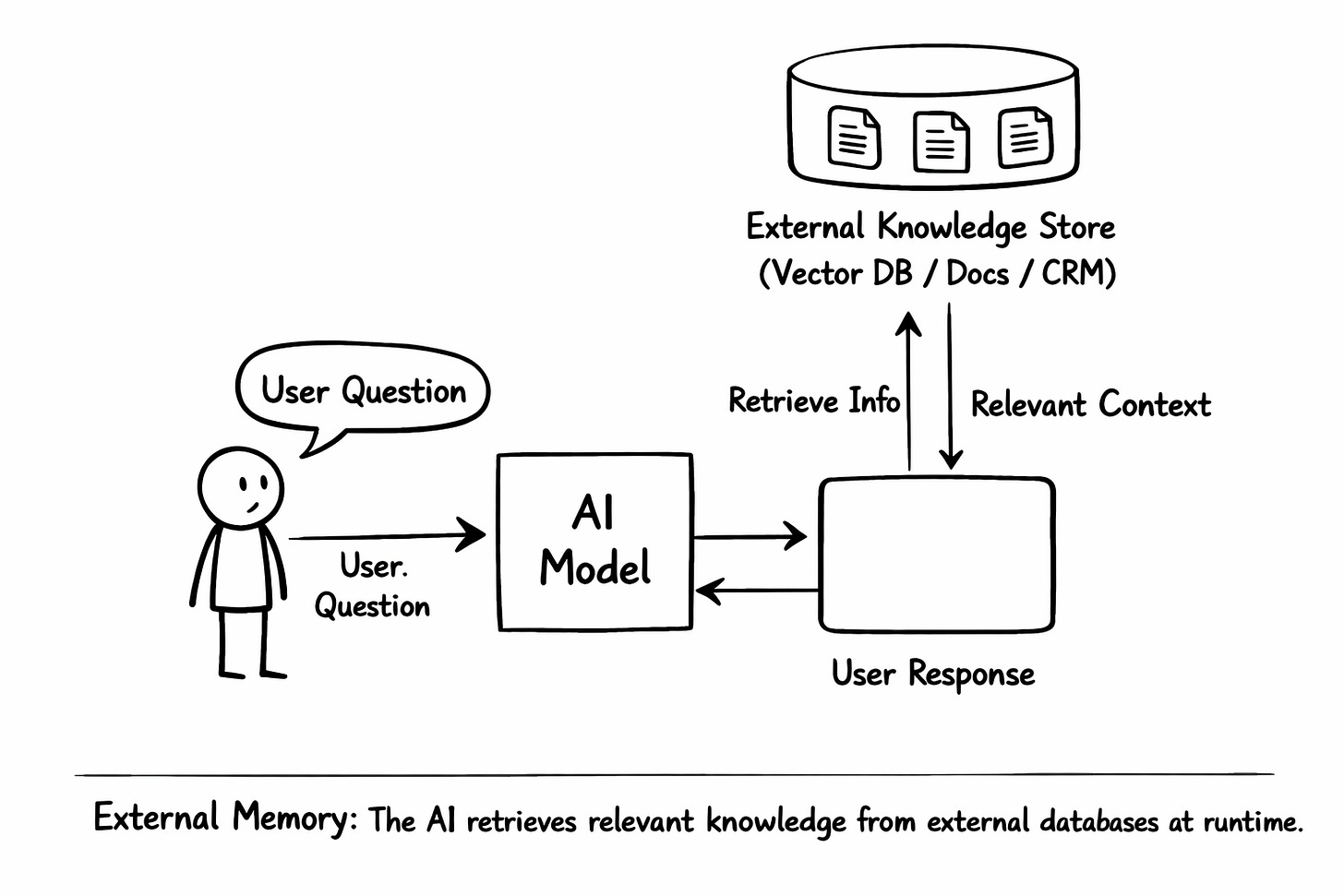

External / Retrieval Memory

External memory lives in a store outside the model that is queried at runtime.

The AI doesn’t store anything itself. Instead, relevant information is retrieved from an external store, such as a vector database, a CRM, or a document store, and inserted into the prompt before the model responds.

Here, response quality is capped by retrieval quality. If the wrong facts are retrieved, the model answers from the wrong facts.
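The retrieve-then-inject flow can be sketched in a few lines. This is a toy under stated assumptions: word-count vectors and cosine similarity stand in for a real embedding model and vector database, and `retrieve` and `build_prompt` are hypothetical names, as are the stored facts.

```python
import math
import re
from collections import Counter
from typing import List


def vectorize(text: str) -> Counter:
    # Bag-of-words stand-in for an embedding (assumption, not a real embedding model).
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[term] * b[term] for term in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, store: List[str], k: int = 1) -> List[str]:
    """Return the k stored facts most similar to the query."""
    q = vectorize(query)
    ranked = sorted(store, key=lambda doc: cosine(q, vectorize(doc)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, store: List[str], k: int = 1) -> str:
    """Inject the retrieved facts into the prompt before the model sees the question."""
    facts = "\n".join(f"- {fact}" for fact in retrieve(query, store, k))
    return f"Known facts about the user:\n{facts}\n\nQuestion: {query}"


# Hypothetical stored facts, made up for illustration.
store = [
    "User salary is 90000 per year",
    "User risk tolerance is moderate",
    "User goal is to buy a house in three years",
]

prompt = build_prompt("What is my risk tolerance?", store)
print(prompt)
```

The model only ever sees what `retrieve` returns. Swap in a weaker similarity function and the prompt silently gets worse, which is why retrieval quality deserves as much product attention as the model itself.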

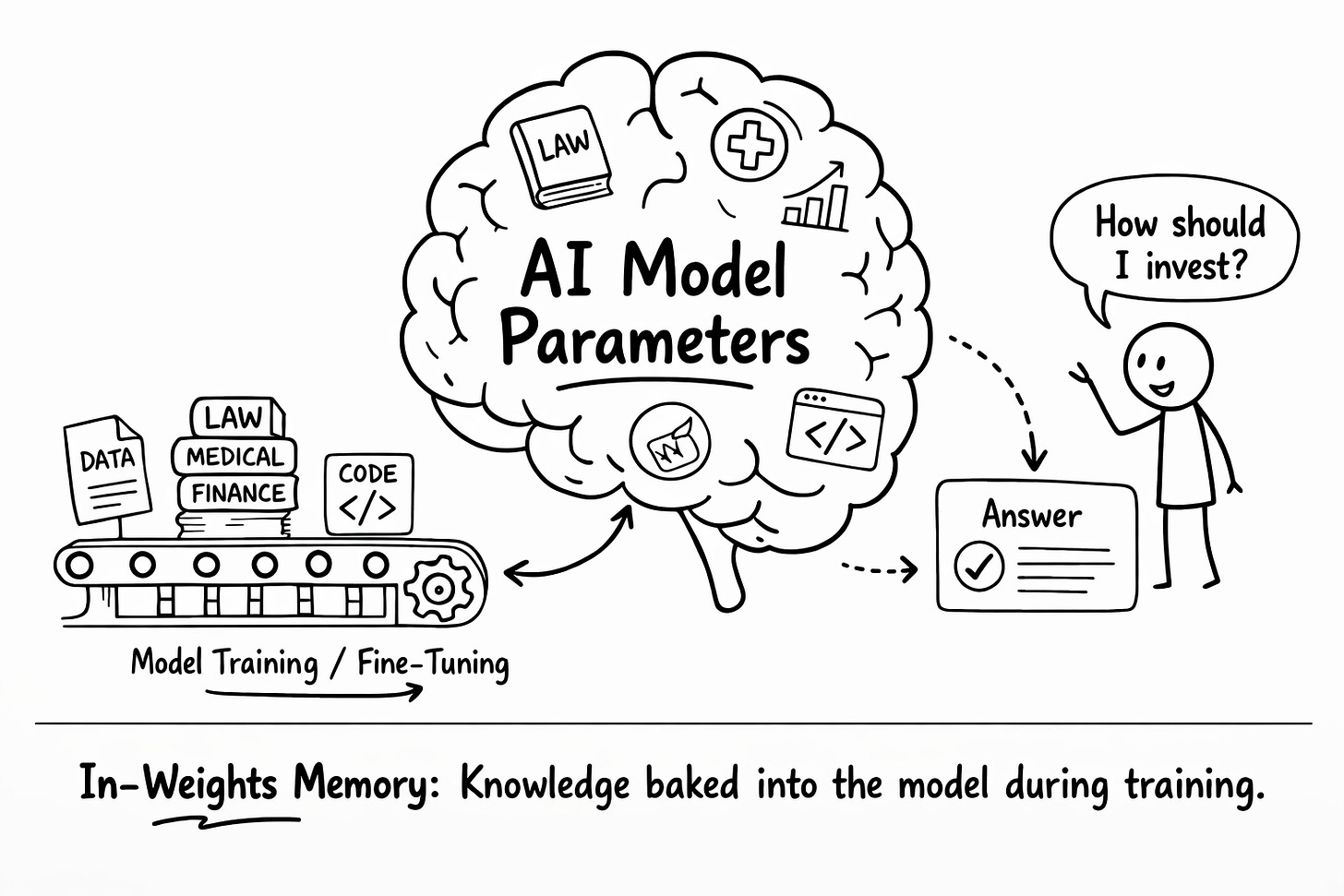

In-Weights Memory

This is the kind of memory where the knowledge is baked into the model during training or fine-tuning. It lives in the billions of parameters of the model itself. You can’t “update” it without retraining, and retraining is expensive.

Fine-tuning makes sense when your domain has a consistent, learnable structure, for example legal, medical, or financial work. It's a long-term investment, not a quick feature. Don't reach for fine-tuning when retrieval will do the job.

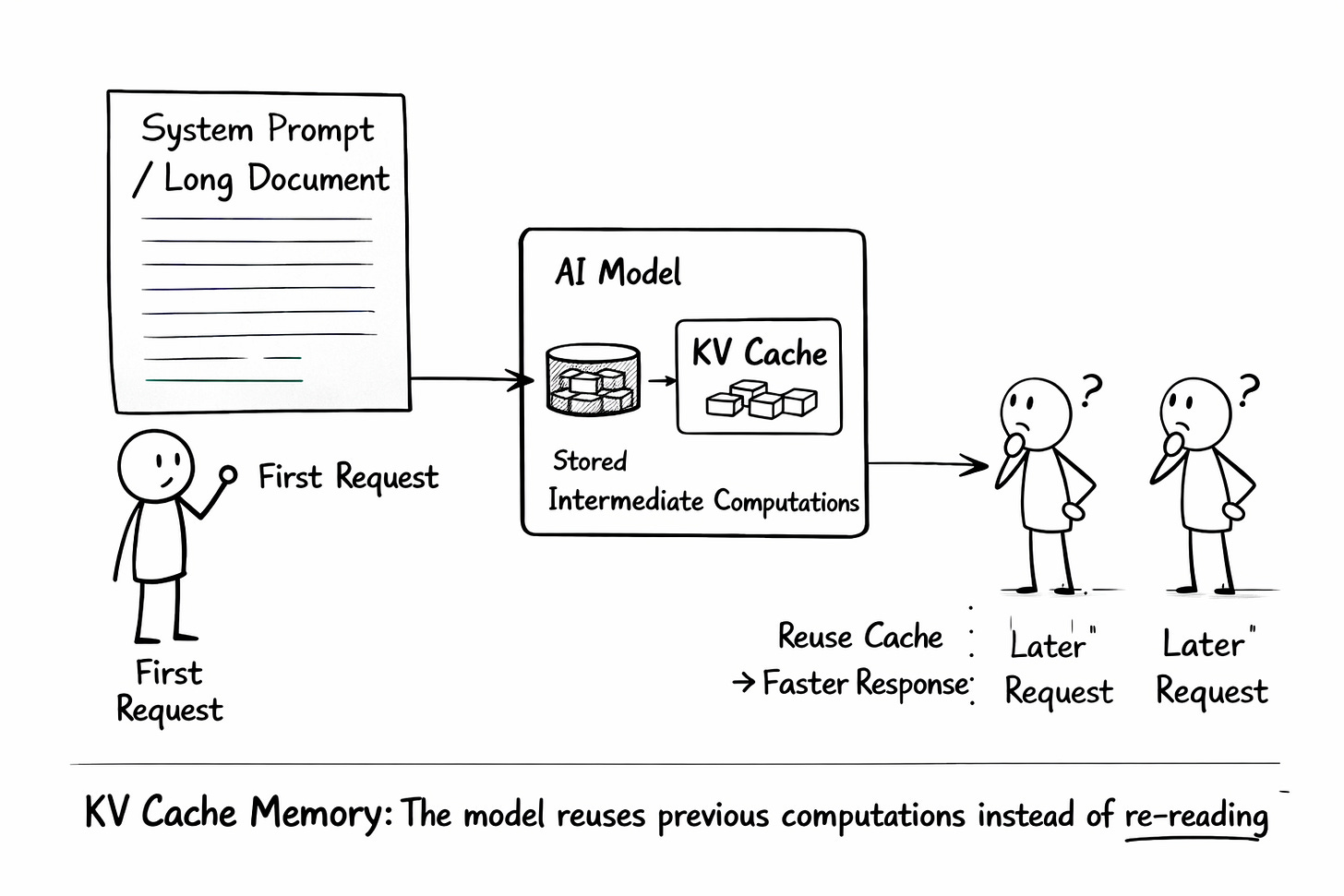

In-Cache / KV Cache Memory

This is the most technical type and the most invisible to users. When the same system prompt or document is used repeatedly, the model's intermediate computations over that text (the key-value cache, or KV cache) can be stored and reused. The model doesn't reprocess it from scratch every time, which saves both latency and compute on repeated prefixes.
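The reuse idea can be illustrated with a toy prefix cache. To be clear about the assumptions: this is an analogy, not a real KV cache. A real model stores key and value tensors per attention layer, while the hypothetical `PrefixCache` below just memoizes a cheap stand-in computation so the hit-and-miss behaviour is visible.

```python
import hashlib
from typing import Dict, List


class PrefixCache:
    """Toy stand-in for a KV cache: stores the processed form of a prompt prefix."""

    def __init__(self) -> None:
        self._store: Dict[str, List[int]] = {}
        self.hits = 0
        self.misses = 0

    def _process(self, text: str) -> List[int]:
        # Stand-in for the expensive forward pass over the prefix.
        return [len(word) for word in text.split()]

    def get_state(self, prefix: str) -> List[int]:
        key = hashlib.sha256(prefix.encode("utf-8")).hexdigest()
        if key in self._store:
            self.hits += 1  # prefix seen before: reuse the stored work
        else:
            self.misses += 1  # first time: do the work and store it
            self._store[key] = self._process(prefix)
        return self._store[key]


def answer(cache: PrefixCache, system_prompt: str, user_message: str) -> int:
    prefix_state = cache.get_state(system_prompt)  # reused across calls
    suffix_state = [len(word) for word in user_message.split()]  # always computed fresh
    return len(prefix_state) + len(suffix_state)  # positions covered in total


cache = PrefixCache()
system_prompt = "You are a careful financial planning assistant."
answer(cache, system_prompt, "How much should I save?")
answer(cache, system_prompt, "What about index funds?")
print("misses:", cache.misses, "hits:", cache.hits)
```

The second call pays only for the new user message. Users never see this, but it shows up in your latency and your bill.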

The Goldfish Problem

Let’s look at what happens when a product team ignores these memory types and relies entirely on a blank-slate session. This is the Goldfish Problem.

Imagine a user spends twenty minutes interacting with an AI financial planner. They input their salary, their risk tolerance, and their goal to buy a house in three years. The AI gives them a brilliant, personalised breakdown.

The next day, the user logs back in and asks, “How much should I allocate to index funds this month?”

The AI responds: “I can help with that! To get started, what is your current salary and risk tolerance?”

The magic is instantly broken. The user realizes they aren’t talking to an intelligent assistant; they are talking to a calculator that resets every time the screen turns off. That right there is a churn event.
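Avoiding that reset does not require anything exotic. Here is a minimal sketch, assuming a per-user JSON file as the persistent store. `ProfileStore` and `start_session` are hypothetical names, and the salary figure is made up for illustration. A real product would use a proper database and decide carefully what to keep.

```python
import json
import os
import tempfile
from typing import Dict


class ProfileStore:
    """Persists a small user profile to disk so it survives across sessions."""

    def __init__(self, path: str) -> None:
        self.path = path

    def load(self) -> Dict[str, str]:
        if not os.path.exists(self.path):
            return {}
        with open(self.path, "r", encoding="utf-8") as handle:
            return json.load(handle)

    def remember(self, key: str, value: str) -> None:
        profile = self.load()
        profile[key] = value
        with open(self.path, "w", encoding="utf-8") as handle:
            json.dump(profile, handle)


def start_session(store: ProfileStore, question: str) -> str:
    """Build the opening prompt for a new session from whatever was remembered."""
    profile = store.load()
    if not profile:
        return f"No stored profile.\nQuestion: {question}"
    facts = "\n".join(f"- {key}: {value}" for key, value in sorted(profile.items()))
    return f"Stored profile:\n{facts}\nQuestion: {question}"


path = os.path.join(tempfile.mkdtemp(), "profile.json")

# Day one: the user shares their details and the product writes them down.
day_one = ProfileStore(path)
day_one.remember("salary", "90000")
day_one.remember("risk_tolerance", "moderate")
day_one.remember("goal", "buy a house in three years")

# Day two: a brand-new session object, same underlying store.
day_two = ProfileStore(path)
prompt = start_session(day_two, "How much should I allocate to index funds this month?")
print(prompt)
```

On day two the prompt already carries the salary, risk tolerance, and goal, so the assistant can answer the question instead of asking the user to start over.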

So the Memory Gap Is a Retention Gap

If this article raised more questions than it answered — that is a good sign. It means you can see the gap.

If this article made you think differently about AI products, Part 2 and Part 3 will go deeper into memory architecture.

And if you want the full structured path — from AI foundations ( ML Algorithms, Systems) to Vibe Coding, RAG, Evals, AI Strategy, AI Pricing to interview prep — in one place built for PMs, that is what our course covers.


About Author

Shailesh Sharma! I help PMs and business leaders excel in Product, Strategy, and AI using First Principles Thinking. For more, check out my AI Product Management Course, PM Interview Mastery Course, Cracking Strategy, and other Resources