Solving the GenAI Latency Problem

The 1-Line Prompting Mistake Killing Your AI's Unit Economics

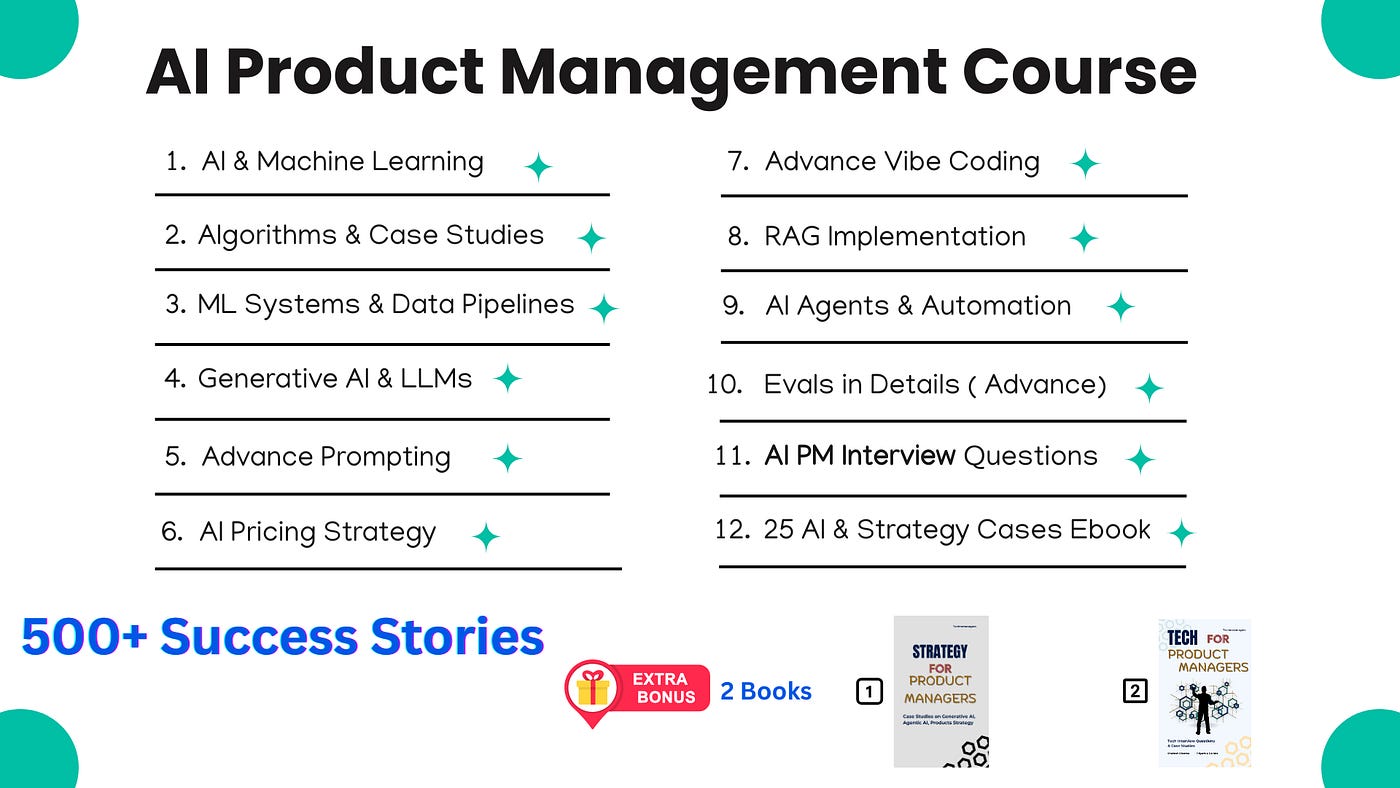

Imagine you are a Senior Product Manager at Amazon.

You are building a new GenAI feature, an interactive shopping assistant, right on the product details page.

A customer is looking at a complex, high-end DSLR camera.

To make the AI truly helpful, you load the camera’s entire 50-page technical manual, hundreds of customer reviews, and your rigid system instructions into the LLM’s context window.

The user types: Is this camera weather-sealed?

Spinning loading wheel → 6 seconds later → Yes.

Then the user asks: What’s the return policy on this?

Spinning loading wheel → 8 seconds later → You have 30 days.

You realise you have a massive problem.

The latency is completely ruining the B2C customer experience: shoppers are abandoning the chat because it’s too slow.

Also, your compute costs are destroying the feature’s unit economics.

Why? Because for every single question the user asks, the LLM is re-reading and re-processing that massive 50-page manual from scratch.

Today, we are doing a First Principles Breakdown of the infrastructure solution to this exact problem: Prompt Caching.

What Prompt Caching is NOT

Let’s clear up a common misconception. When you tell an engineer you want to cache the responses, they might think of traditional output caching (like caching a standard SQL database query).

Output caching means storing the final generated response.

If User A asks What is the capital of France? and User B asks the same thing, you just serve the cached answer.

But in our scenario, users are asking different dynamic questions about the same static product manual. Output caching won’t help us here.
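A minimal Python sketch of traditional output caching makes the limitation concrete. The cache key is the exact question string, and `fake_llm` is a hypothetical stand-in for a real model call: an identical question is a hit, but a new question about the same product misses entirely.

```python
# A minimal sketch of traditional output caching: the key is the exact
# question text, and the value is the final generated answer.
output_cache: dict[str, str] = {}


def answer_with_output_cache(question: str, generate) -> tuple[str, bool]:
    """Return (answer, cache_hit). `generate` stands in for a real LLM call."""
    if question in output_cache:
        return output_cache[question], True
    answer = generate(question)
    output_cache[question] = answer
    return answer, False


def fake_llm(question: str) -> str:
    # Hypothetical stand-in for an expensive model call.
    return f"answer to: {question}"


_, hit_a = answer_with_output_cache("What is the capital of France?", fake_llm)
_, hit_b = answer_with_output_cache("What is the capital of France?", fake_llm)
_, hit_c = answer_with_output_cache("Is this camera weather-sealed?", fake_llm)
print(hit_a, hit_b, hit_c)  # False True False
```

The second shopper question reuses none of the work done for the first, even though both depend on the same manual.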

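Prompt caching tackles the input bottleneck instead. It caches the model’s computation over the prompt, not the answer, so every question that reuses the same manual can skip most of the prefill work.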

The First Principles of Prompt Caching

To understand how this cuts both latency and cost, we need to look under the hood at how Transformers process text.

When you send your massive prompt to an LLM, before it can generate a single token of its answer, it goes through the prefill phase.

For every single token in your 50-page manual, the model computes Key-Value (KV) pairs across dozens of transformer layers.

Think of these KV pairs as the model’s internal mathematical representation of your prompt: at every layer, each token’s Key and Value vectors are what later tokens attend to when working out how words relate to one another.
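To get a feel for how much state these KV pairs represent, the sketch below applies the standard size formula: one Key and one Value vector per token, per KV head, per layer. The model shape (32 layers, 8 KV heads, head dimension 128, 16-bit values) and the 30,000-token manual are illustrative assumptions, not the specs of any particular deployment.

```python
def kv_cache_bytes(n_tokens: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Memory needed to hold the Key and Value tensors for n_tokens.

    The leading factor of 2 counts one Key vector and one Value vector
    per token, per KV head, per layer.
    """
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value * n_tokens


# Hypothetical model shape and prompt length (assumptions, not a real spec).
per_token = kv_cache_bytes(1, n_layers=32, n_kv_heads=8, head_dim=128)
manual = kv_cache_bytes(30_000, n_layers=32, n_kv_heads=8, head_dim=128)
print(per_token // 1024, "KiB per token")            # 128 KiB per token
print(f"{manual / 1024**3:.2f} GiB for the manual")  # 3.66 GiB for the manual
```

Under these assumptions, the precomputed state for one manual runs to a few gigabytes, which is also why providers cannot keep it around indefinitely.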

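Computing these KV pairs for thousands of tokens takes a lot of arithmetic: a forward pass costs roughly two floating-point operations per model parameter per token, so a multi-billion-parameter model spends trillions of operations prefilling a long manual, plus attention work that grows with prompt length. It is computationally expensive and painfully slow.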
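A back-of-the-envelope sketch of the prefill cost, using the common rule of thumb of about 2 FLOPs per parameter per token. The 8-billion-parameter model, 30,000-token manual, and 20-token question are hypothetical numbers chosen for illustration.

```python
def approx_prefill_flops(n_params: float, n_tokens: int) -> float:
    """Rule-of-thumb forward-pass cost: about 2 FLOPs per parameter per token.

    This ignores the attention term, which grows with sequence length,
    so it underestimates the cost of very long prompts.
    """
    return 2 * n_params * n_tokens


N_PARAMS = 8e9          # hypothetical 8B-parameter model
MANUAL_TOKENS = 30_000  # hypothetical size of the 50-page manual
QUESTION_TOKENS = 20    # hypothetical size of one user question

full = approx_prefill_flops(N_PARAMS, MANUAL_TOKENS + QUESTION_TOKENS)
cached = approx_prefill_flops(N_PARAMS, QUESTION_TOKENS)
print(f"uncached prefill:   {full:.2e} FLOPs")    # 4.80e+14
print(f"with cached manual: {cached:.2e} FLOPs")  # 3.20e+11
print(f"ratio: {full / cached:.0f}x")             # 1501x
```

Under these assumptions the cached path does roughly 1/1500th of the prefill work. The real saving is somewhat smaller, because the new question tokens still attend over the cached keys and values.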

Prompt caching simply stores these precomputed KV pairs.

When your Amazon shopper asks their second question, the system recognises that the prompt begins with the same product manual, retrieves the cached KV pairs, and only processes the few tokens of the new question.
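The toy simulation below shows the effect on the second question. It only counts tokens rather than storing real KV tensors, and a whitespace split stands in for a real tokenizer; `ToyPrefixCache` is a hypothetical illustration, not how any particular provider implements caching.

```python
class ToyPrefixCache:
    """Toy model of prompt caching: remembers which token prefixes have
    already been prefilled and counts only the tokens it must compute.

    Real systems keep KV tensors in accelerator memory; here we only
    track token sequences.
    """

    def __init__(self) -> None:
        self.cached_prompts: list[list[str]] = []

    def _longest_cached_prefix(self, tokens: list[str]) -> int:
        best = 0
        for cached in self.cached_prompts:
            n = 0
            while n < min(len(cached), len(tokens)) and cached[n] == tokens[n]:
                n += 1
            best = max(best, n)
        return best

    def prefill(self, tokens: list[str]) -> int:
        """Return how many tokens had to be computed from scratch."""
        reused = self._longest_cached_prefix(tokens)
        self.cached_prompts.append(tokens)
        return len(tokens) - reused


# Hypothetical prompt: 7 instruction "tokens" plus a 1,000-token stand-in manual.
static_context = ("You are a helpful Amazon shopping assistant. " + "manual " * 1000).split()
cache = ToyPrefixCache()
first = cache.prefill(static_context + "Is this camera weather-sealed?".split())
second = cache.prefill(static_context + "What's the return policy on this?".split())
print(first, second)  # 1011 6
```

The first question pays for the whole prompt; the second pays only for its own six tokens.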

The Prefix Matching Rule: Structuring Your Prompt

For this to work, the serving system relies on Prefix Matching.

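The cache system matches your prompt token by token from the absolute beginning. The moment it encounters a token that differs from what is cached, reuse stops, and every token from that point onward goes through expensive normal processing. (Many systems match in fixed-size blocks of tokens, so the reusable prefix is rounded down to a whole block.)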
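The matching rule can be sketched as a longest-common-prefix scan. The 16-token block size is an assumption for illustration (real systems choose their own granularity), and the integer lists stand in for token ids.

```python
def reusable_prefix_tokens(cached: list[int], prompt: list[int],
                           block_size: int = 16) -> int:
    """Number of leading prompt tokens whose cached KV pairs can be reused.

    Matching runs token by token from the start and stops at the first
    mismatch. Assumes the cache is managed in whole blocks, so the match
    is rounded down to a multiple of block_size.
    """
    n = 0
    limit = min(len(cached), len(prompt))
    while n < limit and cached[n] == prompt[n]:
        n += 1
    return (n // block_size) * block_size


cached = list(range(100))                 # token ids from an earlier request
same_prefix = list(range(90)) + [999, 5]  # diverges at position 90
print(reusable_prefix_tokens(cached, same_prefix))         # 80
print(reusable_prefix_tokens(cached, [999] + cached[1:]))  # 0
```

A single differing token at position 0 wipes out reuse for everything after it, even though the remaining 99 tokens are identical.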

This means your prompt structure directly dictates your unit economics.

If you are building this Amazon assistant, you must structure your prompt to put all the static content first:

1. System Instructions (You are a helpful Amazon shopping assistant.)
2. Few-Shot Examples (How to format the output)
3. The 50-Page Product Manual (The static context)
4. The User’s Question (The dynamic content: What’s the return policy?)

If you make the mistake of putting the user’s dynamic question at the top of the prompt, the cache will fail immediately on the very first differing token.

The LLM will have to recalculate the KV pairs for the entire 50-page manual again. Always put the dynamic components at the very end.
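A quick comparison of the two orderings, using the same prefix-matching idea. The section contents are hypothetical placeholders and whitespace splitting again stands in for a tokenizer; the point is only how many leading tokens two consecutive questions share.

```python
def build_prompt(system: str, examples: str, manual: str, question: str,
                 dynamic_first: bool = False) -> list[str]:
    """Assemble the prompt sections; whitespace split stands in for a tokenizer."""
    static = [system, examples, manual]
    parts = ([question] + static) if dynamic_first else (static + [question])
    return " ".join(parts).split()


def shared_prefix_len(a: list[str], b: list[str]) -> int:
    """Length of the common leading run of tokens (the reusable prefix)."""
    n = 0
    while n < min(len(a), len(b)) and a[n] == b[n]:
        n += 1
    return n


# Hypothetical section contents.
SYSTEM = "You are a helpful Amazon shopping assistant."
EXAMPLES = "Q: example question A: example answer"
MANUAL = "page " * 5000
q1, q2 = "Is this camera weather-sealed?", "What's the return policy on this?"

for dynamic_first in (False, True):
    p1 = build_prompt(SYSTEM, EXAMPLES, MANUAL, q1, dynamic_first)
    p2 = build_prompt(SYSTEM, EXAMPLES, MANUAL, q2, dynamic_first)
    print(f"dynamic_first={dynamic_first}: "
          f"{shared_prefix_len(p1, p2)} of {len(p2)} tokens reusable")
# dynamic_first=False: 5013 of 5019 tokens reusable
# dynamic_first=True: 0 of 5019 tokens reusable
```

Same content, same questions: only the order of the sections decides whether almost the whole prompt is reusable or none of it is.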

Key Takeaways for Execution

When you sit down with your engineering team to implement this, keep these constraints in mind:

Minimum K Tokens: Prompt caching isn’t for short prompts. Providers only cache prompts above a minimum length of K tokens (OpenAI, for example, documents a minimum of 1,024 tokens). Below that threshold, the overhead of managing the cache outweighs the compute savings.

Time-to-Live (TTL): Caches do not last forever. Providers usually evict cached prefixes after 5 to 10 minutes of inactivity to free up memory (though some offer extended retention of up to 24 hours).

Implicit vs. Explicit API Calls: Some model providers handle this prefix matching automatically. Others require your engineers to explicitly mark which parts of your prompt should be cached in your API calls.
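To see how the minimum-length threshold and cached-token pricing feed into unit economics, here is a simple cost model. The price, the 10% cached-token rate, and the value standing in for K are hypothetical placeholders; check your provider’s current pricing page for real figures.

```python
def input_cost_usd(prompt_tokens: int, cached_tokens: int,
                   price_per_mtok: float, cached_multiplier: float,
                   min_cacheable: int) -> float:
    """Input-token cost of one request under a simple caching price model.

    Below the provider's minimum prompt length nothing is cached, so every
    token is billed at the full rate.
    """
    if prompt_tokens < min_cacheable:
        cached_tokens = 0
    uncached = prompt_tokens - cached_tokens
    return (uncached + cached_tokens * cached_multiplier) * price_per_mtok / 1_000_000


# All prices and thresholds below are hypothetical placeholders.
PRICE = 3.00     # USD per million input tokens
DISCOUNT = 0.10  # cached tokens billed at 10% of the base rate
K = 1024         # assumed minimum cacheable prompt length

no_cache = input_cost_usd(30_020, 0, PRICE, DISCOUNT, K)
with_cache = input_cost_usd(30_020, 30_000, PRICE, DISCOUNT, K)
print(f"${no_cache:.4f} vs ${with_cache:.4f} per question")  # $0.0901 vs $0.0091 per question
```

Some providers also bill the first write of a prefix into the cache at a premium, which this sketch leaves out.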
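For providers that use explicit markers, the request has to say where the cacheable prefix ends. The sketch below builds a request body in the shape of Anthropic’s Messages API, which uses a `cache_control` field on a content block; the model id and manual text are placeholders, and the code only builds and prints the JSON rather than calling the API.

```python
import json


def build_cached_request(manual_text: str, question: str) -> dict:
    """Request body with an explicit cache breakpoint after the static context.

    The model id is a placeholder, not a recommendation.
    """
    return {
        "model": "MODEL_ID_PLACEHOLDER",
        "max_tokens": 512,
        "system": [
            {"type": "text",
             "text": "You are a helpful Amazon shopping assistant."},
            {"type": "text",
             "text": manual_text,
             # The prefix up to and including this block is marked cacheable.
             "cache_control": {"type": "ephemeral"}},
        ],
        # The dynamic question goes last, after the cache breakpoint.
        "messages": [{"role": "user", "content": question}],
    }


body = build_cached_request("<50-page manual text>", "Is this camera weather-sealed?")
print(json.dumps(body, indent=2))
```

Placing the breakpoint on the manual block mirrors the ordering rule: everything static sits before the marker, and only the user’s question follows it.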

By leveraging prompt caching, we can turn a slow, expensive AI feature into a highly responsive, cost-effective product that your customers actually want to use.

About Author

Shailesh Sharma! I help PMs and business leaders excel in Product, Strategy, and AI using First Principles Thinking. For more, check out my AI Product Management Course, PM Interview Mastery Course, Cracking Strategy, and other Resources