Why Nvidia Wants This So Badly

Future of Computing

Before we dive deep into the future of computing, check out our AI Eval Series:

Part 1: We created a Golden Dataset

Part 2: Deterministic Evals

Part 3: LLM as Judge

Part 4: Problem of Retrieval and Generation

When we think about AI infrastructure, the first names that come to mind are Nvidia and GPUs.

For the past few years, Nvidia has provided the hardware that makes modern AI possible. However, the world of AI is changing, and a recent deal involving Groq shows us exactly where the future is headed.

Nvidia is spending roughly $20 billion to acquire the assets and the engineering team of Groq. This is not a traditional company purchase. It is a strategic move to own a specific type of technology and the people who built it.

To understand why this deal matters, we must look at AI from first principles.

Two Stages of AI: Training vs Inference

Every AI system has two distinct stages:

Training: You feed a model massive amounts of data so it can learn patterns. This requires enormous computing power and can take weeks or months. Currently, Nvidia is the leader here because its chips can process massive amounts of data in parallel.

Inference: When you type a prompt into a chatbot and it replies, that is inference. It means running a model that has already been trained.

While training was the focus for the last two years, the future of the business is inference. Training happens once, but inference happens every time a human or a machine uses AI.

By 2028, the demand for running these models is expected to far exceed the demand for building them.
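To see why "training happens once, inference happens every time" matters, here is a minimal back-of-the-envelope sketch. Every number in it is a hypothetical placeholder chosen for easy arithmetic, not a figure from Nvidia, Groq, or any real model.

```python
# Hypothetical numbers for illustration only; not measurements of any real model.
TRAINING_COST_UNITS = 100_000_000   # one-time compute spent training the model
COST_PER_REQUEST_UNITS = 1          # compute spent answering a single request
REQUESTS_PER_DAY = 5_000_000        # assumed daily usage after deployment


def cumulative_inference_cost(days: int) -> int:
    """Total inference compute after `days` of serving, in training-cost units."""
    return COST_PER_REQUEST_UNITS * REQUESTS_PER_DAY * days


def break_even_day() -> int:
    """First day on which cumulative inference compute reaches the training cost."""
    day = 1
    while cumulative_inference_cost(day) < TRAINING_COST_UNITS:
        day += 1
    return day


print("break-even day:", break_even_day())
print("inference cost after one year:", cumulative_inference_cost(365))
```

With these made-up inputs, serving catches up with the one-time training cost in under a month and keeps growing for as long as the model is in use. The exact crossover depends entirely on the assumed usage.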

The Limitation of Current Architecture

Nvidia’s chips (GPUs) are designed to be general-purpose. They are excellent at many different tasks, but they have a specific limitation when it comes to speed.

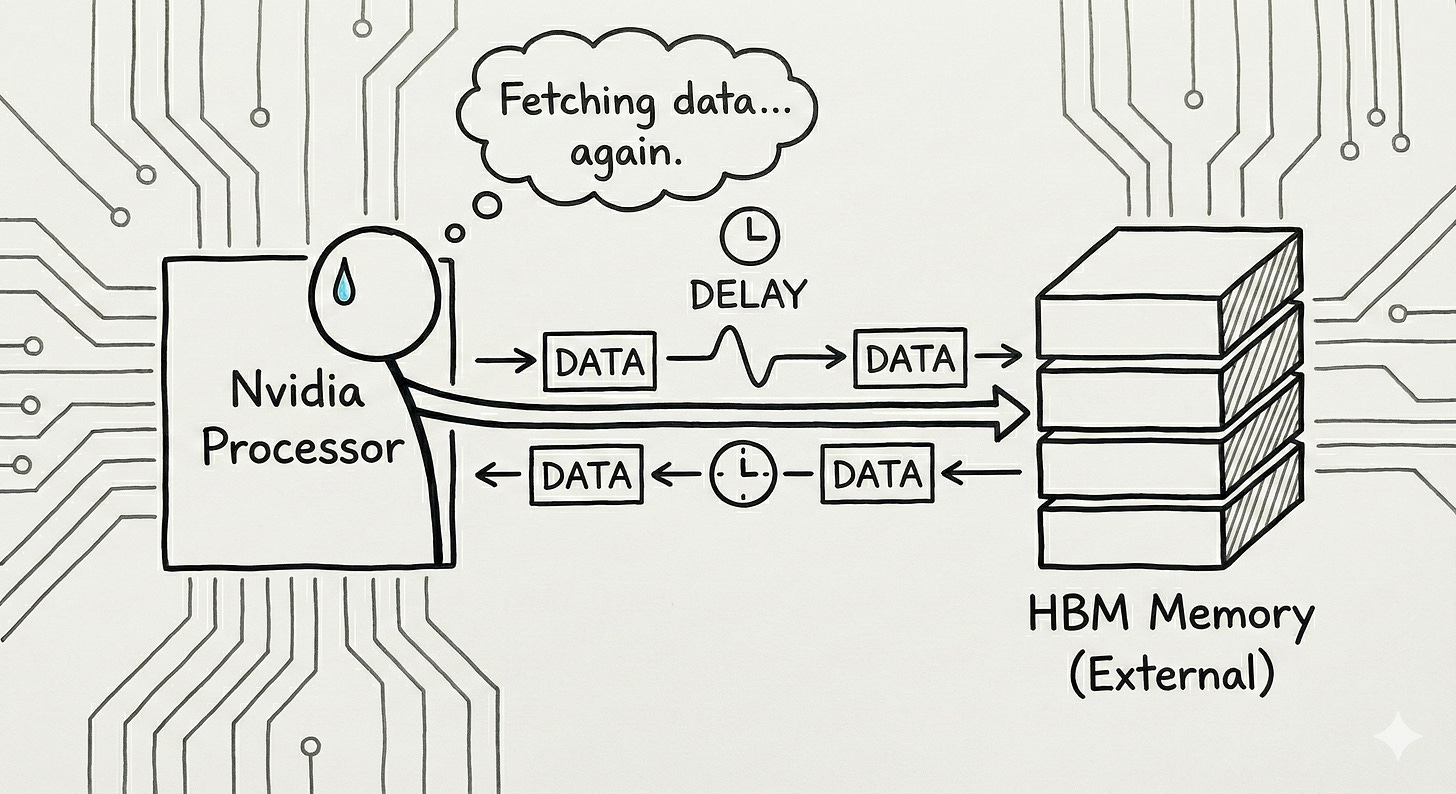

Nvidia chips use a type of memory called High Bandwidth Memory (HBM). This memory sits next to the processor. Every time the chip needs to calculate something, it must fetch data from that memory, process it, and send it back. Even though this happens fast, there is still a physical delay.
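One common simplification makes this delay concrete: if generating each token requires streaming all of the model's weights from memory once, then memory bandwidth puts a ceiling on how fast a single response can be generated. The sketch below applies that simplification. The function name, model size, and bandwidth values are all assumptions for illustration, not the specifications of any real chip.

```python
def max_tokens_per_second(model_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Upper bound on single-stream generation speed, assuming every token
    requires reading all model weights from memory exactly once."""
    if model_bytes <= 0 or bandwidth_bytes_per_s <= 0:
        raise ValueError("model size and bandwidth must be positive")
    return bandwidth_bytes_per_s / model_bytes


# Hypothetical values; not the specs of any real chip or model.
MODEL_BYTES = 10e9            # a model whose weights occupy 10 GB
BASELINE_BANDWIDTH = 2e12     # memory that delivers 2 TB/s
FASTER_BANDWIDTH = 20e12      # memory that delivers ten times more

baseline_limit = max_tokens_per_second(MODEL_BYTES, BASELINE_BANDWIDTH)
faster_limit = max_tokens_per_second(MODEL_BYTES, FASTER_BANDWIDTH)
print(f"baseline bound: {baseline_limit:.0f} tokens/s")
print(f"faster bound:   {faster_limit:.0f} tokens/s")
```

Under this simplified model the ceiling scales directly with memory bandwidth, which is why where the memory sits matters so much for response speed. Real systems batch requests and overlap work, so treat this as a rough bound rather than a prediction.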

Furthermore, Nvidia chips use hardware schedulers. These are like traffic controllers that manage how data moves inside the chip while it is working. This makes the chip flexible, but it also makes the processing time unpredictable.

For a chatbot, a small delay is fine. For a robot that needs to move in real time, an unexpected delay can mean failure.
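The difference between "fast on average" and "fast every time" can be illustrated with a toy simulation. It does not model any real chip or scheduler. It only compares a pipeline whose step time varies from run to run with one whose step time is fixed, and counts how often each misses a deadline. All constants are hypothetical.

```python
import random

DEADLINE_MS = 11.0      # hypothetical control-loop deadline
BASE_MS = 8.0           # hypothetical minimum time every step needs
MAX_JITTER_MS = 4.0     # hypothetical extra delay that varies per step
STEPS = 10_000


def count_deadline_misses(latencies_ms, deadline_ms):
    """Number of steps that finished after the deadline."""
    return sum(1 for t in latencies_ms if t > deadline_ms)


rng = random.Random(0)  # fixed seed so the run is repeatable
variable = [BASE_MS + rng.uniform(0.0, MAX_JITTER_MS) for _ in range(STEPS)]
fixed = [BASE_MS + MAX_JITTER_MS / 2] * STEPS  # same expected time, no variation

variable_misses = count_deadline_misses(variable, DEADLINE_MS)
fixed_misses = count_deadline_misses(fixed, DEADLINE_MS)
print("misses with variable timing:", variable_misses)
print("misses with fixed timing:   ", fixed_misses)
```

Both pipelines take about 10 ms per step on average, yet only the variable one ever misses the deadline. For a chatbot those misses are invisible; for a control loop they are the whole problem.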

The Groq Difference

Groq approached the problem differently. They built a Language Processing Unit (LPU). Instead of using external memory, they use Static RAM (SRAM), which is built directly onto the processing chip itself.

In first-principles terms, if the data does not have to travel to an outside memory source, it can be processed much faster. Groq also removed the hardware traffic controllers. Instead, their software plans the exact path of every piece of data before the work even starts. This is called deterministic computing.
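The idea of planning everything before the work starts can be sketched in a few lines. This is not Groq's compiler, only a toy illustration of static scheduling: each operation has a known duration, and a start cycle is assigned to every operation ahead of time, so the total latency is known before anything runs. The operation names and cycle counts are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical operations: name -> (cycles it takes, operations it depends on)
OPS = {
    "load_weights": (4, []),
    "load_input": (2, []),
    "matmul": (8, ["load_weights", "load_input"]),
    "activation": (1, ["matmul"]),
    "store_output": (2, ["activation"]),
}


def compile_static_schedule(ops):
    """Assign every operation a fixed start cycle before anything runs.

    Each operation starts as soon as all of its dependencies have finished.
    This assumes there are always enough hardware units available, so an
    operation never waits for a free unit.
    """
    graph = {name: deps for name, (_, deps) in ops.items()}
    start = {}
    for name in TopologicalSorter(graph).static_order():
        _, deps = ops[name]
        start[name] = max((start[d] + ops[d][0] for d in deps), default=0)
    total = max(start[name] + ops[name][0] for name in ops)
    return start, total


schedule, total_cycles = compile_static_schedule(OPS)
for name, cycle in sorted(schedule.items(), key=lambda item: item[1]):
    print(f"cycle {cycle:>2}: {name}")
print("total cycles:", total_cycles)
```

Because nothing is decided at run time, running the same plan twice takes exactly the same number of cycles. The trade-off is flexibility: the plan only works if every duration is known in advance.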

The result is extreme speed.

Why Nvidia Wants This

Nvidia is making this move for three main reasons:

The Shift to Inference: As AI becomes a consumer product, the cost of running it becomes the most important factor. Groq’s technology allows for faster and cheaper inference.

The Robotics Future: Future technologies like humanoid robots and voice assistants cannot have delays. They need instant responses to interact with the physical world. Groq’s technology provides that instant response.

Removing Competition: The founder of Groq, Jonathan Ross, previously designed Google’s AI chips. He is one of the few people in the world who knows how to build a real alternative to Nvidia. By hiring him and his team, Nvidia brings that expertise in-house.

Nvidia structured this as an asset acquisition and a hiring move rather than a full merger.

This is a smart business move to limit regulatory friction. By not buying the entire legal entity of Groq, Nvidia can integrate the technology and the talent immediately instead of waiting years for regulators to review a full merger.

The Future of Computing

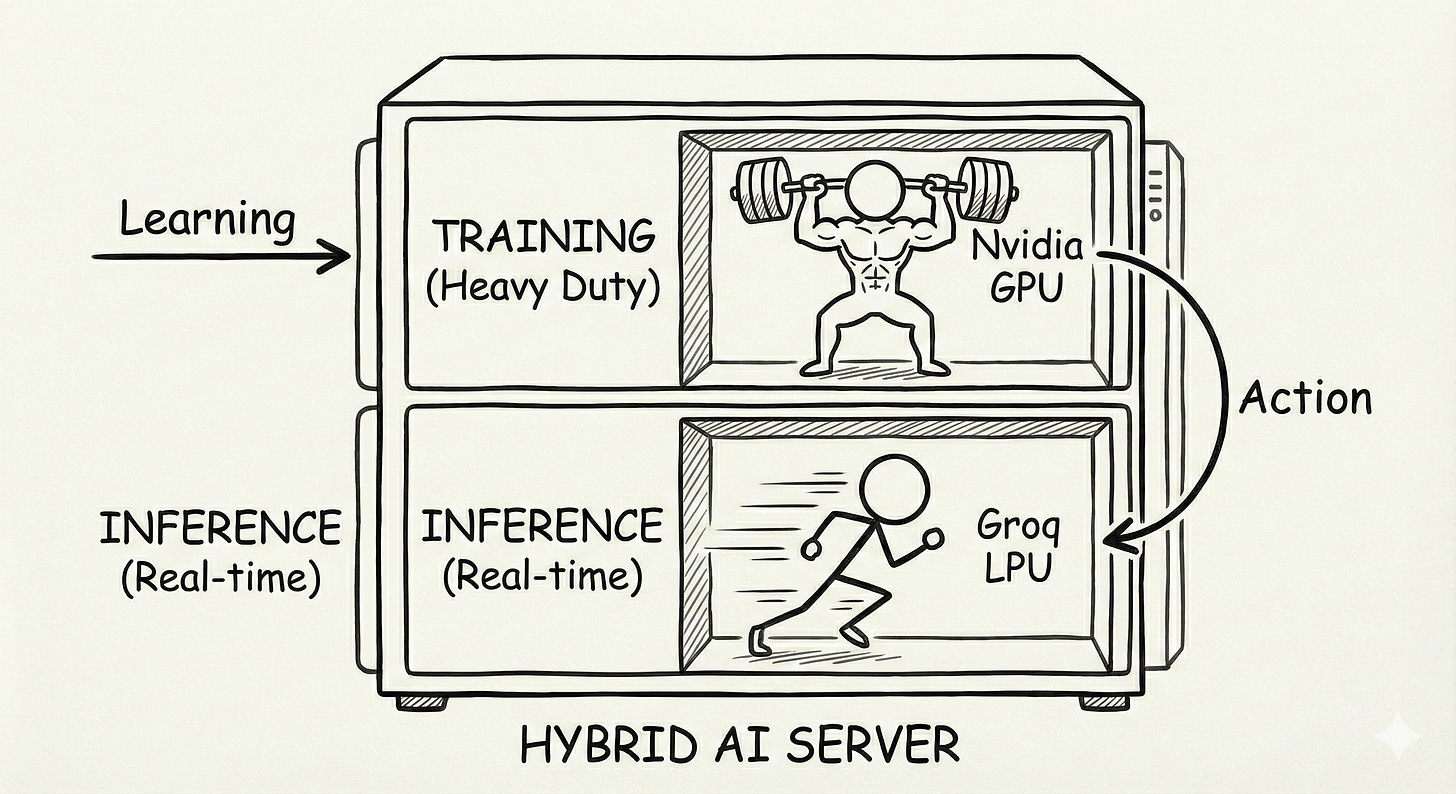

The future of AI infrastructure will not rely on a single type of chip. Instead, we are moving toward a hybrid system.

Computers will use standard Nvidia GPUs for the heavy work of training and complex processing. They will use the newly acquired Groq technology for the fast work of generating real-time responses.
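In software terms, a hybrid system like this comes down to a routing decision. The sketch below is purely illustrative: the pool names, the `Job` fields, and the 100 ms threshold are hypothetical and do not describe any actual Nvidia or Groq product.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Backend(Enum):
    GPU_POOL = "gpu_pool"   # throughput-oriented hardware
    LPU_POOL = "lpu_pool"   # latency-oriented hardware


@dataclass(frozen=True)
class Job:
    name: str
    is_training: bool
    latency_budget_ms: Optional[float] = None  # None means no real-time deadline


REALTIME_THRESHOLD_MS = 100.0  # hypothetical cutoff for "real-time"


def route(job: Job) -> Backend:
    """Send training and non-urgent work to GPUs, real-time inference to LPUs."""
    if job.is_training:
        return Backend.GPU_POOL
    if job.latency_budget_ms is not None and job.latency_budget_ms <= REALTIME_THRESHOLD_MS:
        return Backend.LPU_POOL
    return Backend.GPU_POOL


for job in (
    Job("pretraining-run", is_training=True),
    Job("voice-assistant-reply", is_training=False, latency_budget_ms=50.0),
    Job("overnight-batch-summaries", is_training=False),
):
    print(f"{job.name} -> {route(job).value}")
```

The point of the sketch is that the two kinds of hardware are complementary rather than competing: the same operator can own both pools and send each job to whichever fits.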

Nvidia’s high-level strategy is to own both sides of this equation. They want to be the company you use to build the AI, and the company you use to run the AI. By acquiring Groq’s assets, they have effectively closed the gap in their own architecture.

Shailesh Sharma! I help PMs and business leaders excel in Product, Strategy, and AI using First Principles Thinking. For more, check out my AI Product Management Course, PM Interview Mastery Course, Cracking Strategy, and other Resources